Managed Metadata fun: Troubleshooting the Taxonomy Update Scheduler…

Hi all

I have opted to post this nugget of info in two formats, to account for different audiences.

For those who have a twitter-sized attention span…

In SharePoint 2010, if you migrate site collections between SharePoint farms and you find that the Taxonomy Update Scheduler timer job is not working (i.e. your managed metadata columns are not being refreshed), try deactivating and reactivating the hidden feature called TaxonomyFieldAdded to make the timer job work again.

…and for the rest of us…

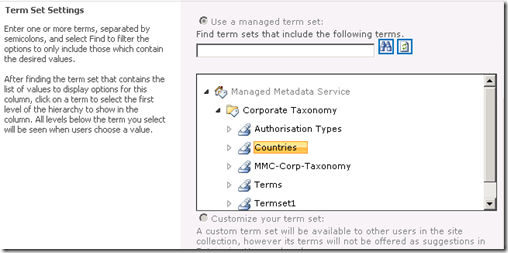

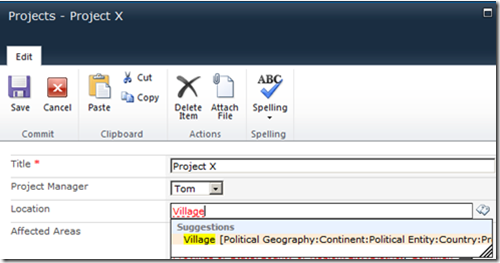

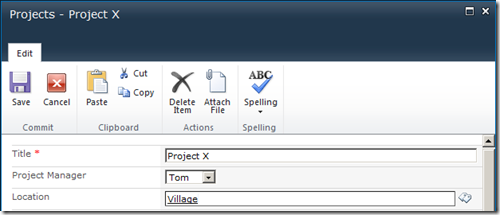

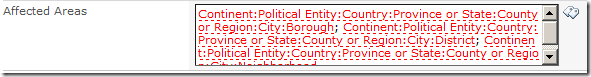

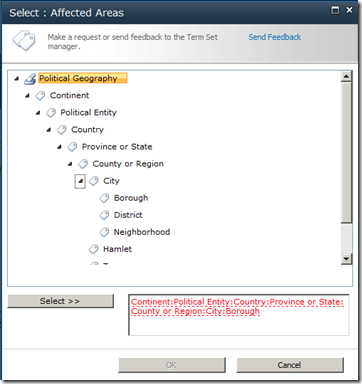

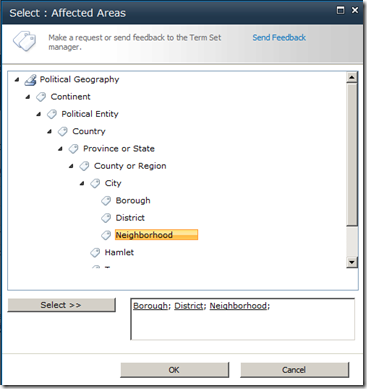

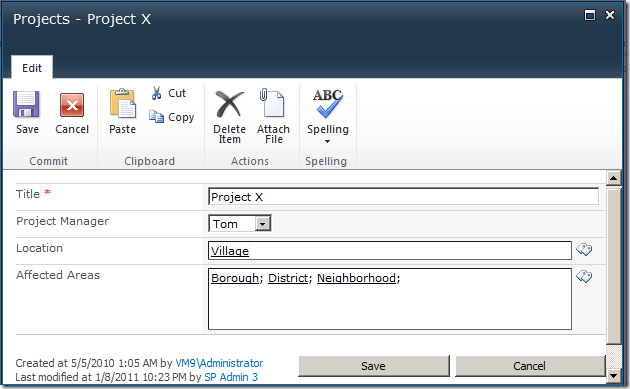

Anyone who has done any significant work with managed metadata in SharePoint 2010 is probably familiar with the TaxonomyHiddenList that is created in each site collection. This hidden list exists to improve the performance of the managed metadata service application, specifically when displaying lists and libraries containing managed metadata columns. SharePoint mega-guru Wictor Wilén wrote up the definitive guide to how it all works a couple of years back so I won’t rehash it here, except to say that the TaxonomyHiddenList functions in a similar way to a cache and, like all caches, every so often it needs a refresh of the data being cached. In this case, a timer job exists called Taxonomy Update Scheduler, which pushes down any term store changes to TaxonomyHiddenList on an hourly basis.
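If you want to see what the cache actually holds, a rough sketch like the following lists the first few entries of the hidden list from the SharePoint 2010 Management Shell. The site URL is a placeholder, and the column names `Title` and `IdForTerm` are my assumption about how the hidden list stores the label and term GUID, so check them against your own list before relying on this.

```powershell
# Sketch only: peek at the cached term labels in TaxonomyHiddenList.
# Assumptions: placeholder URL; "Title" and "IdForTerm" column names.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$siteUrl = "http://portal.mycompany.com.au/sites/site"
$site = Get-SPSite $siteUrl
try {
    # The hidden list lives in the root web of the site collection
    $list = $site.RootWeb.Lists["TaxonomyHiddenList"]

    # Show the cached label and the term GUID for the first ten items
    $list.Items | Select-Object -First 10 | ForEach-Object {
        New-Object PSObject -Property @{
            Label  = $_["Title"]
            TermId = $_["IdForTerm"]
        }
    }
}
finally {
    # Always release the SPSite object
    $site.Dispose()
}
```

Comparing these labels with what the Term Store Management Tool shows is a quick way to tell whether the cache is stale.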

One of Seven Sigma’s clients makes extensive use of managed metadata for both navigation and search and has separate development, test and production farms. Given there are three separate farms, this necessitates not only migrating site collections between the farms periodically, but migrating managed metadata term sets as well. We ended up utilising the built-in content deployment functionality for the site collections, because we have found this method to work well in scenarios where 3rd party tools are not available, where you do not have access to the underlying SQL Server and where custom development has been done with a bit of forethought.

But one of the implications of migrating site collections with managed metadata dependencies between farms is dealing with managed metadata term sets. Given each farm has its own managed metadata service application instance, each will have its own unique term store containing term sets. If one were to export and import terms from one farm to another, the GUIDs of the term sets and each term would not match between farms, and you would experience the sort of symptoms described in this post. Fortunately, the Export-SPMetadataWebServicePartitionData and Import-SPMetadataWebServicePartitionData PowerShell cmdlets save the day as they allow you to back up and restore the term store from farm to farm (I have included a very verbose sample process of how it’s done at the end of this post).

When the Taxonomy Update Scheduler doesn’t update…

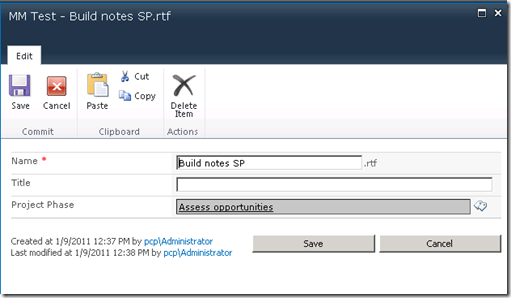

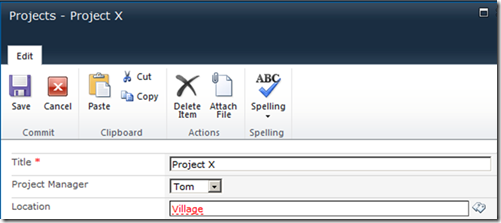

We recently had an issue where changes to the managed metadata term sets were not being refreshed in the TaxonomyHiddenList. Admins would rename terms using the Term Store Management Tool in site settings with no problem. They knew the updates wouldn’t be immediately reflected in the site because it relies on the aforementioned timer job that runs hourly. Nevertheless, they still expected that the changes would take effect eventually, but that never happened. This was particularly annoying in this case because a managed metadata term set was being used in a list which defined the headings and sub headings in the mega menu navigation system we’d developed. As a result, they would end up with navigation headings with the wrong phrase that just wouldn’t reflect the term store changes no matter how long they waited.

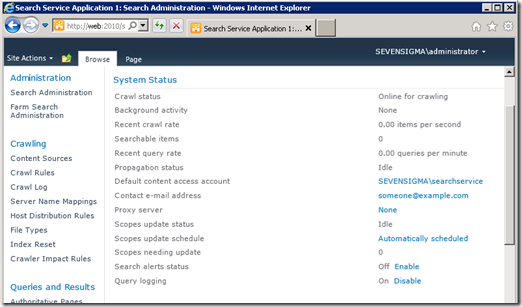

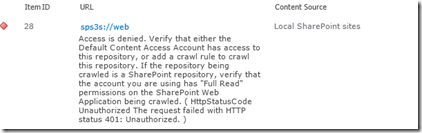

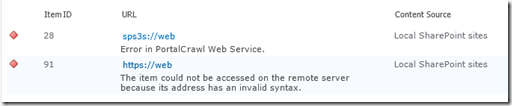

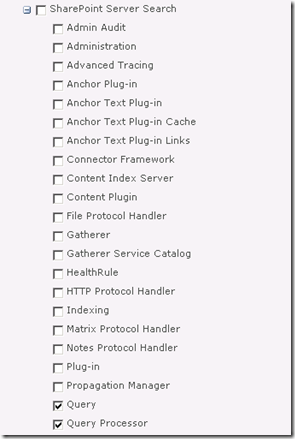

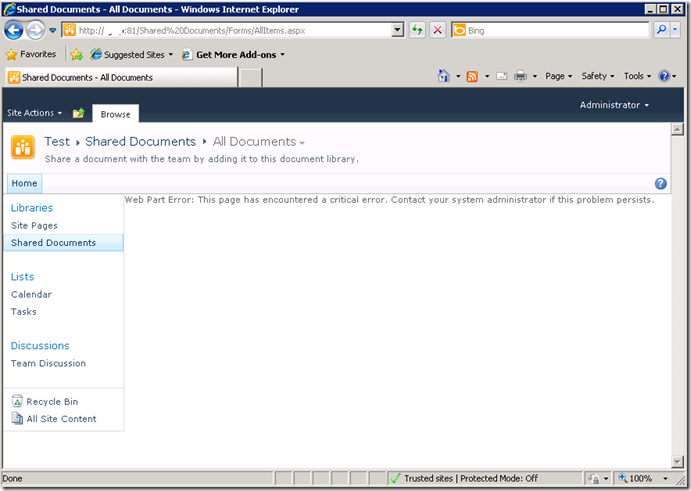

When this was first reported we thought, “ah they just haven’t waited long enough and the timer job hasn’t run” so Dan (one of our consultants who I mentioned in the last post), went and found the appropriate Taxonomy Update Scheduler timer job and ran it manually. He checked the results and found that the terms still had not updated, even though the timer job had completed successfully. He checked out the SharePoint ULS logs at the time the timer job was being run and all appeared fine, no errors or warnings to indicate that it wasn’t working. He then turned up the debug logging to verbose and ran the timer job again. Still no warnings or errors, but it definitely hadn’t affected the TaxonomyHiddenList which still contained the old taxonomy values.
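For reference, the timer job can also be kicked off from PowerShell rather than Central Administration. This is a minimal sketch, assuming the job's display name is exactly "Taxonomy Update Scheduler" and using a placeholder web application URL.

```powershell
# Sketch only: find and start the Taxonomy Update Scheduler job for one web application.
# Assumptions: placeholder URL; the job is matched on its display name.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$webAppUrl = "http://portal.mycompany.com.au"

$job = Get-SPTimerJob -WebApplication $webAppUrl |
    Where-Object { $_.DisplayName -eq "Taxonomy Update Scheduler" }

if ($job) {
    # Start-SPTimerJob only queues the job; it runs asynchronously in the timer service
    $job | Start-SPTimerJob

    # LastRunTime shown here is the previous run; re-query later to confirm the new one
    $job | Select-Object DisplayName, LastRunTime
}
else {
    Write-Warning "No Taxonomy Update Scheduler job found for $webAppUrl"
}
```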

I had previously seen many reports of permission-related issues with the TaxonomyHiddenList, so that was checked and found to be fine. Additionally, there were known issues with the timer job that were fixed in cumulative updates, but they did not apply here. So while we worked out what was going wrong, we had the pressing issue of sorting out the hidden list for our client so their navigation system could function correctly. Dan found some code samples showing how to force an update and put together the following script so that whenever an update was needed we could run this script and force the update. Here is the sample script code:

$siteUrl = Read-Host "Enter the SharePoint site URL (eg: http://portal.mycompany.com.au/sites/site)"

$site = Get-SPSite $siteUrl

[Microsoft.SharePoint.Taxonomy.TaxonomySession]::SyncHiddenList($site)

$site.dispose()

Now be warned with this script – it can hammer your search. This is because this script isn’t the same as what the timer job does. When you run the timer job, it runs rather quickly and if you are monitoring the system resources you wouldn’t really observe any noticeable spikes. But this script (depending on the size of your taxonomy store) runs for a number of minutes at about 15-20% CPU constantly. Dan checked this performance behaviour out using Reflector and found that the method SyncHiddenList in the Microsoft.SharePoint.Taxonomy assembly goes through the entire TaxonomyHiddenList, searches the term store for each matching term’s GUID identifier, and then updates TaxonomyHiddenList with the actual value of that term in the term store. Without going into too much detail, there is quite a bit of enumerating and searching through lists and term stores for terms and it is obviously not very efficient. The timer job on the other hand – judging from the names of the methods it invokes (the ULS shows the timer job making a WCF service call on the MetadataWebService called GetChanges) – instead looks directly for items which have changed and then updates only those items. This is, of course, far more efficient than going through everything in the Term Store/TaxonomyHiddenList. So you have been warned – perhaps avoid running a script like this during business hours because it may make things slower.
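To make the difference concrete, here is a toy model in plain PowerShell. It does not touch SharePoint at all: hashtables stand in for the term store and the hidden list, and the function names and the change log are purely hypothetical. It only illustrates why walking everything costs more than applying a list of changes.

```powershell
# Toy model only: hashtables stand in for the term store and TaxonomyHiddenList.
$termStore  = @{ 'guid-1' = 'Products'; 'guid-2' = 'Services'; 'guid-3' = 'About Us' }
$hiddenList = @{ 'guid-1' = 'Products'; 'guid-2' = 'Servces';  'guid-3' = 'About' }
$changeLog  = @('guid-2', 'guid-3')   # hypothetical list of term IDs changed since the last run

# Full resync: visit every cached entry and look its label up again by GUID.
function Sync-Full($store, $cache) {
    $lookups = 0
    foreach ($id in @($cache.Keys)) {   # @() copies the keys so the cache can be modified
        $cache[$id] = $store[$id]
        $lookups++
    }
    return $lookups
}

# Change-based update: only touch the entries reported as changed.
function Sync-Changes($store, $cache, $changedIds) {
    $lookups = 0
    foreach ($id in $changedIds) {
        if ($cache.ContainsKey($id)) {
            $cache[$id] = $store[$id]
            $lookups++
        }
    }
    return $lookups
}

# Run both strategies against separate copies of the stale cache
$fullLookups   = Sync-Full    $termStore $hiddenList.Clone()
$changeLookups = Sync-Changes $termStore $hiddenList.Clone() $changeLog
"Full resync lookups: $fullLookups; change-based lookups: $changeLookups"
```

With three cached terms the gap is trivial, but the full resync grows with the size of the hidden list while the change-based update grows only with the number of edits.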

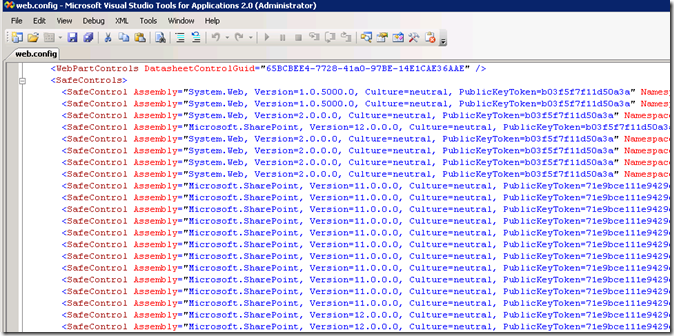

So having said all of that, the logs weren’t hinting at the cause of the failure, so a bit of searching was in order. Dan found this TechNet forum post http://social.technet.microsoft.com/Forums/sharepoint/en-US/51174c11-68b0-4e39-9491-6676d3dbf008/taxonomy-update-scheduler-not-updating and noticed that one respondent (Ed) had used the STSADM command to deactivate and then activate the TaxonomyFieldAdded feature because he’d found something got corrupted when copying a live content DB from his DEV system. As I said earlier, we’d used the built-in content migration tools to do a similar thing. Could this have corrupted things just like Ed had witnessed? It couldn’t hurt to try out his fix and see, so Dan ran the following commands:

stsadm -o deactivatefeature -name TaxonomyFieldAdded -url [SITE_URL]

stsadm -o activatefeature -name TaxonomyFieldAdded -url [SITE_URL]

where [SITE_URL] was replaced with the URL to our SharePoint site. He then used the Term Store Management Tool to rename one of the terms and then ran the Taxonomy Update Scheduler timer job. Sure enough, he saw that the term in the hidden list had changed to what was in the term store. So Ed’s fix worked for us – and it does appear that some corruption of the link between the Term Store and the TaxonomyHiddenList had occurred in similar circumstances of migrating site collections. It just seemed strange that forcing an update with Dan’s workaround script worked, but again that just highlights the differences between the Taxonomy Update Scheduler timer job and the SyncHiddenList method we called in the script.
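If you prefer PowerShell to STSADM, the same deactivate/reactivate cycle can be sketched with the feature cmdlets. We ran the STSADM version, so treat this as an untested equivalent; the URL is a placeholder.

```powershell
# Sketch only: PowerShell equivalent of the two stsadm commands above.
# Assumption: placeholder site collection URL.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$siteUrl = "http://portal.mycompany.com.au/sites/site"

# Deactivate the hidden feature without the interactive confirmation prompt
Disable-SPFeature -Identity TaxonomyFieldAdded -Url $siteUrl -Confirm:$false

# Reactivate it so the site collection is hooked back up to the timer job
Enable-SPFeature -Identity TaxonomyFieldAdded -Url $siteUrl

# Confirm the feature is active again on the site collection
Get-SPFeature -Identity TaxonomyFieldAdded -Site $siteUrl
```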

Footnote: A slightly verbose term migration process

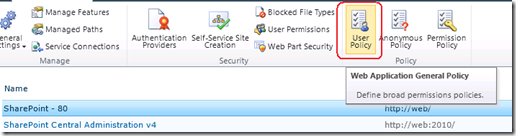

Earlier in this post I said I should show an example of how to migrate a term store between service applications using PowerShell. While the PowerShell commands are quite easy, it can be tricky to get working at first because a couple of pre-requisites have to be met:

- This method can only be used by an account with local administration access to the entire farm (hopefully you have a SharePoint admin type account that has local admin access to all servers)

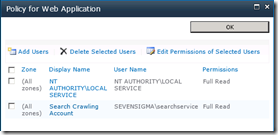

- Despite this process being run via PowerShell on an application server on a farm, most of the activity is performed on the destination farm SQL Server via stored procedures. Accordingly, the account you are using for SharePoint service applications on the destination farm temporarily needs bulk import rights on SQL Server which it does not have by default.

- This can be achieved by temporarily granting the account elevated access. (For example: granting the service application account sysadmin role in SQL)

Exporting the term store

On one of the web/application servers of the source SharePoint farm, we first export the term store to a backup file via PowerShell:

- 1) Determine where the export file will be saved to. Note: This must be a UNC path (eg \\server\share) on the SQL Server for the destination farm. Ensure that all SharePoint farm and service application accounts have write access to this share and the folder. (Note: If this share is temporarily created and deleted after use, granting full control to the share may be deemed appropriate.)

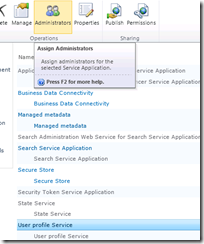

- 2) Using the SharePoint 2010 Management Shell on the source server, determine the ID of the managed metadata service application (in the example below, the matching service application is the “Managed Metadata ...” row). The GUID listed in the Id column is the important bit.

    PS C:\> Get-SPServiceApplication

    DisplayName          TypeName             Id
    -----------          --------             --
    State Service App... State Service        833e41ed-9574-4f6b-978b-787a087735e1
    Managed Metadata ... Managed Metadata ... 479cd8d7-af32-4f85-adb1-9cdd858ed3e6
    Web Analytics Ser... Web Analytics Ser... 4b54f5d4-2148-4cbf-ad24-b1e49b0eb7e5

- 3) Create a PowerShell object bound to the managed metadata service application ID.

PS C:\Users\SP_Admin> $mms = Get-SPServiceApplication -Identity 479cd8d7-af32-4f85-adb1-9cdd858ed3e6

- 4) Confirm that the correct service application is selected by examining the properties of the object. Confirm the service type is “Managed Metadata Service”

    PS C:\> $mms.TypeName
    Managed Metadata Service

- 5) Using PowerShell, determine the ID of the managed metadata service application proxy (in the example below, the matching proxy is the “Managed Metadata ...” row). The GUID listed in the Id column is the important bit.

    PS C:\> Get-SPServiceApplicationProxy

    DisplayName          TypeName             Id
    -----------          --------             --
    State Service App... State Service Proxy  24f87eba-85af-4938-98f4-3002ff0da95b
    Managed Metadata ... Managed Metadata ... 373ad4c0-cdd2-4db8-9dd8-a0c5c8d1df41
    Secure Store Serv... Secure Store Serv... 7ac50f4f-addc-466e-a695-9a37890058f5

- 6) Create an object bound to the managed metadata service application proxy.

PS C:\> $mmp = Get-SPServiceApplicationProxy -Identity 373ad4c0-cdd2-4db8-9dd8-a0c5c8d1df41

- 7) Confirm that the correct service application proxy is selected by examining the properties of the object. Confirm the service type is “Managed Metadata Service Connection”

    PS C:\> $mmp.TypeName
    Managed Metadata Service Connection

- 8) Export the current Managed metadata term store to the UNC path specified in step 1, utilising the service application object and service application proxy object created in steps 3 and 6.

PS C:\> Export-SPMetadataWebServicePartitionData -Identity $mms.id -ServiceProxy $mmp -Path "\\server\share\termstore.bak"
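If you would rather not copy GUIDs around by hand, export steps 2 to 8 can be collapsed into a short script. This is only a sketch: it assumes the farm has exactly one managed metadata service application and one matching proxy (selected by the TypeName values shown above), and the UNC path is a placeholder.

```powershell
# Sketch only: export the term store without copying GUIDs by hand.
# Assumptions: one managed metadata service app and one proxy in the farm; placeholder path.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$backupPath = "\\server\share\termstore.bak"

# Select the service application and its proxy by type name instead of by ID
$mms = @(Get-SPServiceApplication |
    Where-Object { $_.TypeName -eq "Managed Metadata Service" })
$mmp = @(Get-SPServiceApplicationProxy |
    Where-Object { $_.TypeName -eq "Managed Metadata Service Connection" })

# Stop if the selection is ambiguous or empty; fall back to -Identity <GUID> in that case
if ($mms.Count -ne 1 -or $mmp.Count -ne 1) {
    throw "Expected exactly one managed metadata service application and one proxy."
}

# Fail early if the share is not reachable from this server
if (-not (Test-Path (Split-Path $backupPath -Parent))) {
    throw "Cannot reach the folder for $backupPath"
}

Export-SPMetadataWebServicePartitionData -Identity $mms[0].Id -ServiceProxy $mmp[0] -Path $backupPath
```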

Importing the term store

The following steps import the term store from the backup file. These steps are performed on an application server on the destination farm:

- 1) Determine where the export file will be restored from. Note: This will be the UNC path (eg \\server\share) used for the export process documented in step 8 of the export process.

- 2) Using the SharePoint 2010 Management Shell on the destination server, determine the ID of the managed metadata service application (in the example below, the matching service application is the “Managed Metadata ...” row). The GUID listed in the Id column is the important bit.

    PS C:\> Get-SPServiceApplication

    DisplayName          TypeName             Id
    -----------          --------             --
    State Service App... State Service        833e41ed-9574-4f6b-978b-787a087735e1
    Managed Metadata ... Managed Metadata ... 479cd8d7-af32-4f85-adb1-9cdd858ed3e6
    Search Service Ap... Search Service Ap... a3434173-fc5d-464d-a05c-aeda41d4959f

- 3) Create an object bound to the managed metadata service application.

PS C:\> $mms = Get-SPServiceApplication -Identity 479cd8d7-af32-4f85-adb1-9cdd858ed3e6

- 4) Confirm that the correct service application is selected…

    PS C:\> $mms.DisplayName
    Managed Metadata Service Application
    PS C:\> $mms.TypeName
    Managed Metadata Service

- 5) Using PowerShell, determine the ID of the managed metadata service application proxy (in the example below, the matching proxy is the “Managed Metadata ...” row). The GUID listed in the Id column is the important bit.

    PS C:\> Get-SPServiceApplicationProxy

    DisplayName          TypeName             Id
    -----------          --------             --
    State Service App... State Service Proxy  24f87eba-85af-4938-98f4-3002ff0da95b
    Managed Metadata ... Managed Metadata ... 373ad4c0-cdd2-4db8-9dd8-a0c5c8d1df41
    WSS_UsageApplication Usage and Health ... c8de2c82-8ae5-41ab-bf58-9724c48776d5

- 6) Create an object bound to the managed metadata service application proxy and confirm that the correct service application proxy is selected…

    PS C:\> $mmp = Get-SPServiceApplicationProxy -Identity 373ad4c0-cdd2-4db8-9dd8-a0c5c8d1df41
    PS C:\> $mmp.DisplayName
    Managed Metadata Service Application
    PS C:\> $mmp.TypeName
    Managed Metadata Service Connection

- 7) Import the Managed metadata configuration to the destination farm store. Perform this command on the destination farm, specifying the UNC path specified in step 1, utilising the service application object and service application proxy object.

Import-SPMetadataWebServicePartitionData -Identity $mms.id -ServiceProxy $mmp -Path "\\server\share\termstore.bak" -OverwriteExisting

- 8) Undo the sysadmin permissions on the SharePoint Service Application Account on the SharePoint DB server as the permissions are no longer required.
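Import steps 2 to 7 can be scripted in one go on the destination farm. Again, this is a sketch rather than the exact commands we ran: it assumes a single managed metadata service application and proxy on the destination farm, and the path is a placeholder for wherever the export was written.

```powershell
# Sketch only: import the exported term store on the destination farm.
# Assumptions: one managed metadata service app and one proxy; placeholder path.
Add-PSSnapin Microsoft.SharePoint.PowerShell -ErrorAction SilentlyContinue

$backupPath = "\\server\share\termstore.bak"

# Make sure the backup file written by the export is actually there
if (-not (Test-Path $backupPath)) {
    throw "Backup file not found: $backupPath"
}

$mms = @(Get-SPServiceApplication |
    Where-Object { $_.TypeName -eq "Managed Metadata Service" })
$mmp = @(Get-SPServiceApplicationProxy |
    Where-Object { $_.TypeName -eq "Managed Metadata Service Connection" })

if ($mms.Count -ne 1 -or $mmp.Count -ne 1) {
    throw "Expected exactly one managed metadata service application and one proxy."
}

# -OverwriteExisting replaces whatever is currently in the destination term store
Import-SPMetadataWebServicePartitionData -Identity $mms[0].Id -ServiceProxy $mmp[0] -Path $backupPath -OverwriteExisting
```

Remember that the elevated SQL permissions from the prerequisites still need to be in place while this runs, and removed afterwards as per step 8.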

Thanks for reading (and once again thanks Dan Wale for nailing this issue)

Paul Culmsee