Trials or tribulation? Inside SharePoint 2013 workflows–Part 10

Hi there and welcome back to my series of articles that puts a real-world viewpoint on SharePoint 2013 workflow capabilities. This series is pitched more at Business Analysts, SharePoint Hackers and generally anyone who might be termed a citizen developer. It shows the highs and lows of out-of-the-box SharePoint Designer workflows, and hopefully helps organisations make an informed decision about whether to use what SharePoint provides or move to the 3rd party landscape.

By now you should be well aware of some of the useful new workflow capabilities such as stages, looping, support for calling web services and parsing the data via dictionary objects. You also now understand the basics of REST/oData and CAML. At the end of the last post, we learnt that it is possible to embed CAML queries into REST/oData, which gets around the issue of not being able to filter lists via managed metadata columns. We proved this could be done, but we did not try it with a real CAML query that filters on a managed metadata column. It is now time to rectify this.

Building CAML queries

Now if you are a SharePoint developer worth your salt, you already know CAML, because there are mountains of documentation on this topic on MSDN as well as various blogs. But a useful shortcut for all you non-coders out there is to make use of a free tool called CAMLDesigner 2013. This tool, although unstable at times, is really easy to use, and in this section I will show you how I used it to create the CAML XML we need to filter the Process Owners list via the Organisation column.

After you have downloaded and installed CAMLDesigner, follow these steps to build your query.

Step 1:

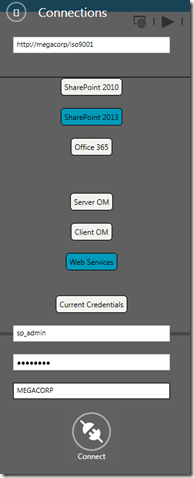

Start CAMLDesigner 2013 and on the home screen, click the Connections menu.

Step 2:

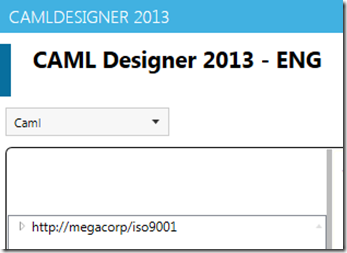

In the connections screen that slides out from the right, enter http://megacorp/iso9001 into the top textbox, then click the SharePoint 2013 and Web Services buttons. Enter the credentials of a site administrator account and then click the Connect icon at the bottom. If you successfully connect to the site, CAMLDesigner will show the site in the left hand navigation.

Step 3:

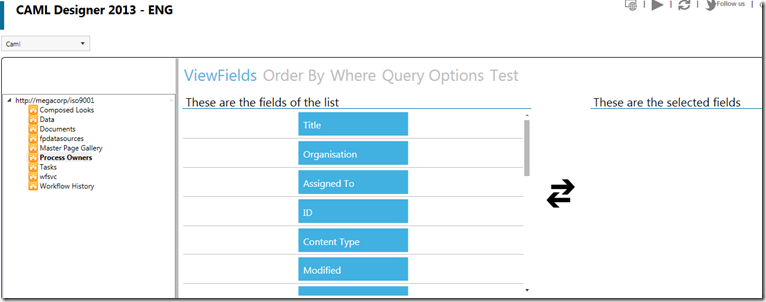

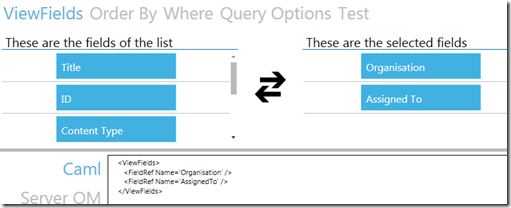

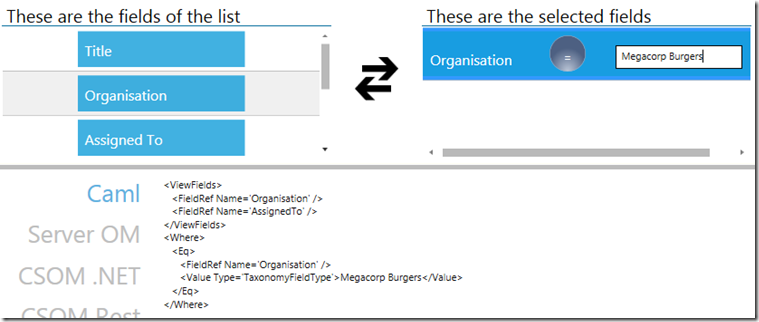

Click the arrow to the left of the Megacorp site and find the Process Owners list. Click it, and all of the fields in the list will be displayed as blue boxes below the "These are the fields of the list" section.

Step 4:

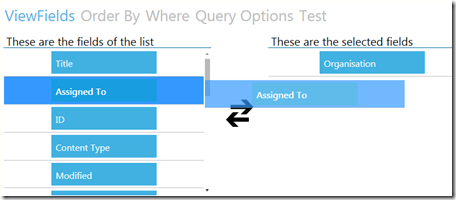

Drag the Organisation column to the "These are the selected fields" section to the right. Then do the same for the Assigned To column. If you look closely at the second image, you will see that the CAML XML is already being built for you below.

Step 5:

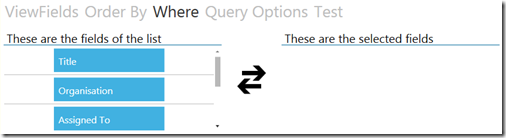

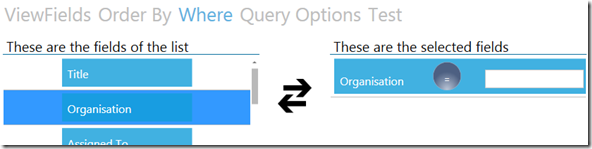

Now click on the Where menu item above the columns. Drag the Organisation column across to the "These are the selected fields" section. As you can see in the second image below, once dragged across, a textbox appears, along with a blue button with an operator. Also take note of the CAML XML being built below. You can see that it has added a <Where></Where> section.

Step 6:

In the textbox in the Organisation column you just dragged, type in one of the Megacorp organisations, e.g. Megacorp Burgers. Note the XML changes…

Step 7:

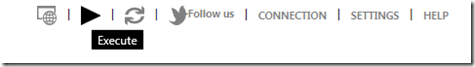

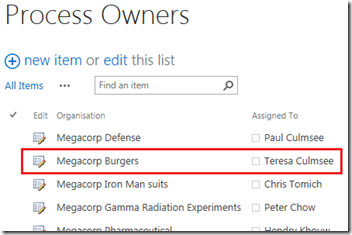

Click the Execute button (the Play icon across the top). The CAML query will be run, and any matching data will be returned. In the example below, you can see that the user Teresa Culmsee is the process owner for Megacorp Burgers.

Step 8:

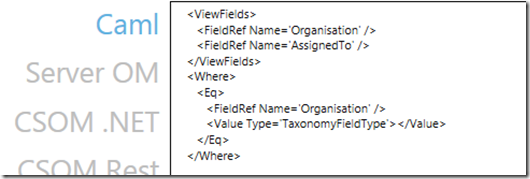

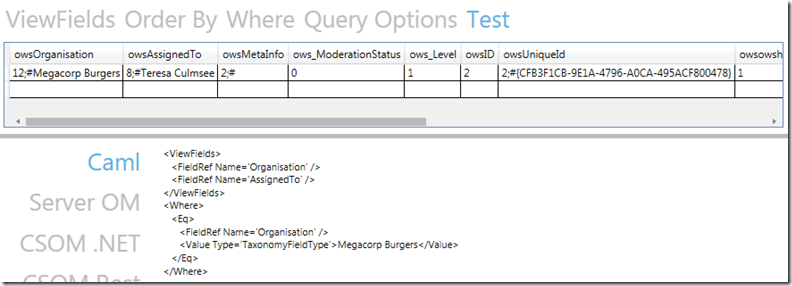

Copy the XML from the window to the clipboard. We now have the XML we need to add to the REST web service call. Exit CAMLDesigner 2013.

Building the REST query…

Armed with your newly minted CAML XML as shown below, we need to return to Fiddler and craft it into the final URL.

<ViewFields> <FieldRef Name='Organisation' /> <FieldRef Name='AssignedTo' /> </ViewFields> <Where> <Eq> <FieldRef Name='Organisation' /> <Value Type='TaxonomyFieldType'>Megacorp Burgers</Value> </Eq> </Where>
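If you would rather generate this kind of XML than hand-edit it, here is a minimal Python sketch that produces the same shape of query. The function name build_caml and its parameters are my own invention for illustration and are not part of CAMLDesigner or SharePoint. It emits a compact, single-line fragment with single-quoted attributes, which matters because the XML later sits inside a double-quoted value.

```python
from xml.sax.saxutils import escape


def build_caml(view_fields, filter_field, filter_value,
               value_type="TaxonomyFieldType"):
    """Return a one-line CAML fragment (hypothetical helper, not a SharePoint API)."""
    # One <FieldRef> per column we want returned.
    fields = "".join(f"<FieldRef Name='{name}'/>" for name in view_fields)
    # A single equality test on the filter column; escape() guards against
    # characters such as & or < in the value being matched.
    where = (
        "<Where><Eq>"
        f"<FieldRef Name='{filter_field}'/>"
        f"<Value Type='{value_type}'>{escape(filter_value)}</Value>"
        "</Eq></Where>"
    )
    return f"<ViewFields>{fields}</ViewFields>{where}"


caml = build_caml(["Organisation", "AssignedTo"], "Organisation", "Megacorp Burgers")
print(caml)
```

The column names and the TaxonomyFieldType value type are taken straight from the CAMLDesigner output above; anything else you pass in is up to you to verify against your own list.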

As a reminder, the URL that we had working in the last post looked like this:

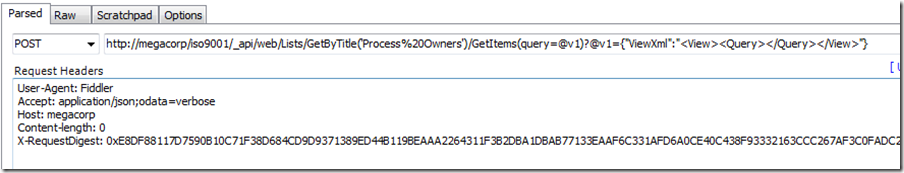

http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')/GetItems(query=@v1)?@v1={"ViewXml":"<View><Query></Query></View>"}

Let’s now munge them together by stripping the carriage returns from the XML and putting it between the <Query> and </Query> sections. This gives us the following large and scary looking URL.

http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')/GetItems(query=@v1)?@v1={"ViewXml":"<View><Query><ViewFields> <FieldRef Name='Organisation' /> <FieldRef Name='AssignedTo' /> </ViewFields> <Where> <Eq> <FieldRef Name='Organisation' /> <Value Type='TaxonomyFieldType'>Megacorp Burgers</Value> </Eq> </Where></Query></View>"}
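For those who prefer to see the munging step spelled out, here is a rough Python sketch of it. The helper name embed_caml is hypothetical; all it does is strip the carriage returns and line feeds from the CAML and drop the result between <Query> and </Query>. Note that it deliberately leaves any spaces alone, so the URL it produces is the same "large and scary" one, warts and all.

```python
BASE = ("http://megacorp/iso9001/_api/web/Lists/"
        "GetByTitle('Process%20Owners')/GetItems(query=@v1)")

# The CAML as it might look when copied from a tool, one element per line.
CAML = """<ViewFields>
<FieldRef Name='Organisation' />
<FieldRef Name='AssignedTo' />
</ViewFields>
<Where>
<Eq>
<FieldRef Name='Organisation' />
<Value Type='TaxonomyFieldType'>Megacorp Burgers</Value>
</Eq>
</Where>"""


def embed_caml(base_url, caml):
    """Hypothetical helper: place a CAML fragment inside the ViewXml parameter."""
    # Drop carriage returns and line feeds so the CAML sits on one line.
    one_line = caml.replace("\r", "").replace("\n", "")
    view_xml = f"<View><Query>{one_line}</Query></View>"
    # The @v1 alias carries a small JSON-style object holding the ViewXml.
    return f'{base_url}?@v1={{"ViewXml":"{view_xml}"}}'


url = embed_caml(BASE, CAML)
print(url)
```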

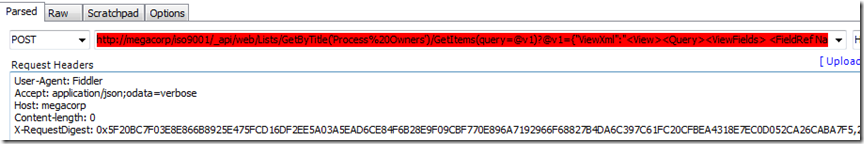

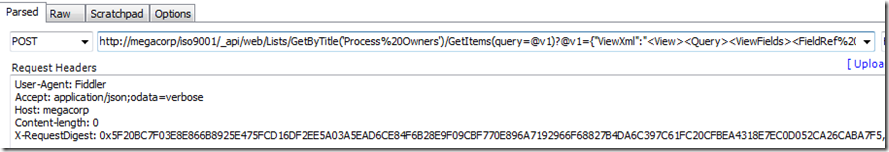

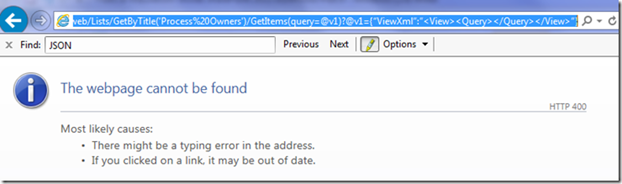

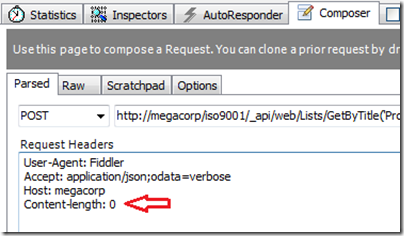

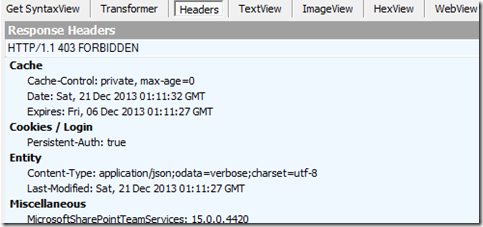

Are we done? Unfortunately not. If you paste this into Fiddler composer, Fiddler will get really upset and display a red warning in the URL textbox…

If, despite Fiddler’s warning, you try to execute this request, you will get a curt response from SharePoint in the form of an HTTP/1.1 400 Bad Request response with the message HTTP Error 400. The request is badly formed.

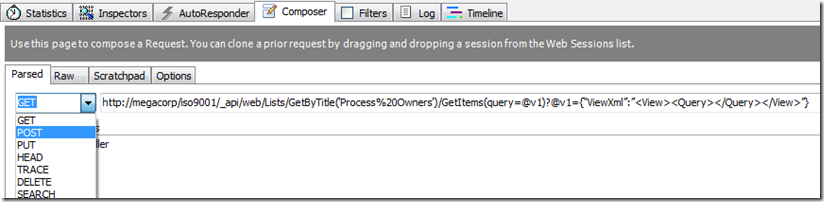

The fact that Fiddler is complaining about this URL before it has even been submitted to SharePoint allows us to work out the issue via trial and error. If you cut out a chunk of the URL, Fiddler is okay with it. For example, this trimmed URL is considered acceptable by Fiddler:

http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')/GetItems(query=@v1)?@v1={"ViewXml":"<View><Query>

But adding this little bit makes it go red again.

http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')/GetItems(query=@v1)?@v1={"ViewXml":"<View><Query><ViewFields> <FieldRef Name='Organisation' />

Any ideas what the issue could be? Well, it turns out that the use of spaces was the issue. I removed every space I could from the URL above, and where a space had to stay, I URL-encoded it as %20. Thus the above URL turned into the URL below, and Fiddler accepted it:

http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')/GetItems(query=@v1)?@v1={"ViewXml":"<View><Query><ViewFields><FieldRef%20Name='Organisation'/>

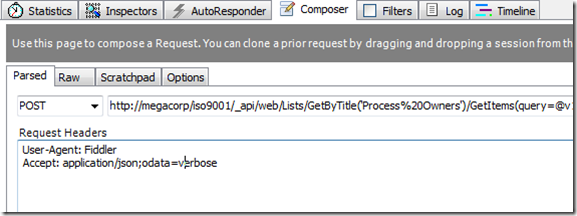

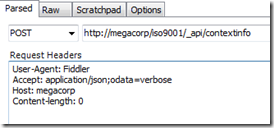

So returning to our original big URL, it now looks like this (and Fiddler is no longer showing me a red angry textbox):

http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')/GetItems(query=@v1)?@v1={"ViewXml":"<View><Query><ViewFields><FieldRef%20Name='Organisation'/><FieldRef%20Name='AssignedTo'/></ViewFields><Where><Eq><FieldRef%20Name='Organisation'/><Value%20Type='TaxonomyFieldType'>Megacorp%20Burgers</Value></Eq></Where></Query></View>"}
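The space clean-up I did by hand can be sketched in a few lines of Python. This is only an illustration of the rule I followed (delete spaces between tags and before "/>", encode whatever is left as %20); the function name tidy_spaces is made up for this example and is not part of Fiddler or SharePoint.

```python
import re

# The "large and scary" URL, spaces and all, built up in pieces for readability.
RAW_URL = (
    "http://megacorp/iso9001/_api/web/Lists/GetByTitle('Process%20Owners')"
    "/GetItems(query=@v1)?@v1={\"ViewXml\":\"<View><Query>"
    "<ViewFields> <FieldRef Name='Organisation' /> <FieldRef Name='AssignedTo' /> </ViewFields> "
    "<Where> <Eq> <FieldRef Name='Organisation' /> "
    "<Value Type='TaxonomyFieldType'>Megacorp Burgers</Value> </Eq> </Where>"
    "</Query></View>\"}"
)


def tidy_spaces(url):
    """Hypothetical helper mirroring the manual clean-up described above."""
    # 1. Whitespace between one tag and the next carries no meaning: remove it.
    url = re.sub(r">\s+<", "><", url)
    # 2. Likewise the space before a self-closing "/>".
    url = re.sub(r"\s+/>", "/>", url)
    # 3. Any space still left is significant (attribute separators, term names),
    #    so percent-encode it rather than delete it.
    return url.replace(" ", "%20")


clean_url = tidy_spaces(RAW_URL)
print(clean_url)
```

Running this against the original big URL should give you the same space-free URL shown above, which is a handy cross-check if you are building these by hand.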

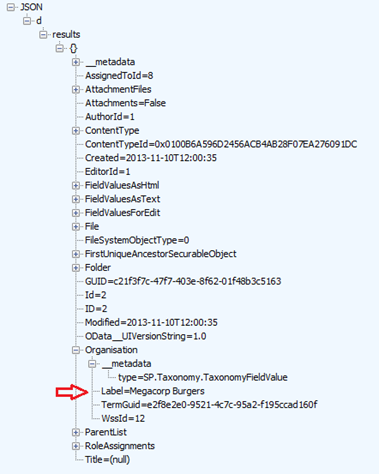

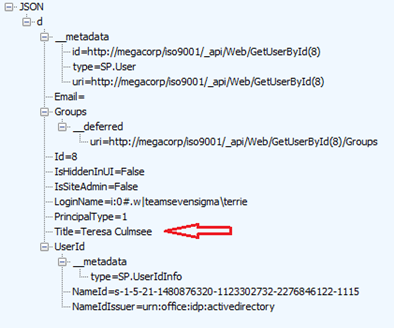

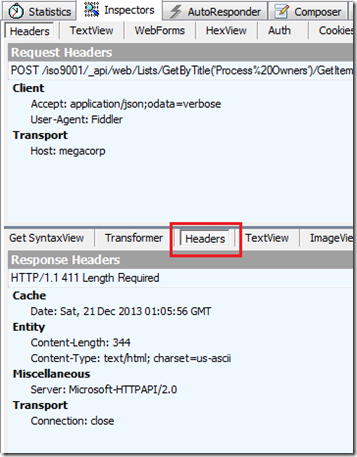

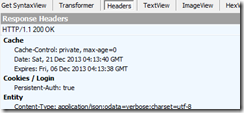

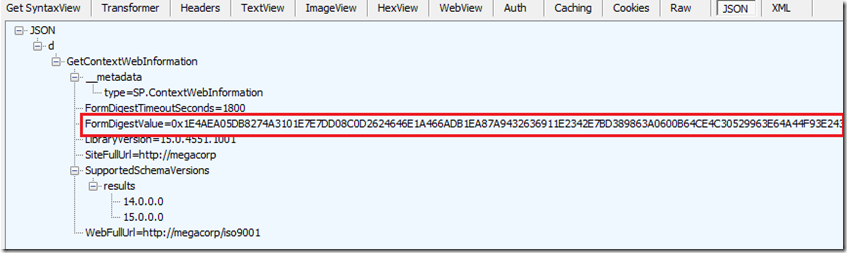

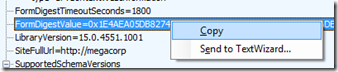

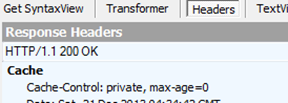

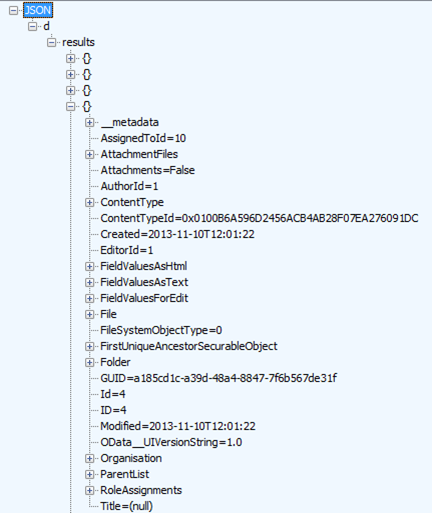

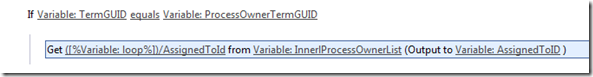

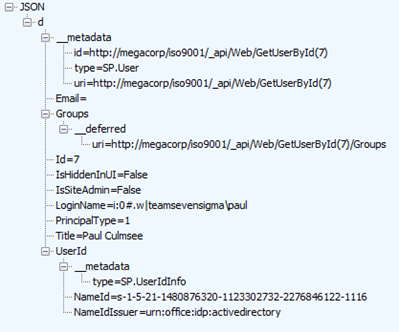

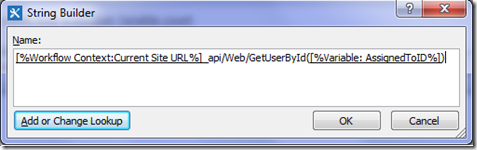

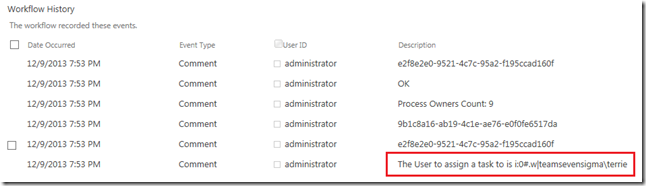

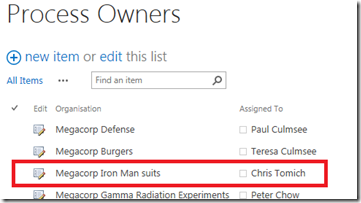

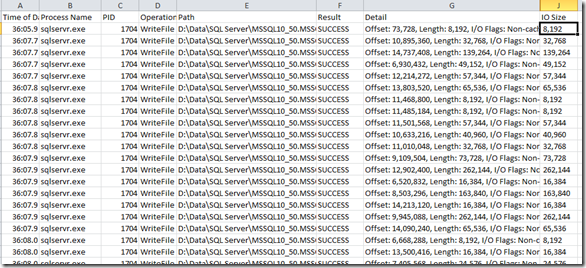

So let’s see what happens. We click the execute button. Wohoo! It works! Below you can see a single matching entry and it appears to be the entry from CAMLDesigner 2013. We can’t tell for sure because the Assigned To column is returned as AssignedToID and we have to call another web service to return the actual username. We covered this issue and the web service to call extensively in part 8 but to quickly recap, we need to pass the value of AssignedToID to the http://megacorp/iso9001/_api/Web/GetUserById() web service. In this case, http://megacorp/iso9001/_api/Web/GetUserById(8) because the value of AssignedToId is 8.

The images below illustrate. The first one shows the Process Owner for Megacorp Burgers. Note the value of AssignedToID is 8. The second image shows what happens when 8 is passed to the GetUserById web service call. Check the Title and LoginName fields.
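To make the two-step lookup concrete, here is a small Python sketch. Both function names are hypothetical. The first simply builds the GetUserById URL from the ID; the second pulls Title and LoginName out of a parsed JSON response, and it assumes the response may be wrapped in a top-level "d" object, as happens when verbose JSON is requested. If your responses are shaped differently, adjust accordingly.

```python
def user_lookup_url(site_url, assigned_to_id):
    """Build the GetUserById URL for a given user ID (hypothetical helper)."""
    # int() rejects anything that is not a plain number before it reaches the URL.
    return f"{site_url.rstrip('/')}/_api/Web/GetUserById({int(assigned_to_id)})"


def summarise_user(payload):
    """Return (Title, LoginName) from a parsed JSON response.

    Assumption: verbose JSON wraps the user in a top-level "d" key;
    if that key is absent, the payload itself is treated as the user.
    """
    entity = payload.get("d", payload)
    return entity.get("Title"), entity.get("LoginName")


print(user_lookup_url("http://megacorp/iso9001", 8))
# prints http://megacorp/iso9001/_api/Web/GetUserById(8)
```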

Conclusion

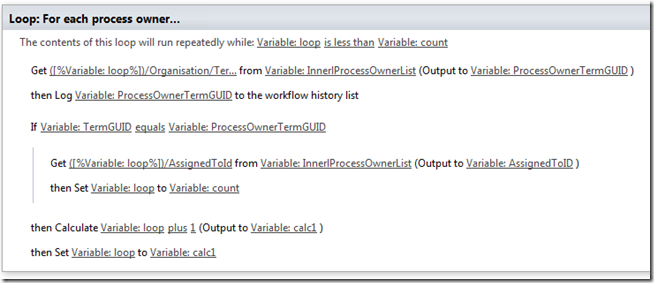

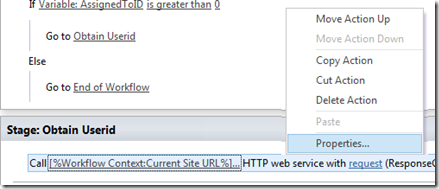

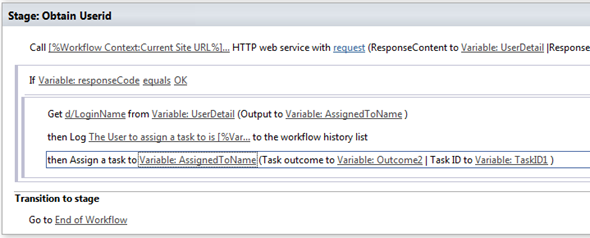

Okay, so now we have our web service URLs all sorted. In the next post we are going to modify the existing workflow. Right now it has four stages:

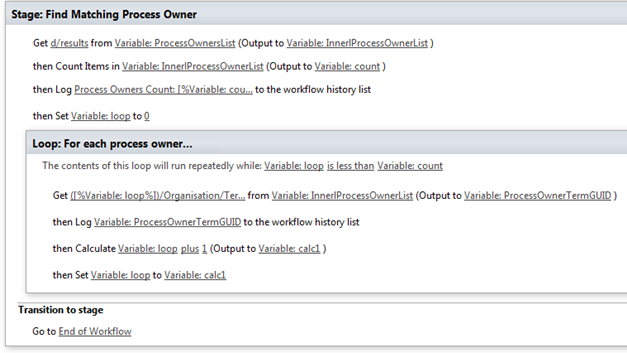

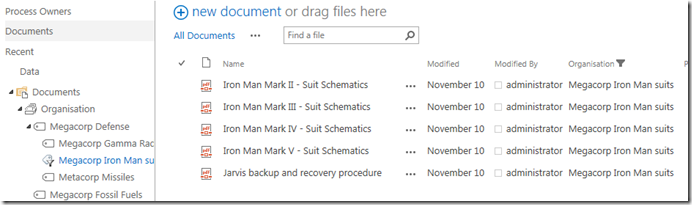

- Stage 1: Obtain Term GUID (extracts the GUID of the Organisation column from the current workflow item in the Documents library and if successful, moves to stage 2)

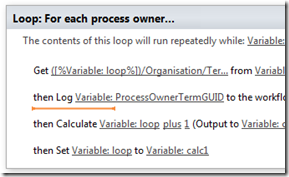

- Stage 2: Get Process Owners (makes a REST web service call to enumerate the Process Owners List and if successful, moves to stage 3)

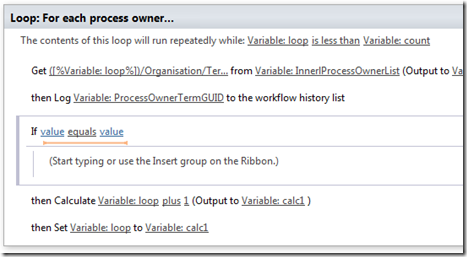

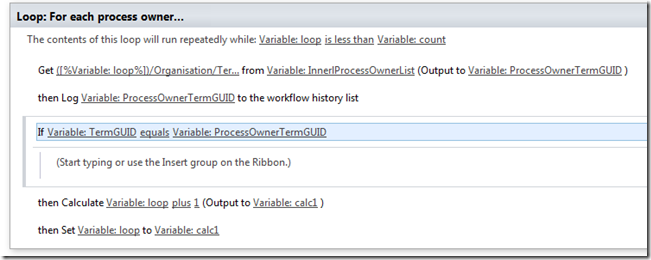

- Stage 3: Find Matching Process Owner (Loops through the process owners and finds the matching organisation from stage 1. For the match, grab the value of AssignedToID and if successful, move to stage 4)

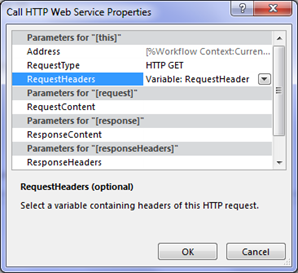

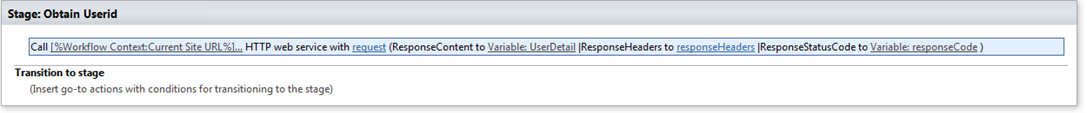

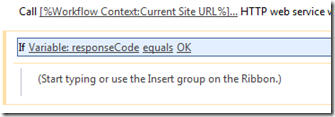

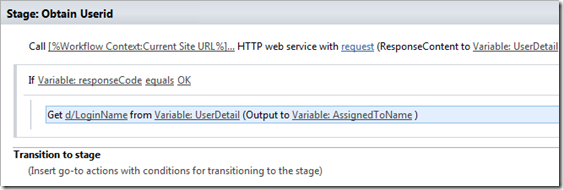

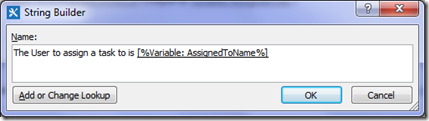

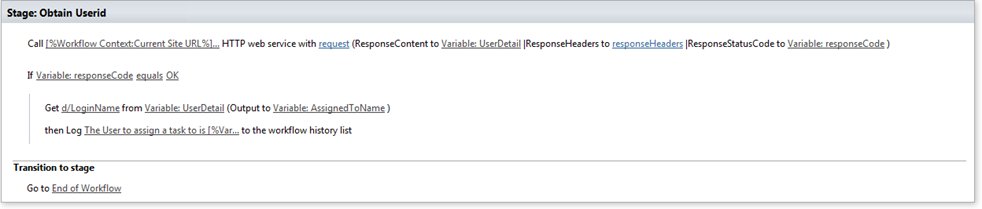

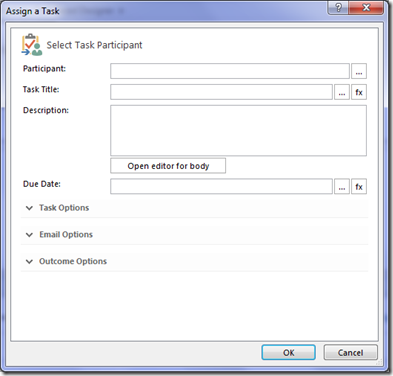

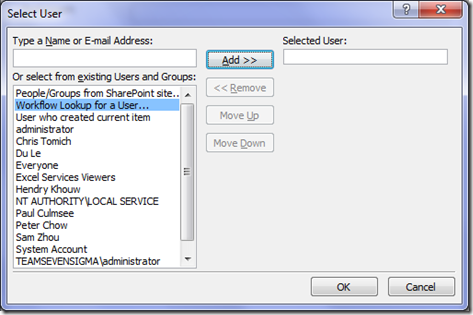

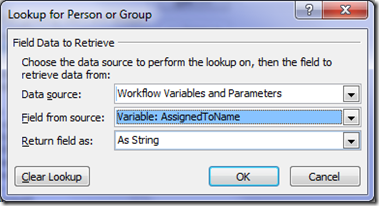

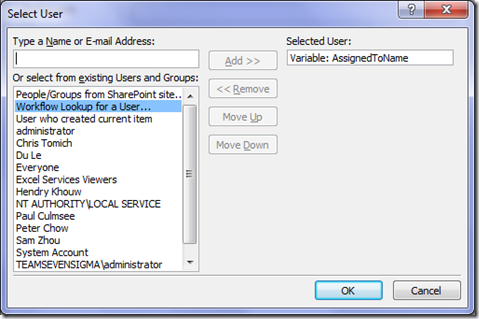

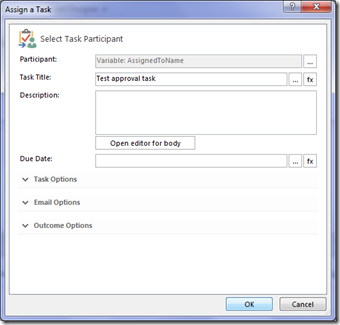

- Stage 4: Obtain UserID (Take the value of AssignedToID and make a REST web service call to return the windows logon name for the user specified by AssignedToID and assign a task to this user)

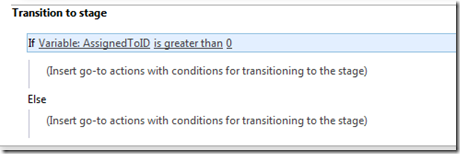

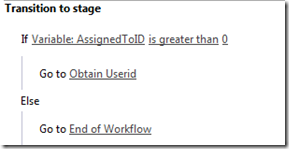

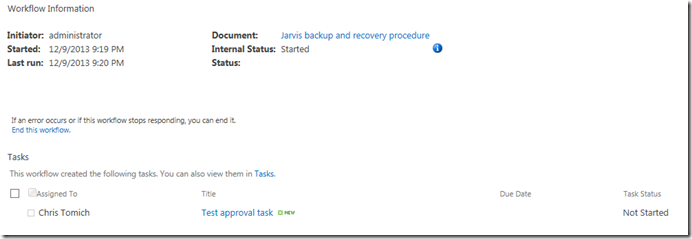

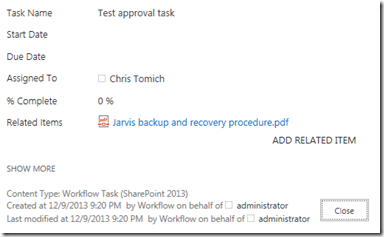

We will change it to the following stages:

- Stage 1: Obtain Term Name (extracts the name of the Organisation column from the current workflow item in the Documents library and if successful, moves to stage 2)

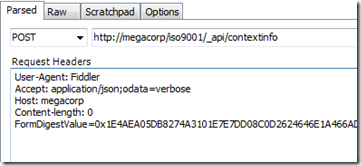

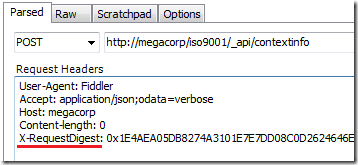

- Stage 2: Get the X-RequestDigest (We will grab the request digest we need to do our HTTP POST to query the Process Owners list. If successful move to stage 3)

- Stage 3: Get Process Owner (makes the REST web service call to grab the Process Owners for the organisation specified by the Term name from stage 1. Grab the value of AssignedToID and move to stage 4)

- Stage 4: Obtain UserID (Take the value of AssignedToID and make a REST web service call to return the windows logon name for the user specified by AssignedToID and assign a task to this user)
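As a rough preview of the web service calls behind stages 2 to 4, here is a Python sketch that lists them as plain data without sending anything. Treat every detail as an assumption on my part rather than the final workflow: the function name planned_calls is made up, the _api/contextinfo endpoint is the usual SharePoint 2013 way of getting a request digest but has not been covered in this series yet, and the stage 3 URL omits the @v1 parameter for brevity.

```python
def planned_calls(site_url, assigned_to_id):
    """List the HTTP calls behind stages 2 to 4 (illustrative only, nothing is sent)."""
    list_url = (f"{site_url}/_api/web/Lists/"
                "GetByTitle('Process%20Owners')/GetItems(query=@v1)")
    return [
        # Stage 2: ask SharePoint for a request digest (assumed endpoint).
        {"stage": 2, "method": "POST", "url": f"{site_url}/_api/contextinfo"},
        # Stage 3: POST the CAML-bearing query, presenting the digest as a header.
        {"stage": 3, "method": "POST", "url": list_url,
         "headers": {"X-RequestDigest": "<digest from stage 2>"}},
        # Stage 4: resolve the numeric ID to a user.
        {"stage": 4, "method": "GET",
         "url": f"{site_url}/_api/Web/GetUserById({assigned_to_id})"},
    ]


for call in planned_calls("http://megacorp/iso9001", 8):
    print(call["stage"], call["method"], call["url"])
```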

One final note: After this epic journey we have taken, you might think that doing this in SharePoint Designer workflow should be a walk in the park. Unfortunately this is not quite the case and as you will see, there are a couple more hurdles to cross.

Until then, thanks for reading…

Paul Culmsee