Some seriously powerful (and free) PDF functionality in PowerApps and Flow

Hiya

Pretty much every time I run a project kick-off meeting involving tools like SharePoint or PowerApps, I always ask the group the following question:

“If you had <insert goal here>, how would things be different to now?”

(Replace <insert goal here> with any common IT platitude like “improved collaboration”, “knowledge management”, “big data analytics” or “digital transformation”.)

Now inevitably, someone will say something like “I would have information at my fingertips”. While that may sound like a cliché, these days this goal is pretty easy to meet. So easy, in fact, that I can do it in around 10 minutes using a SharePoint Document Library, a quick PowerApp and a Flow of only 5 steps. I then get to be a smartass, hand them my phone and say “there you go – at your fingertips… That’ll be $250 thanks”.

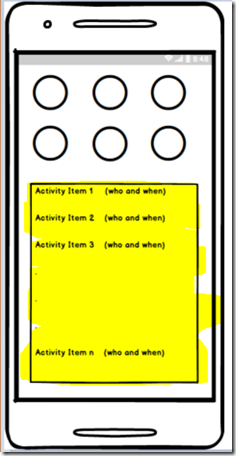

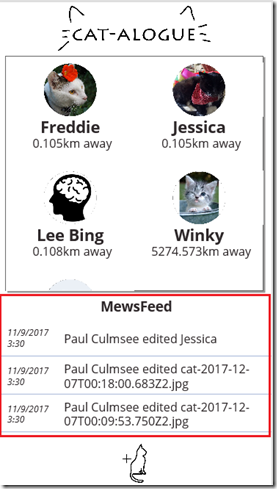

Now if you want to score $250, I have bad news for you. No client has ever actually paid me when I used that line. Nonetheless, if you have been looking for a demo that shows just how powerful these tools have become, this one is pretty good… If you check the image below, you can see a PowerApp that browses a SharePoint document library and, irrespective of whether a document is Word, Excel or PowerPoint, loads it into PowerApps as a PDF for preview. Better still, this solution is very much in the citizen developer realm…

A common use-case for this sort of solution is a field worker who needs to access schematic data like drawings or equipment documentation. I have also deployed something similar for quality management and safety apps. In all cases, users needed to retrieve documents quickly and easily…

How to build this app

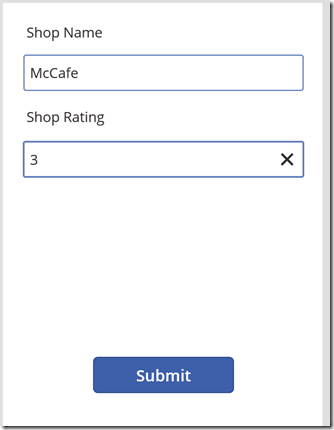

This app consists of a simple PowerApp, a SharePoint document library and a 5-step Flow.

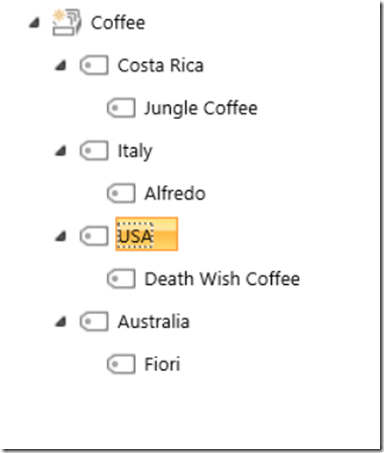

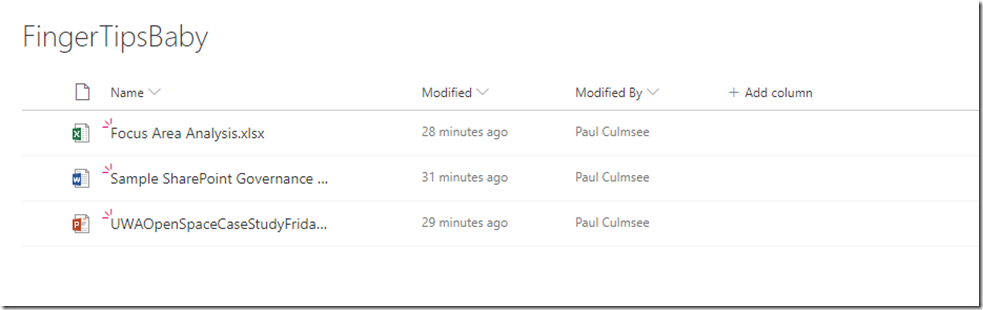

Step 1: Create a document library in SharePoint – in my case I called my library “FingertipsBaby”…

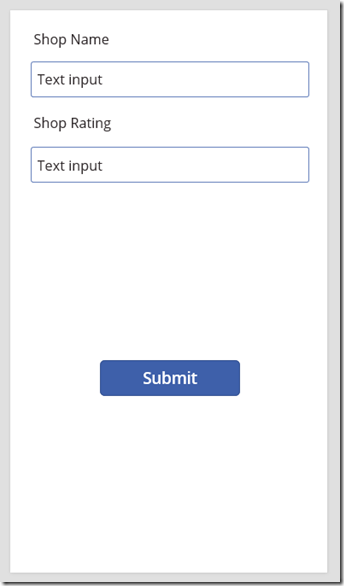

Step 2: Start PowerApps studio and make a blank tablet app.

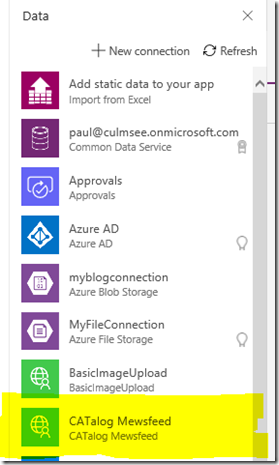

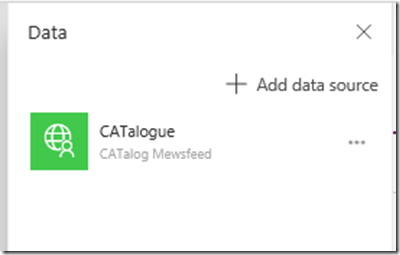

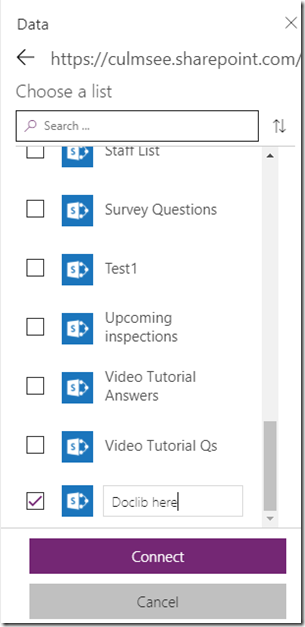

Step 3: Connect the document library as a data source using the SharePoint connector. Note that although document libraries are not listed by default, you can definitely connect to them. Just scroll to the end of the list and, in the last box, type in the name of your document library…

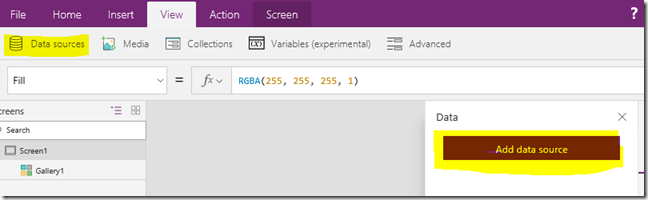

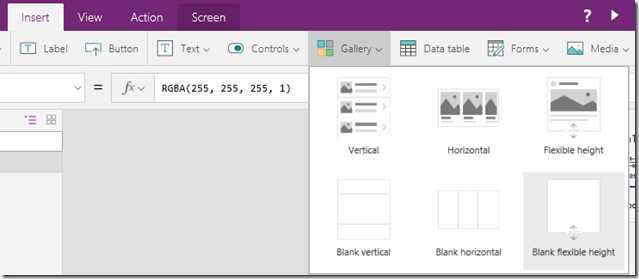

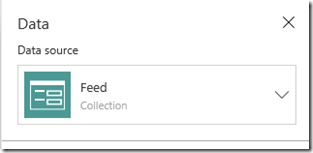

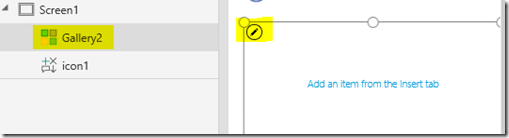

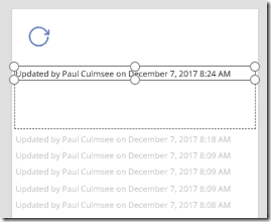

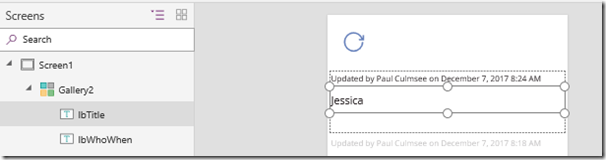

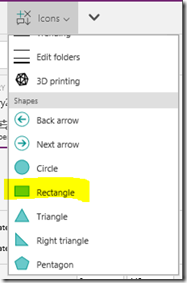

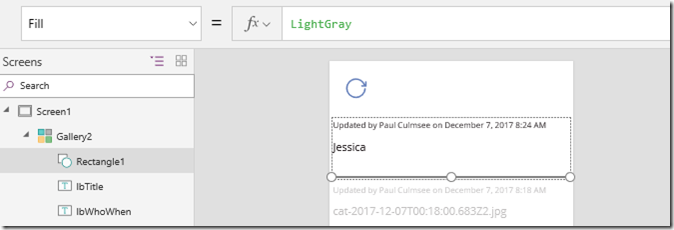

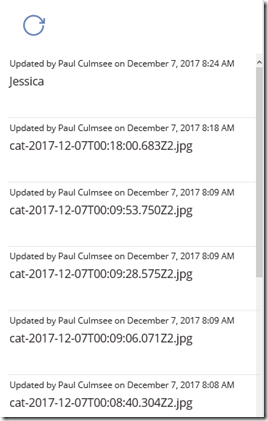

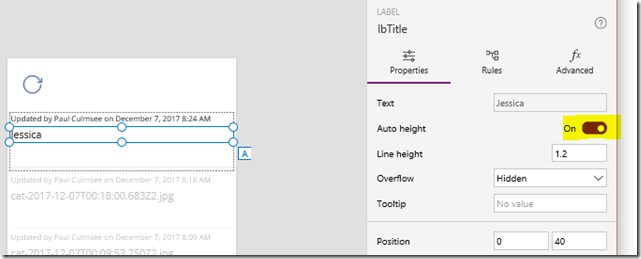

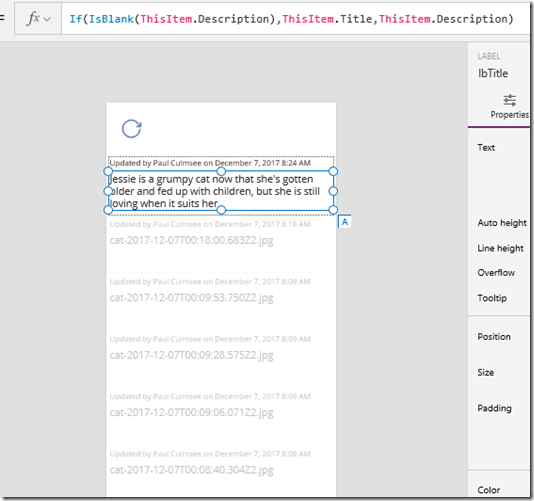

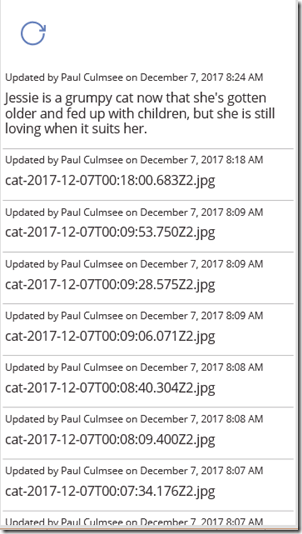

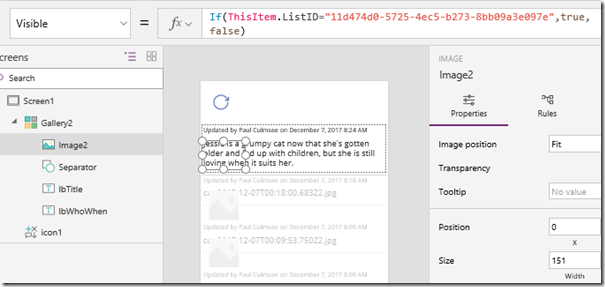

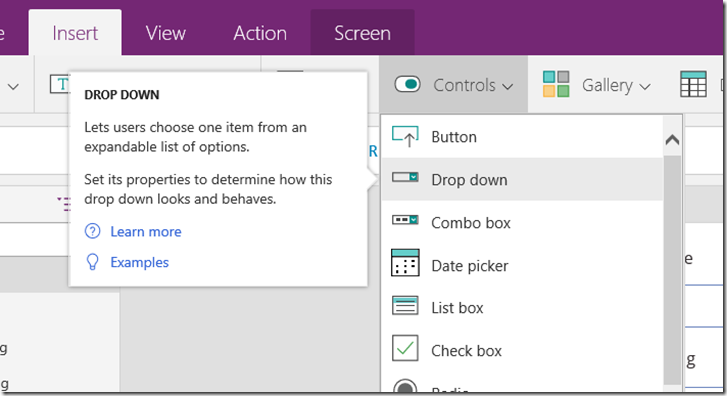

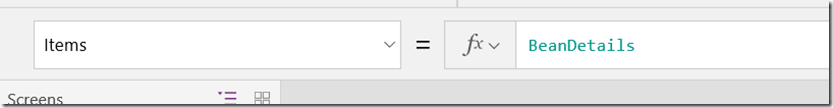

Step 4: On the left side of the screen, add a blank vertical gallery and link it to the data source.

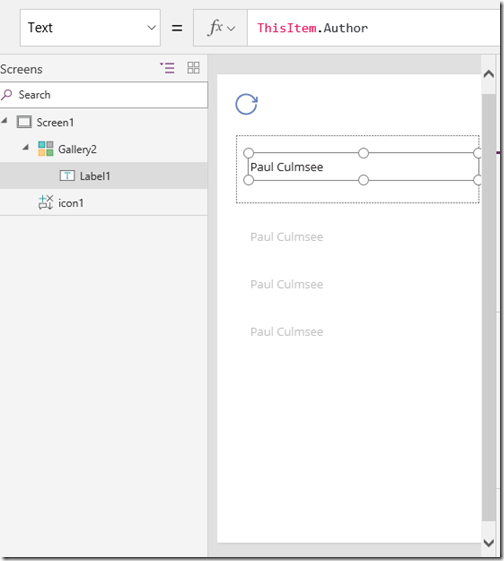

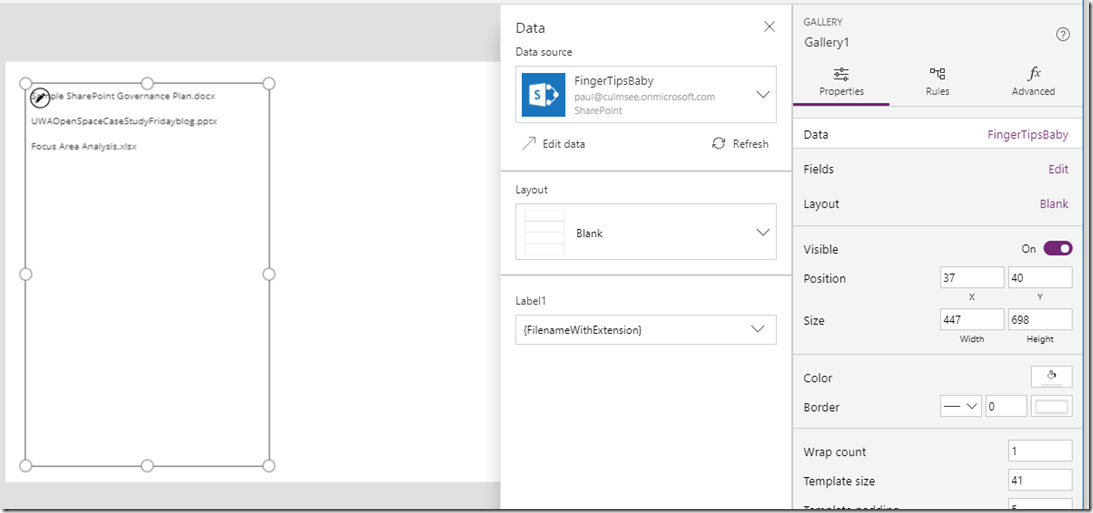

Step 5: Add a label to the gallery and set its Text property to `ThisItem.'{FilenameWithExtension}'`. If you check the image below, you can see the three documents from my library listed. Not pretty, I know, but this is a 10-minute demo, remember?

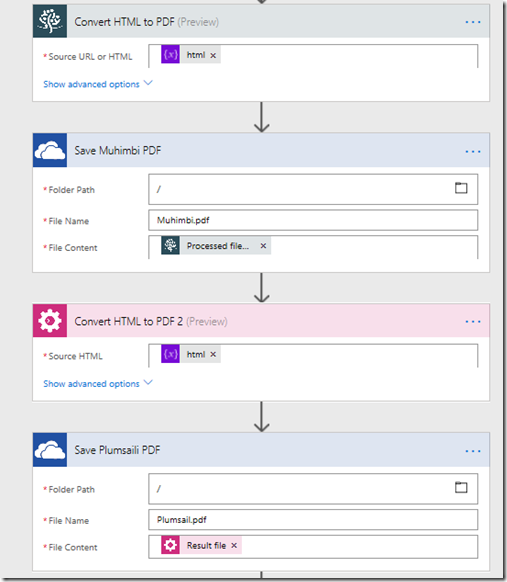

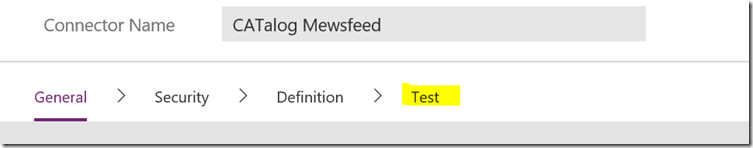

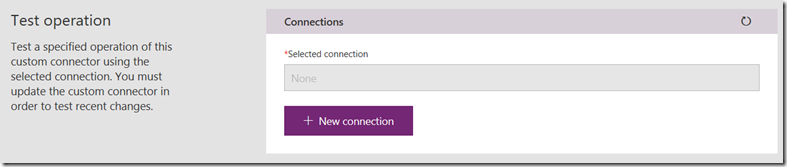

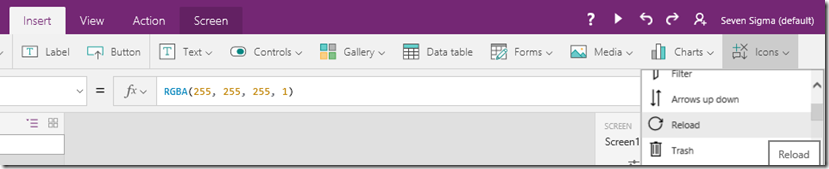

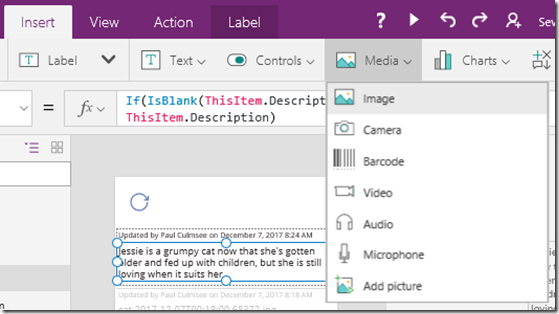

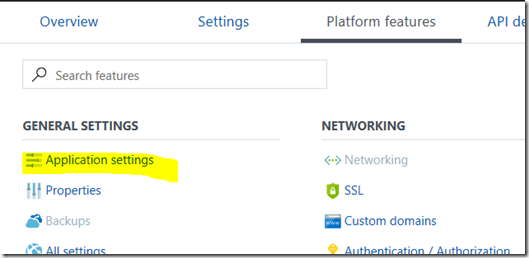

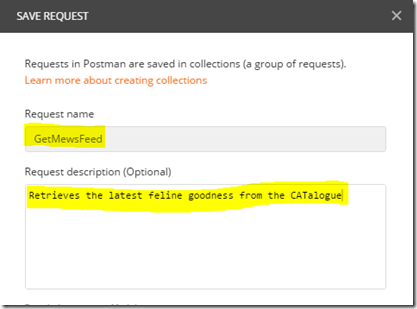

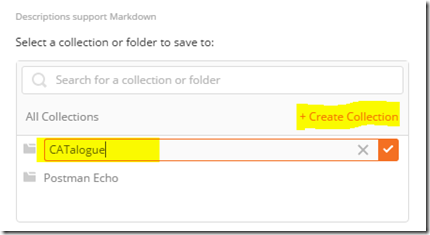

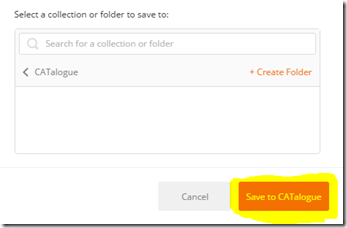

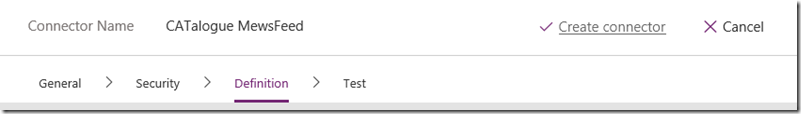

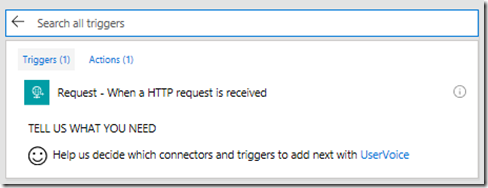

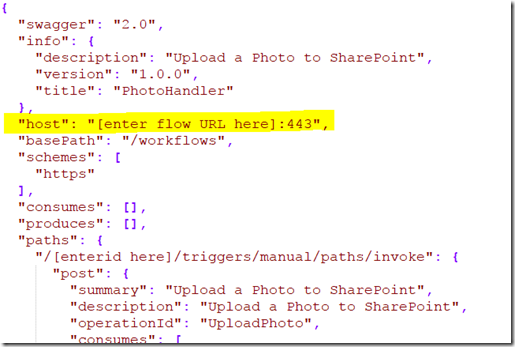

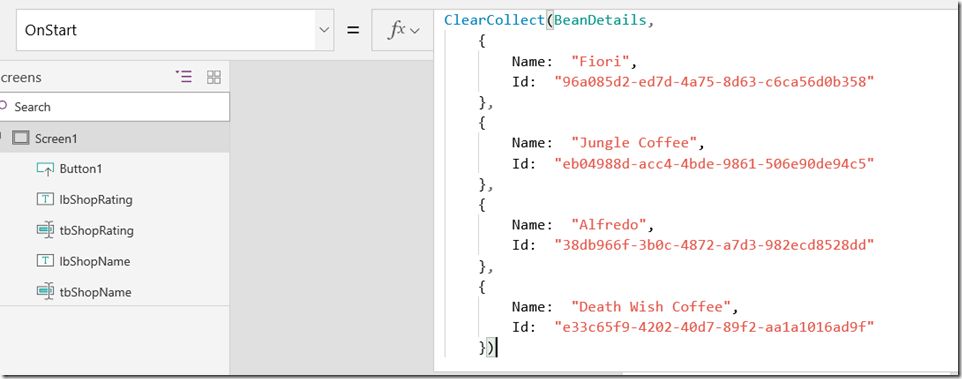

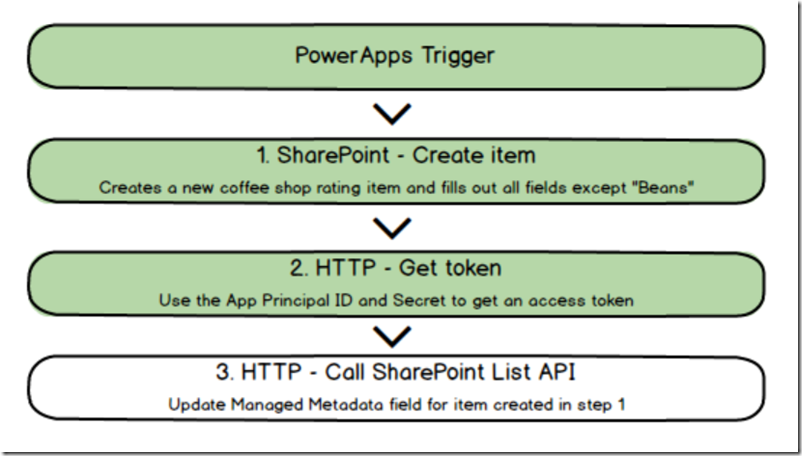

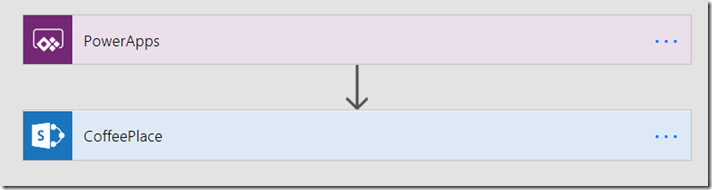

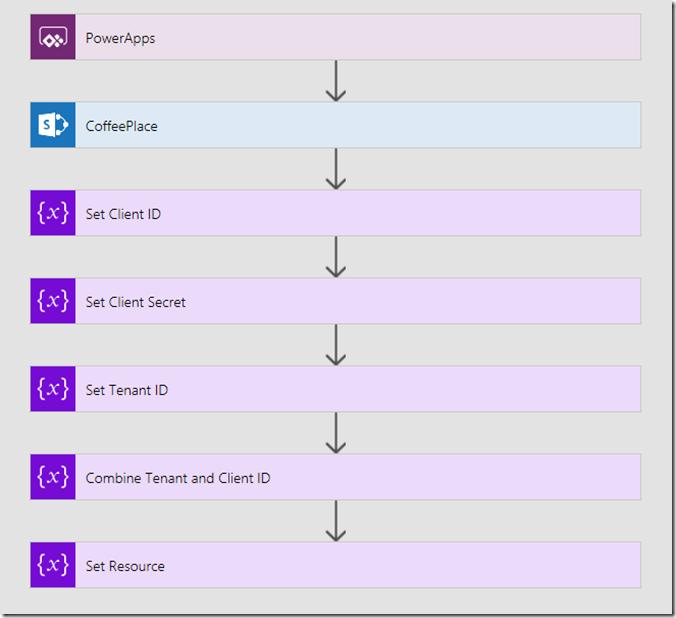

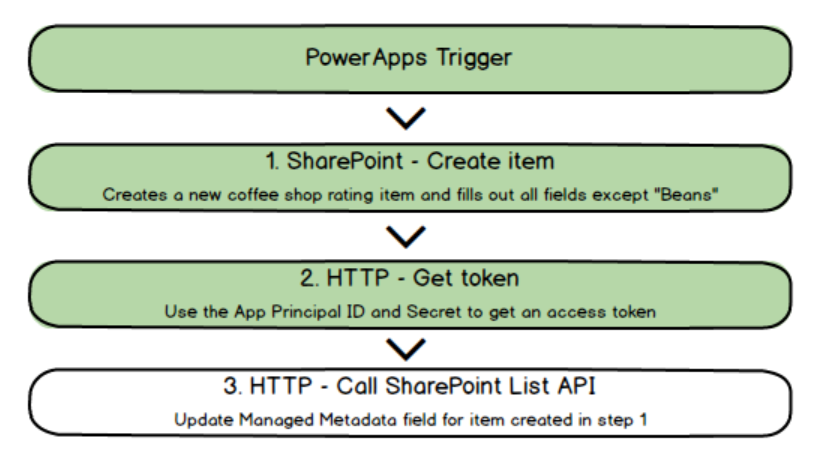

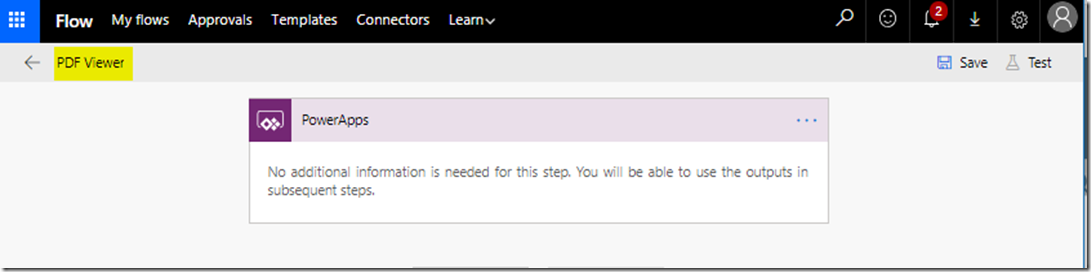

Step 6: Go to the Action menu and click the Flow icon. In the Data panel that opens on the right, click Create a new flow. A new browser tab will open and log you into Flow, where a new flow is created using a PowerApps trigger as shown above. Take the opportunity to give your flow a name, such as “PDF Viewer”.

Explanation interlude:

Now at this point I feel some explanation is needed. We are about to use a few recent enhancements to Flow and SharePoint. Kudos goes to Brian Edwards, who made me aware of a powerful new SharePoint API that, among other things, generates thumbnails and PDFs of documents. Brian already has a blog post that explains how to do thumbnails, so consider this article a companion piece that covers PDFs.

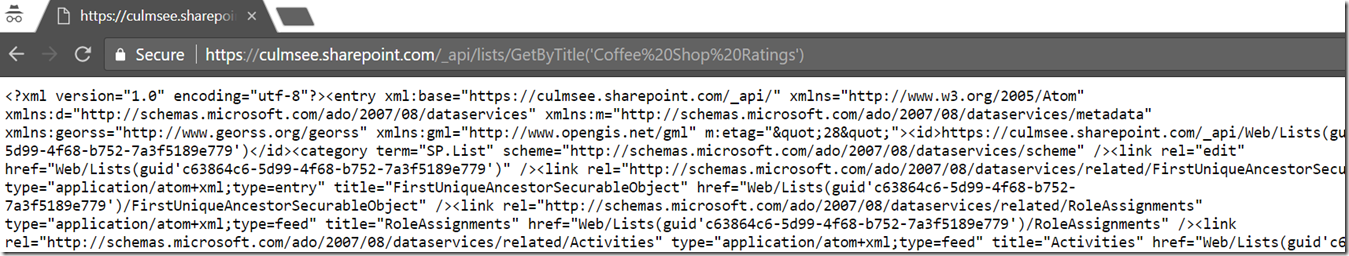

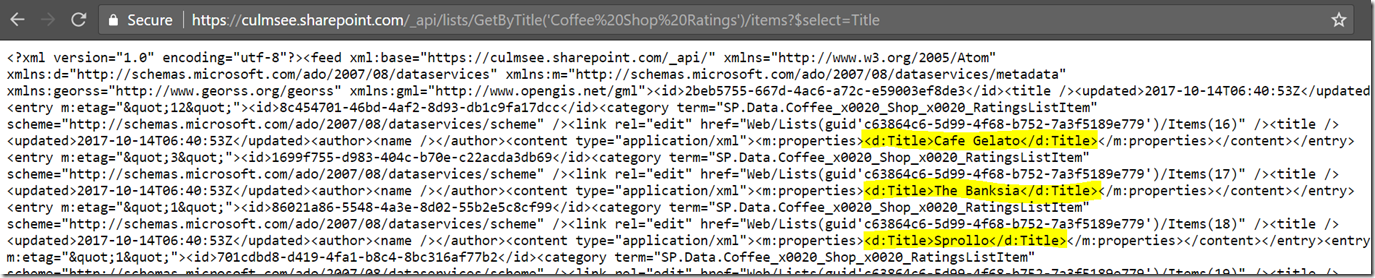

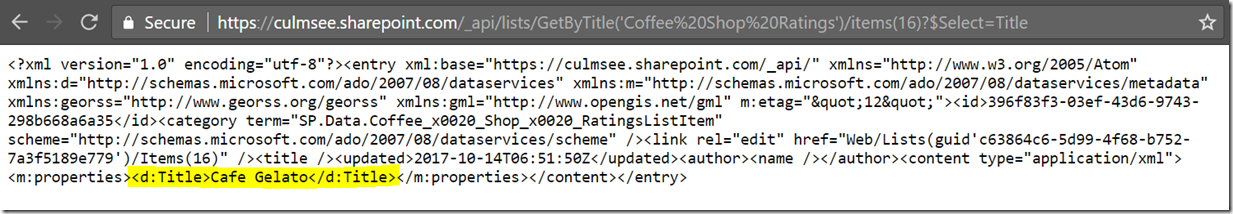

The key to the whole thing is an API called RenderListDataAsStream. This is a very powerful API with a truckload of goodness, but for now I will keep it simple. I will pass the SharePoint list item ID of a document to the API, and it will give me back everything I need to get a PDF of that document. The API call looks like this:

`_api/web/lists/GetbyTitle('FingerTipsBaby')/RenderListDataAsStream?FilterField1=ID&FilterValue1=<List Item ID>`
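If it helps to see how that URL is assembled, here is a minimal Python sketch (my own illustration, not something the flow needs). In the flow, the Site Address and this relative URI are combined for you. The helper name `render_list_data_url` and the contoso site URL are hypothetical. The sketch assumes the list title goes inside an OData string literal, where an embedded single quote is escaped by doubling it.

```python
from urllib.parse import quote, urlencode


def render_list_data_url(site_url: str, list_title: str, item_id: int) -> str:
    """Build a RenderListDataAsStream URL filtered to one list item.

    Hypothetical helper for illustration; site_url and list_title are your own values.
    """
    # Inside an OData string literal, a single quote is escaped by doubling it.
    odata_title = list_title.replace("'", "''")
    # Percent-encode spaces and the like, but keep the quote characters readable.
    encoded_title = quote(odata_title, safe="'")
    path = f"_api/web/lists/GetbyTitle('{encoded_title}')/RenderListDataAsStream"
    # FilterField1/FilterValue1 limit the result to the row whose ID matches item_id.
    query = urlencode({"FilterField1": "ID", "FilterValue1": item_id})
    return f"{site_url.rstrip('/')}/{path}?{query}"


# Usage (hypothetical site URL):
print(render_list_data_url("https://contoso.sharepoint.com/sites/demo", "FingerTipsBaby", 3))
```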

In the body of the request, I need to specify the data I’d like returned. The parameter is called RenderOptions and looks like this:

`{ "parameters": { "RenderOptions": 4103 } }`

What is the deal with the number? Well, the documentation includes a table of the different things you can ask the API to return, and you combine them by adding their values together.

| Label | Description | Value |
| --- | --- | --- |
| ContextInfo | Return list context information | 1 |
| ListData | Return list data | 2 |
| ListSchema | Return list schema | 4 |
| EnableMediaTAUrls | Enables URLs pointing to Media TA service, such as .thumbnailUrl, .videoManifestUrl, .pdfConversionUrls. | 4096 |

So we are asking this API to bring back not just the data associated with a list item, but also some additional useful stuff. The last entry is particularly interesting, as it mentions a mysterious beast known as the Media TA service (I assume the TA means “translation”). If we total the values in the table above (1 + 2 + 4 + 4096 = 4103), we end up with all the data we need to do PDF conversion.
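The RenderOptions arithmetic is easy to check. Because each value in the table is a distinct power of two, OR-ing them together gives the same result as adding them up. The Python sketch below combines the four flags and produces the request body used above. The enum name is my own, and only the four values from the table are included.

```python
import json
from enum import IntFlag


class RenderListDataOptions(IntFlag):
    """The four RenderOptions values from the table above (class name is hypothetical)."""
    CONTEXT_INFO = 1
    LIST_DATA = 2
    LIST_SCHEMA = 4
    ENABLE_MEDIA_TA_URLS = 4096


# Each value is a distinct power of two, so | gives the same result as adding them.
options = (
    RenderListDataOptions.CONTEXT_INFO
    | RenderListDataOptions.LIST_DATA
    | RenderListDataOptions.LIST_SCHEMA
    | RenderListDataOptions.ENABLE_MEDIA_TA_URLS
)

# Request body in the same shape as the one sent to RenderListDataAsStream.
body = json.dumps({"parameters": {"RenderOptions": int(options)}})
print(int(options))
print(body)
```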

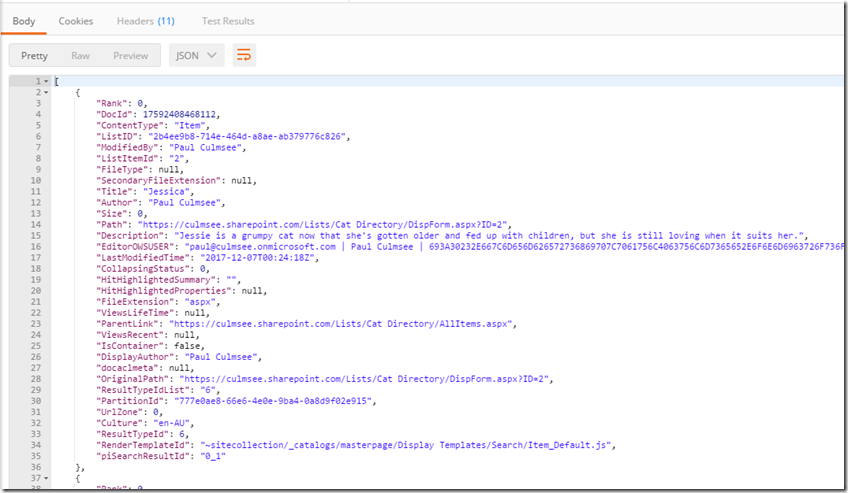

Now at this stage, I don’t want to do an exhaustive examination of the JSON data returned by this API call, but I will call out a couple more things before we get to flow…

First up, you will find this entry…

`".pdfConversionUrl": "{.mediaBaseUrl}/transform/pdf?provider=spo&inputFormat={.fileType}&cs={.callerStack}&docid={.spItemUrl}&{.driveAccessToken}"`

This parameter holds a URL template that will convert the list item to a PDF. All you need to do is access the resulting URL. How easy is that, eh? Everything in curly braces is a token, but the value of each token is also returned by the API call, so it can be grabbed from elsewhere in the output. For example, {.mediaBaseUrl} appears a few lines up, and in my case is:

`".mediaBaseUrl": "https://australiasoutheast1-mediap.svc.ms"`

So if we find each token in the .pdfConversionUrl and replace it with its value, we have effectively converted our document to PDF without needing to store a PDF. The document can stay in its native format!
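To make the token idea concrete, here is a rough Python sketch that fills in a .pdfConversionUrl-style template from a dictionary of token values. The helper name is hypothetical, and apart from the .mediaBaseUrl value quoted above, the values are placeholders. In real life each one would come from the RenderListDataAsStream response. Values are inserted as-is, just as the flow does by concatenation.

```python
import re

# Matches tokens such as {.mediaBaseUrl} and captures the name, including the dot.
TOKEN = re.compile(r"\{(\.[A-Za-z0-9_]+)\}")


def expand_tokens(template: str, values: dict[str, str]) -> str:
    """Replace each {.name} token in template with values['.name'] (hypothetical helper).

    Raises KeyError if the template uses a token that values does not supply,
    so a missing value is caught instead of silently producing a broken URL.
    """
    return TOKEN.sub(lambda match: values[match.group(1)], template)


template = (
    "{.mediaBaseUrl}/transform/pdf?provider=spo&inputFormat={.fileType}"
    "&cs={.callerStack}&docid={.spItemUrl}&{.driveAccessToken}"
)
# Placeholder values; only .mediaBaseUrl is taken from the example above.
values = {
    ".mediaBaseUrl": "https://australiasoutheast1-mediap.svc.ms",
    ".fileType": "docx",
    ".callerStack": "CALLER_STACK",
    ".spItemUrl": "SP_ITEM_URL",
    ".driveAccessToken": "DRIVE_ACCESS_TOKEN",
}
print(expand_tokens(template, values))
```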

Back to Work…

Okay so let’s deal with this flow.

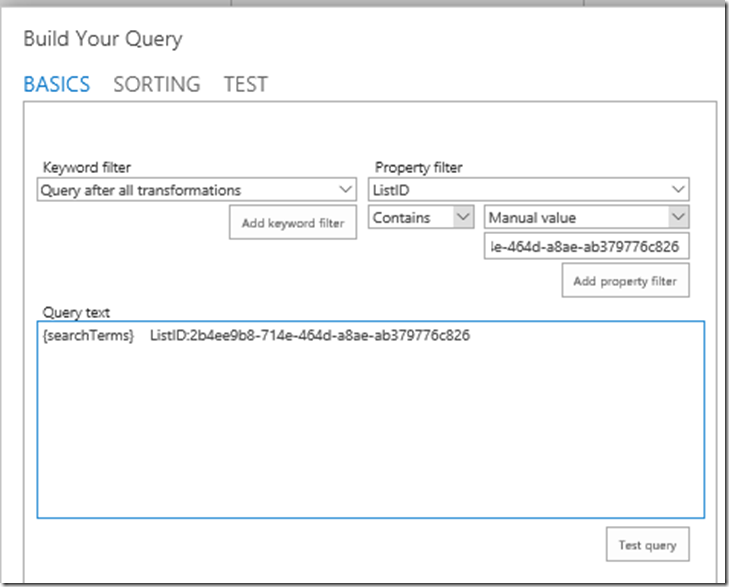

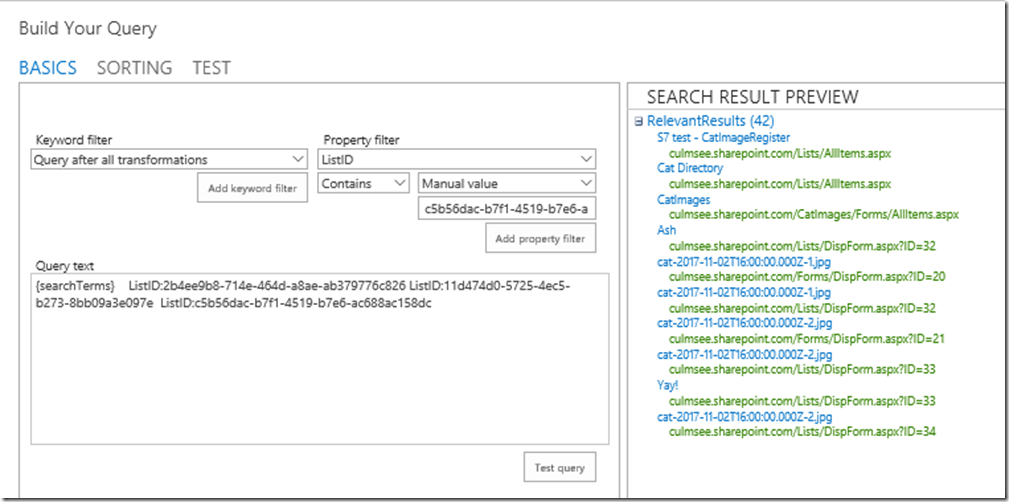

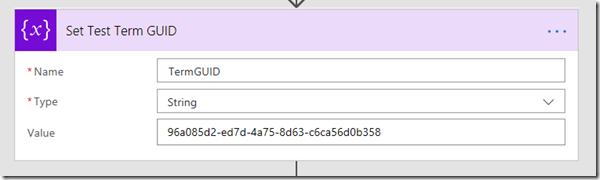

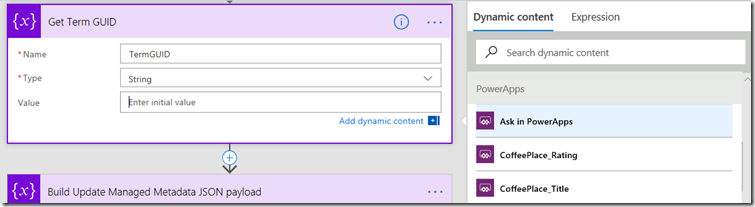

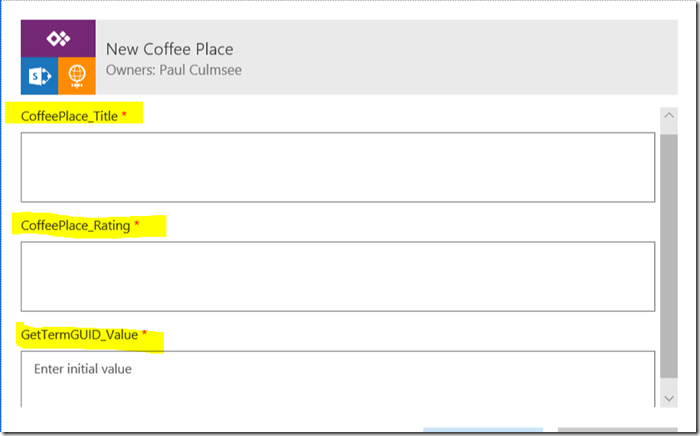

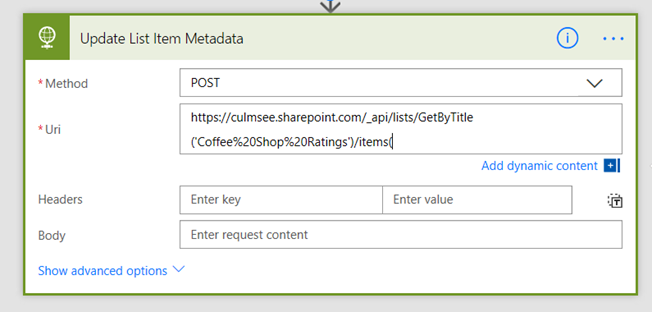

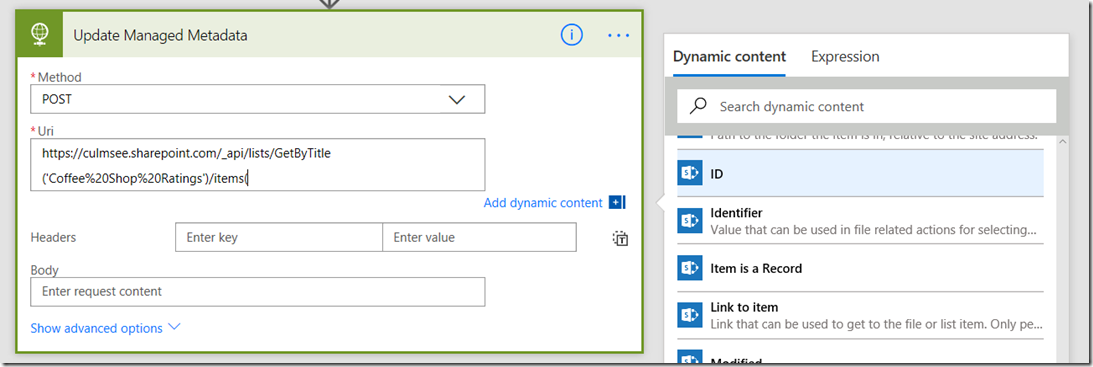

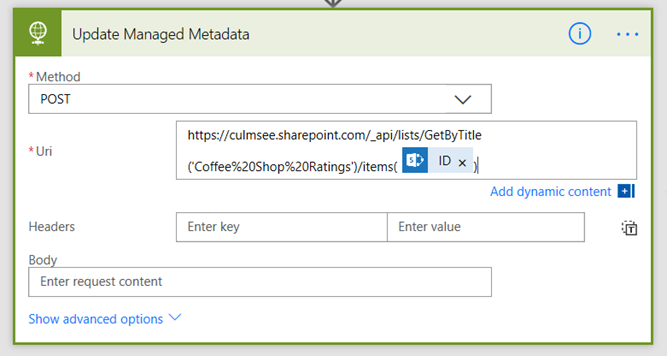

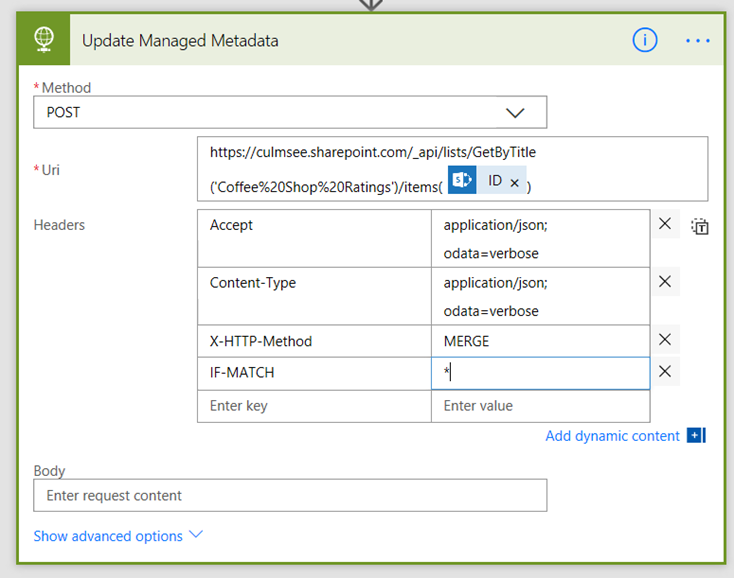

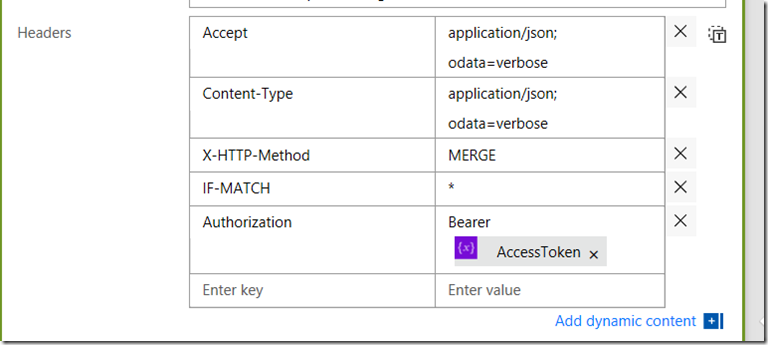

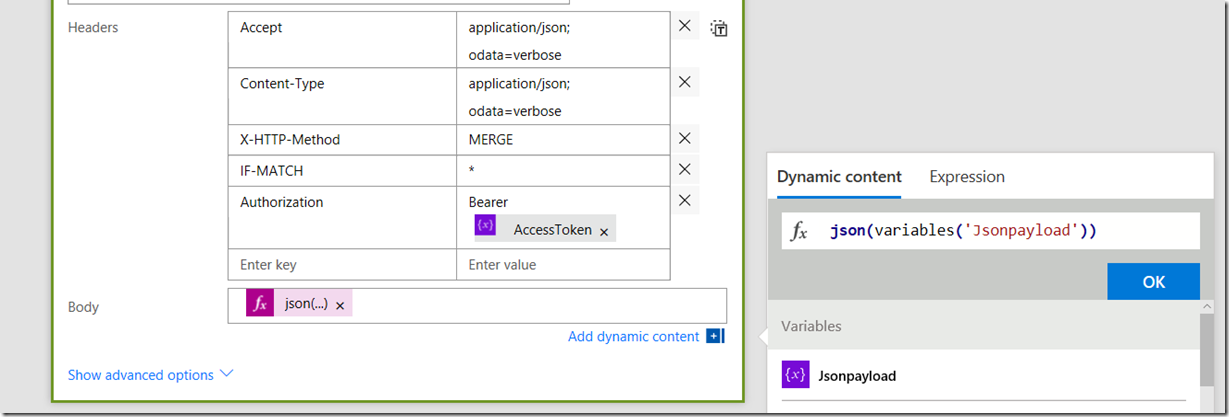

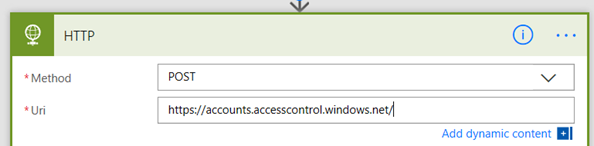

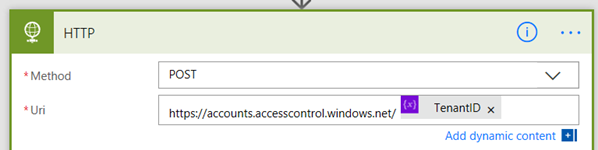

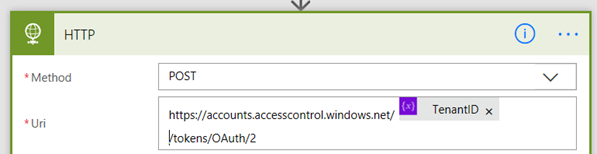

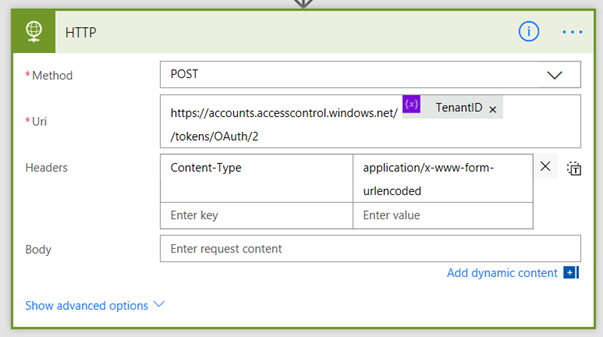

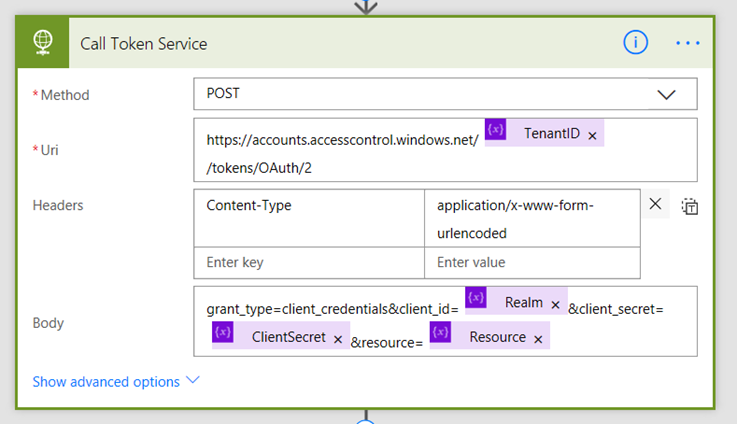

Step 7: Add a SharePoint action called Send an HTTP request to SharePoint to the flow. Set the Site Address to the site that contains your document library and set the Method to POST. Set the URI to `_api/web/lists/GetbyTitle('<docLib>')/RenderListDataAsStream?FilterField1=ID&FilterValue1=`, where `<docLib>` is the name you gave the document library (for example, mine is `_api/web/lists/GetbyTitle('FingerTipsBaby')/RenderListDataAsStream?FilterField1=ID&FilterValue1=`). At the end of the URI, click Dynamic content and choose Ask in PowerApps as shown below:

Step 8: In the Body section, paste the following configuration (watch the quotes when pasting from this article):

`{ "parameters": { "RenderOptions": 4103 } }`

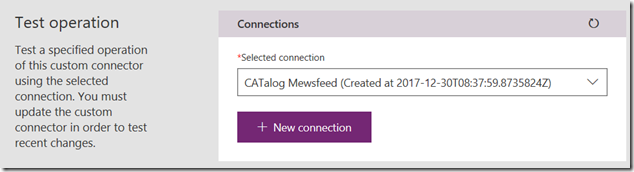

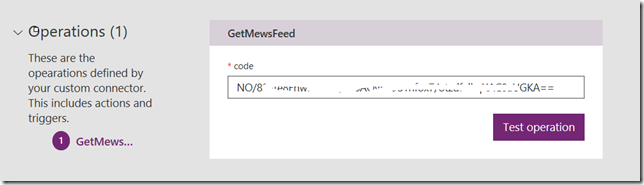

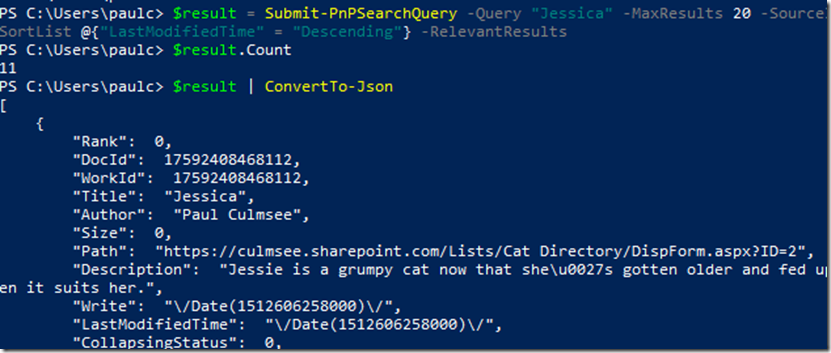

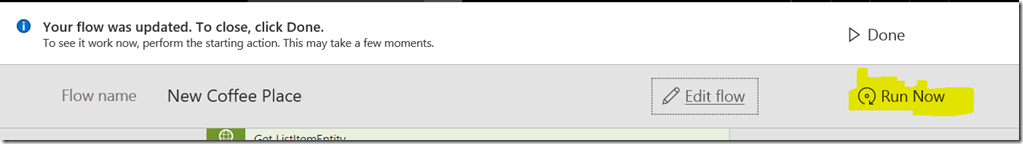

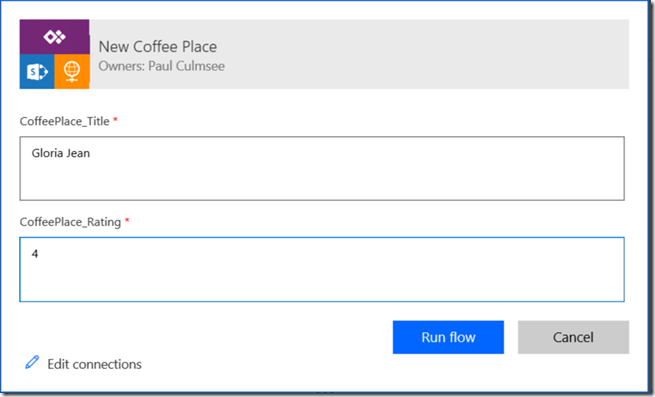

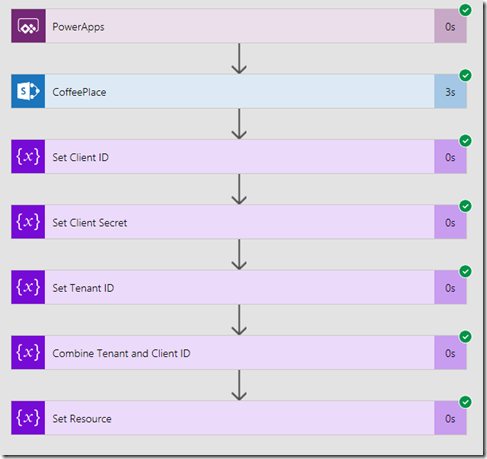

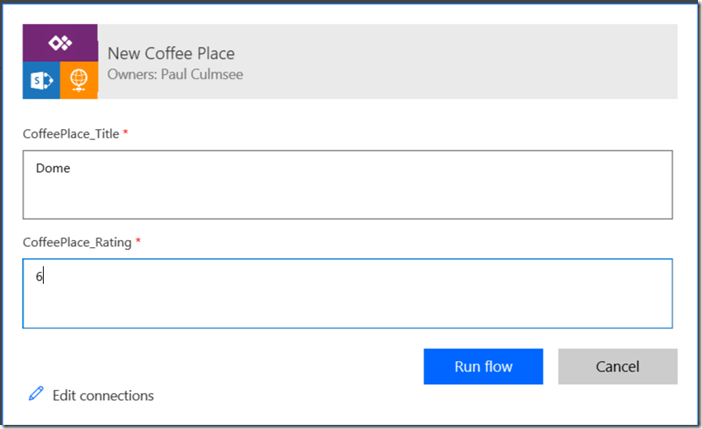

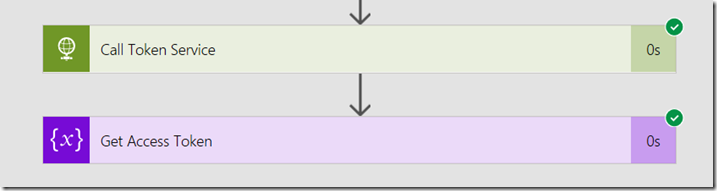

Step 9: Click Save, and then click the Test icon in the top right. Choose the option I’ll perform the trigger action and click the Save and Test button. Click the Continue button, and on the next screen type in the ID number of one of the documents in your library and click the Run Flow button. Your flow will start and you can click Done. Assuming it worked, you will see a green tick of happiness.

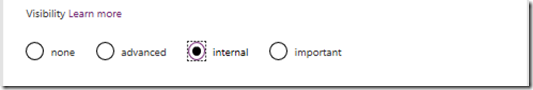

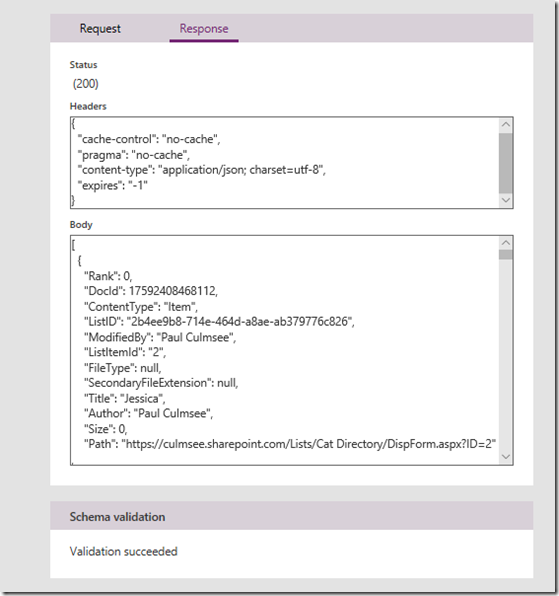

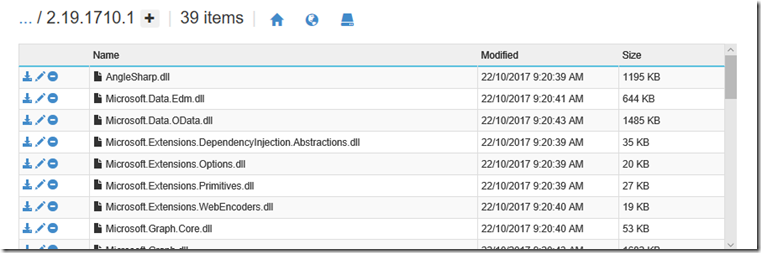

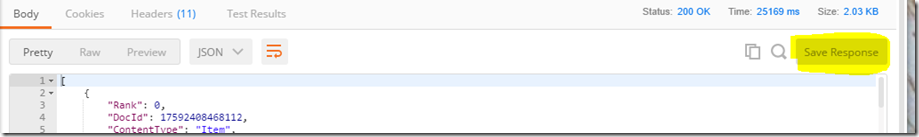

Step 10: Click the Send an HTTP request to SharePoint action to expand it. We need to grab the output from the API call for the next action, so find the OUTPUTS section and copy its entire contents to the clipboard…

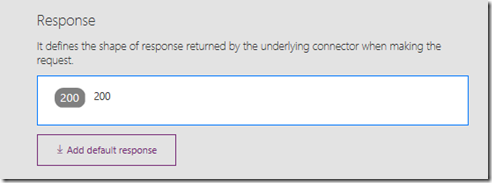

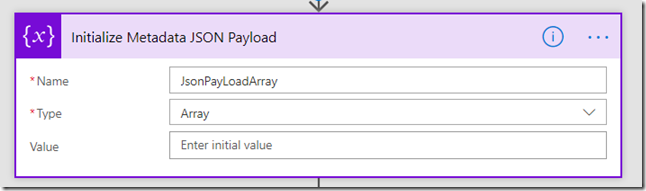

Step 11: Go back to edit mode and add a Data Operations action called Parse JSON to your flow. This action will allow us to make use of the output of the API call in the subsequent flow step.

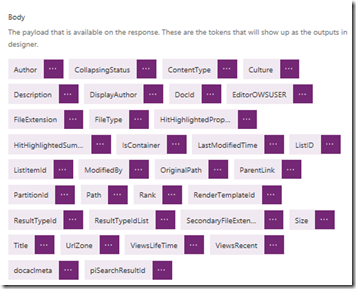

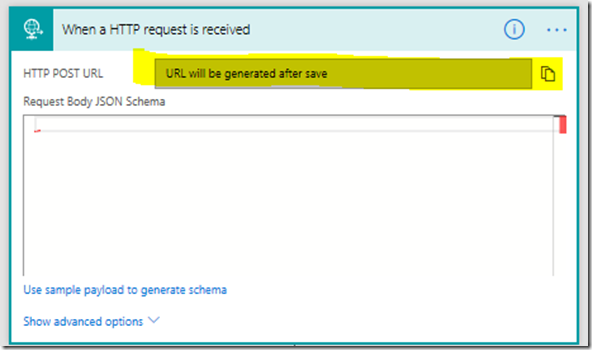

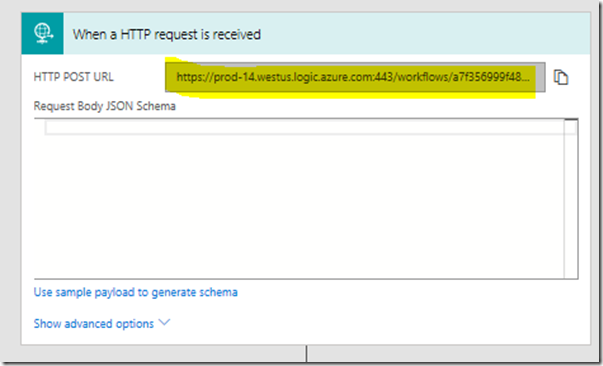

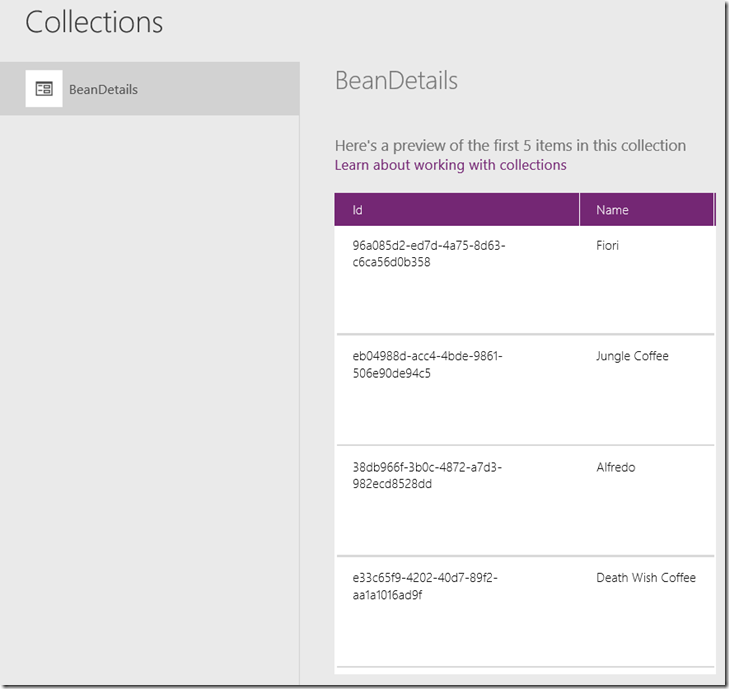

Click the Use sample payload to generate schema link, paste your clipboard contents into the window and click the Done button. In the Content field, open the Dynamic content pop-out and choose Body from the Send an HTTP request to SharePoint action.
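For the curious, the generated schema is what lets the later steps offer fields like .mediaBaseUrl as dynamic content. The Python sketch below is a simplified illustration of deriving a schema from a sample value. It is my own approximation, not Flow's actual generator, and the sample payload is made up and heavily trimmed.

```python
import json
from typing import Any


def infer_schema(sample: Any) -> dict:
    """Derive a simplified JSON Schema from one sample value.

    Illustration only: Flow's "Use sample payload to generate schema" may differ in
    details (for example, how it handles null values or the "required" list).
    """
    if isinstance(sample, dict):
        return {
            "type": "object",
            "properties": {key: infer_schema(value) for key, value in sample.items()},
        }
    if isinstance(sample, list):
        # Assume every element has the same shape as the first (no constraint if empty).
        return {"type": "array", "items": infer_schema(sample[0]) if sample else {}}
    if isinstance(sample, bool):  # must come before int, since bool subclasses int
        return {"type": "boolean"}
    if isinstance(sample, int):
        return {"type": "integer"}
    if isinstance(sample, float):
        return {"type": "number"}
    if isinstance(sample, str):
        return {"type": "string"}
    return {}  # null (None): leave the type unconstrained


# A made-up, heavily trimmed sample payload, for illustration only.
sample = json.loads('{"ListData": {"Row": [{"ID": "3", "File_x0020_Type": "docx"}]}}')
print(json.dumps(infer_schema(sample), indent=2))
```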

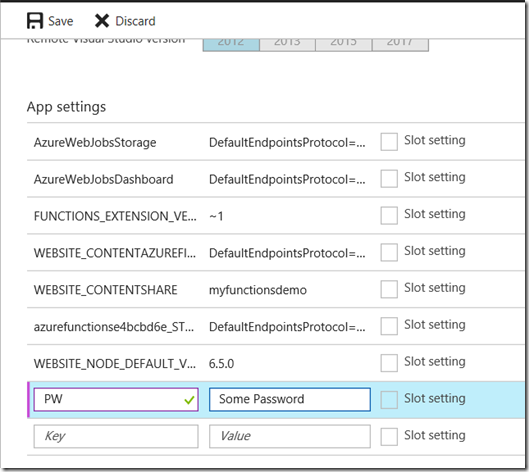

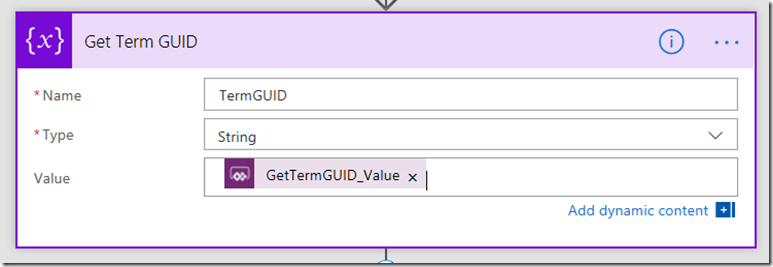

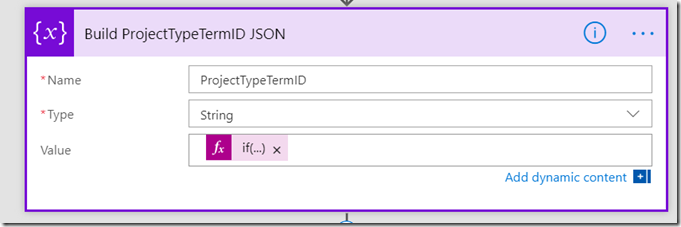

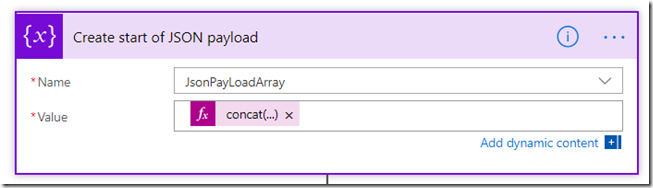

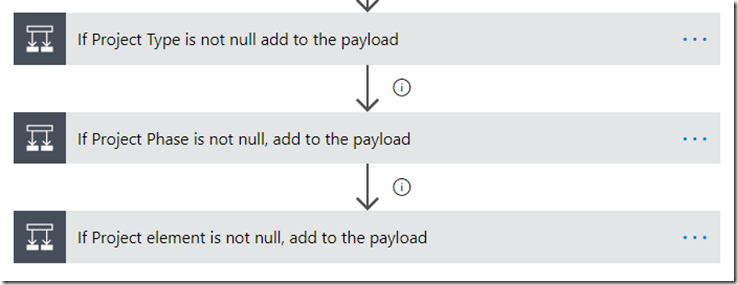

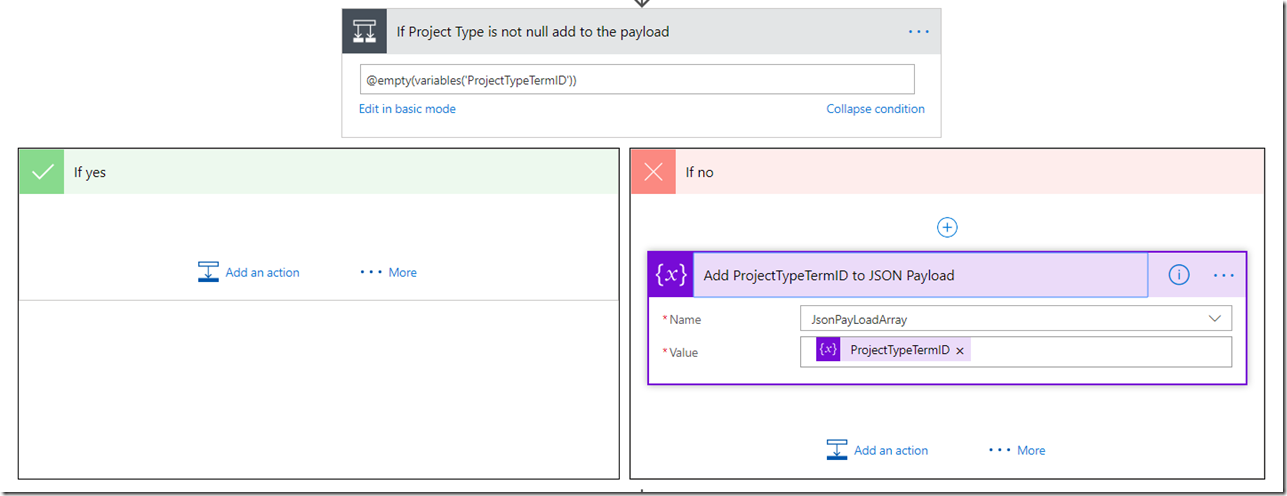

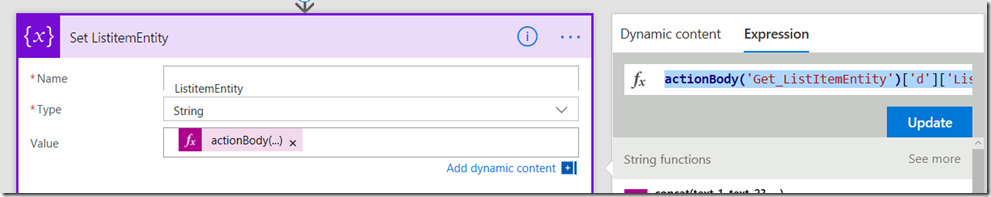

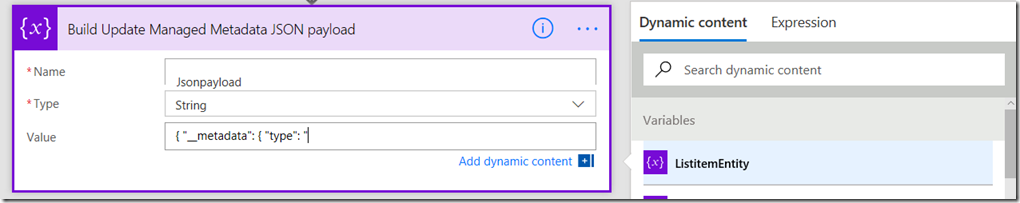

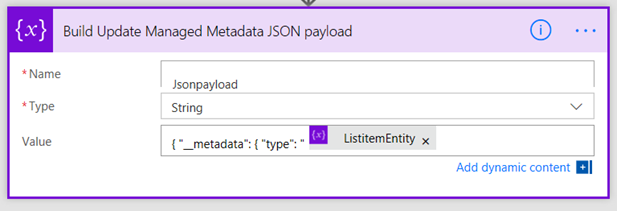

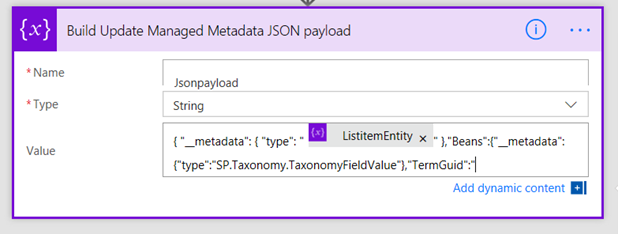

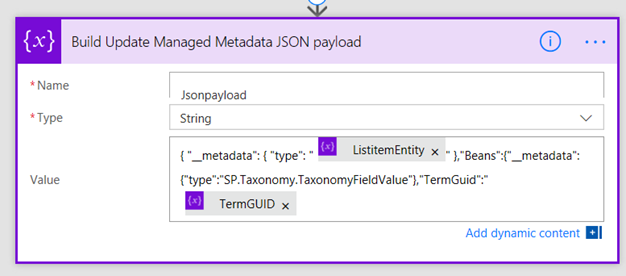

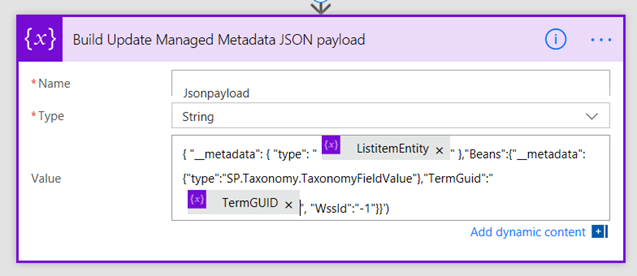

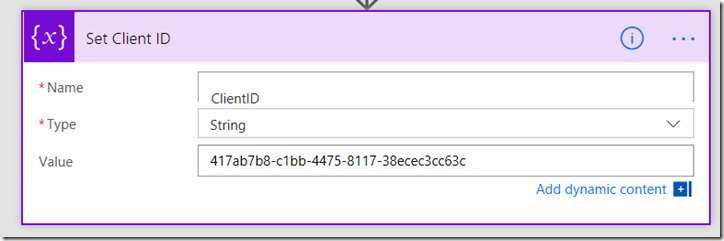

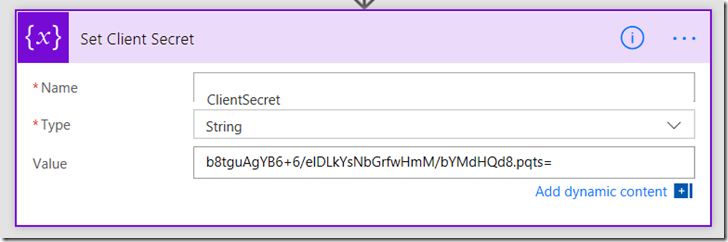

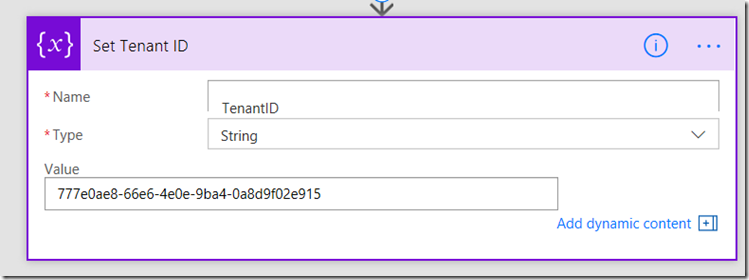

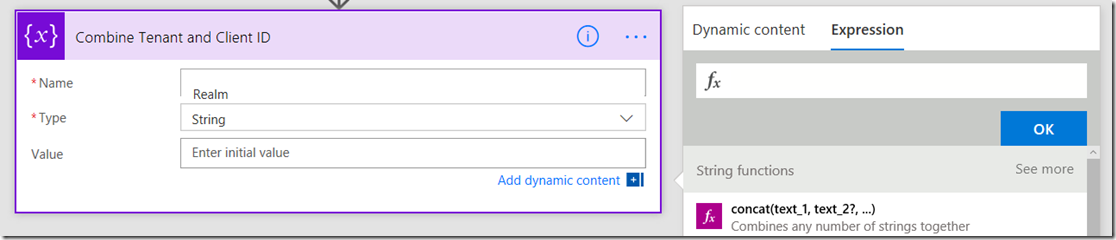

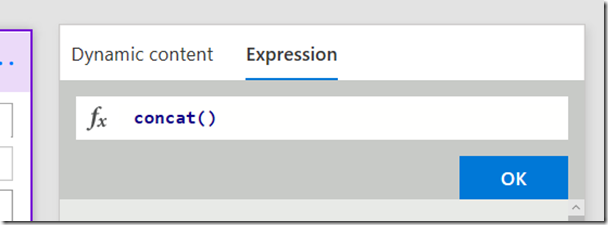

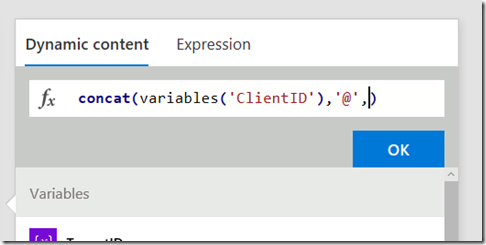

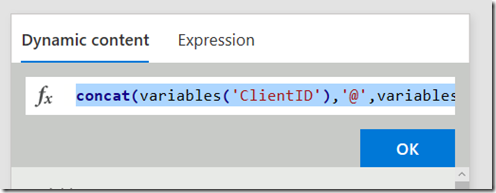

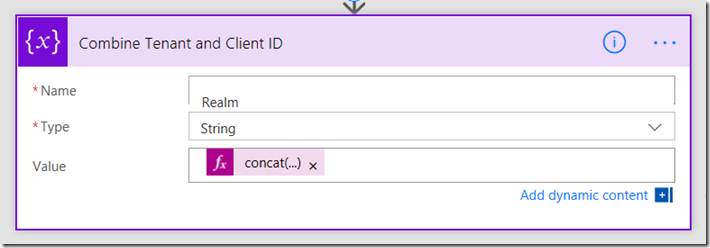

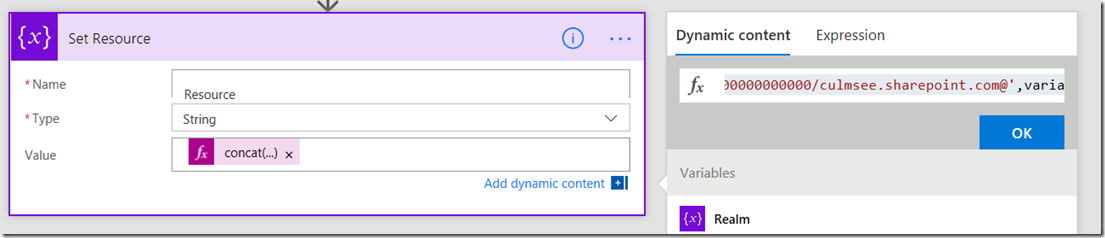

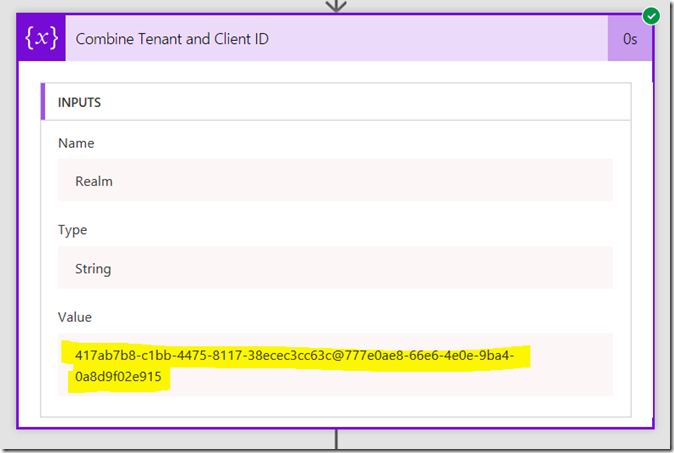

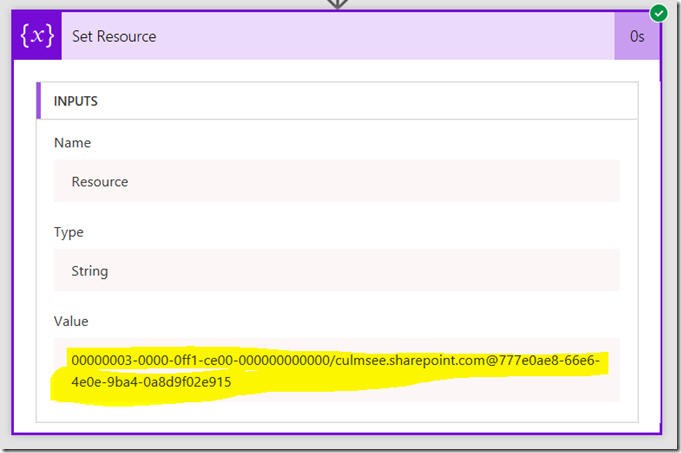

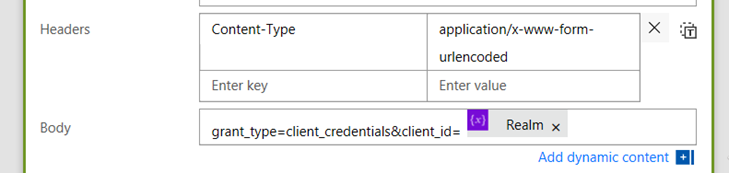

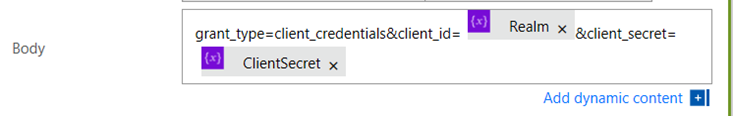

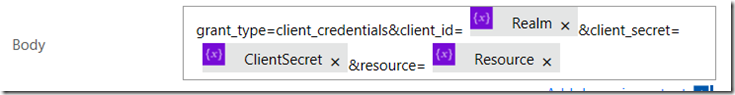

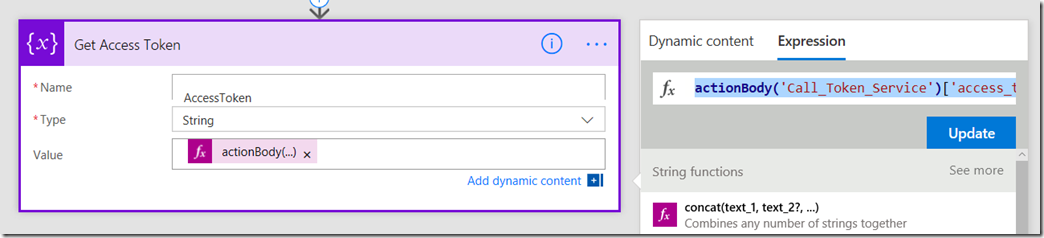

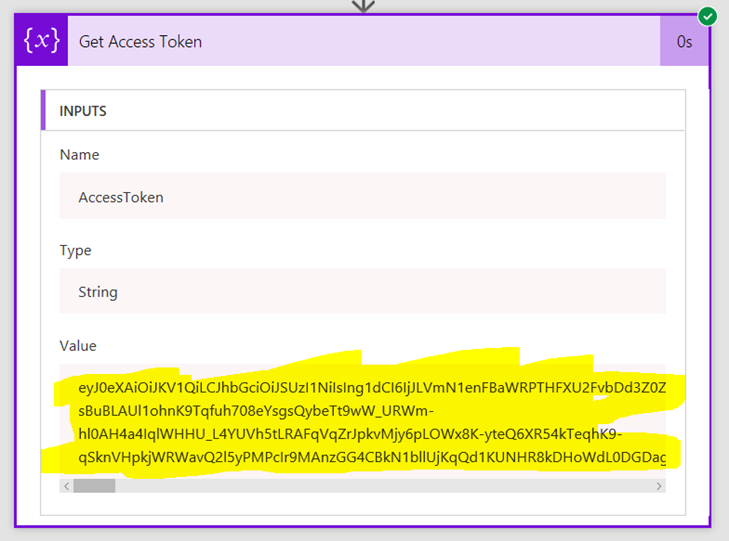

Step 12: Add an Initialize variable action to your flow. Name the variable PDFURL (or something similar) and set its type to String. Now we come to the most complex bit of the flow, where we have to substitute the tokens we examined in the interlude earlier. Be careful here… this is the most likely place to make an error…

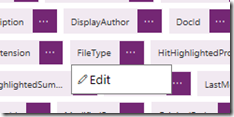

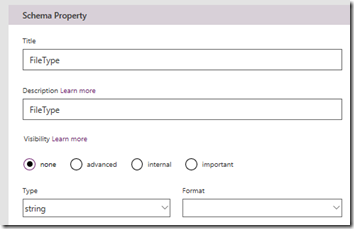

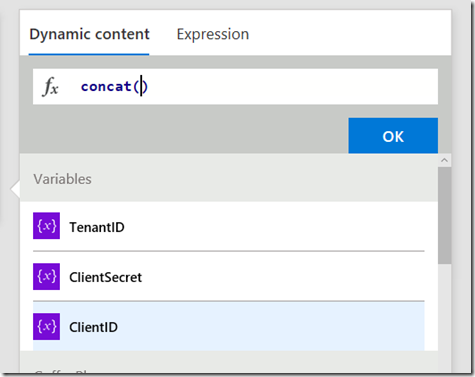

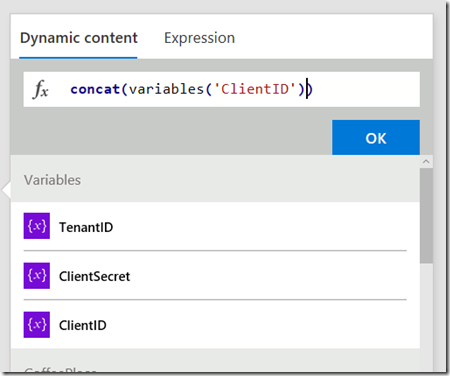

In the Value textbox, click the dynamic content flyout and find .mediaBaseUrl from the Parse JSON action…

Next, add the following text to the Value textbox, taking care not to delete what you just added in the previous step. (Edit: the image has a quote that is not meant to be there, so ignore it!)

/transform/pdf?provider=spo&inputFormat=

Now we come to a slightly tricky bit. The next piece of content we need is the file type of the document we are dealing with. This value sits inside an array (the Row array of the list data), so even though there is only a single row, we need an expression to get at it. Click the Expression tab and type in the following:

`first(body('Parse_JSON')?['ListData']?['Row'])?['File_x0020_Type']`

For the uninitiated, this is known as Workflow Definition Language and is well worth learning…

Next, add the following text to the Value textbox, taking care not to delete what you just added in the previous step.

&cs=

Also be super careful here because at the time of writing, the cursor in this textbox can randomly move and wipe out your edits…

Now in the Value textbox, click the dynamic content flyout and find .callerStack from the Parse JSON action…

Next, add the following text to the Value textbox, taking care not to delete what you just added in the previous step.

&docid=

Next we need the URL of the document we are dealing with, which also sits inside the Row array, so again it needs an expression. Click the Expression tab and type in the following:

`first(body('Parse_JSON')?['ListData']?['Row'])?['.spItemUrl']`
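If Workflow Definition Language is new to you, the two expressions above read roughly like the Python below. `first()` takes the first (here, the only) row, and `?[...]` looks up a property, giving null instead of an error when something is missing. The helpers are hypothetical stand-ins written for illustration, and the payload is a made-up, trimmed-down version of the Parse JSON output.

```python
from typing import Any, Optional


def first(collection: Optional[list]) -> Any:
    """Stand-in for WDL first(): the first element; in this sketch, None if missing or empty."""
    return collection[0] if collection else None


def safe_get(obj: Any, key: str) -> Any:
    """Stand-in for WDL ?['key']: None instead of an error when obj is null or lacks key."""
    return obj.get(key) if isinstance(obj, dict) else None


# Hypothetical, trimmed-down Parse JSON body containing the single matching row.
parse_json_body = {
    "ListData": {"Row": [{"File_x0020_Type": "docx", ".spItemUrl": "SP_ITEM_URL"}]}
}

# Python version of first(body('Parse_JSON')?['ListData']?['Row'])?[...]
row = first(safe_get(safe_get(parse_json_body, "ListData"), "Row"))
print(safe_get(row, "File_x0020_Type"))
print(safe_get(row, ".spItemUrl"))
```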

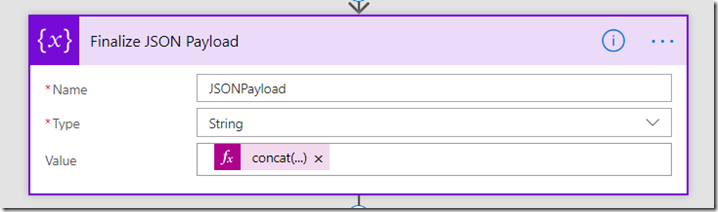

Okay we are almost done… Add an ampersand ( & ) to the Value textbox, and then click the dynamic content flyout and find .driveAccessToken from the Parse JSON action…
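Since this step is where mistakes are most likely, a quick sanity check of the finished PDFURL value can save some head-scratching (you can copy the value from the Initialize variable action in a flow run). The Python helper below is a hypothetical debugging aid of my own. It only looks for the fixed text typed in this step, empty values, stray quote characters and a missing access token, so a clean result does not guarantee that the URL works.

```python
def pdf_url_problems(url: str) -> list[str]:
    """List likely mistakes in an assembled PDFURL value (an empty list means none found).

    Hypothetical debugging aid: it checks only the fixed text typed in step 12 and a
    few common slip-ups, not whether the URL actually returns a PDF.
    """
    problems = []
    if not url.startswith("https://"):
        problems.append("does not start with the .mediaBaseUrl value (https://...)")
    for fixed in ("/transform/pdf?provider=spo&inputFormat=", "&cs=", "&docid="):
        if fixed not in url:
            problems.append(f"fixed text {fixed!r} is missing or mistyped")
    # An empty value usually means a dynamic content item or expression was wiped out.
    for key in ("inputFormat", "cs", "docid"):
        if f"{key}=&" in url:
            problems.append(f"{key} has no value")
    # Straight and curly quotes, e.g. the stray quote mentioned above.
    if any(ch in url for ch in "\"'\u201c\u201d\u2018\u2019"):
        problems.append("contains a stray quote character")
    if url.endswith("&"):
        problems.append("nothing after the final '&' (.driveAccessToken missing?)")
    return problems


# Usage with placeholder values (not a real, working URL):
print(pdf_url_problems(
    "https://australiasoutheast1-mediap.svc.ms/transform/pdf?provider=spo"
    "&inputFormat=docx&cs=CALLER_STACK&docid=SP_ITEM_URL&DRIVE_ACCESS_TOKEN"
))
```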

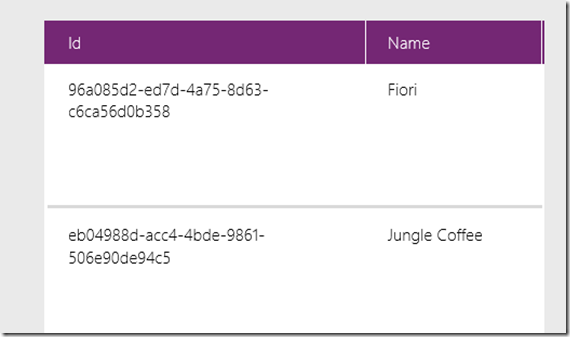

Step 13: Add the PowerApps – Respond to PowerApps action to the flow. Click the Add an output icon and choose Text from the list of output types. Name the output PDFURL and set the value to the variable you created in step 12 (PDFURL).

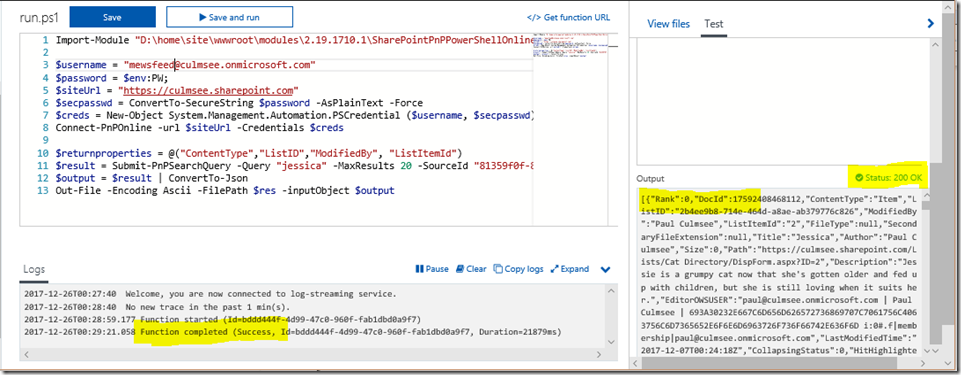

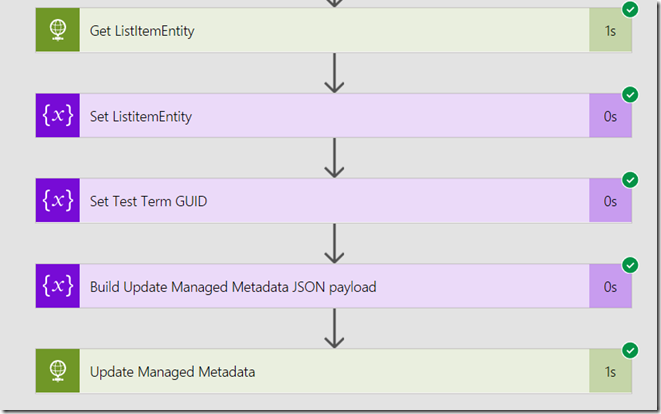

OK, we have completed the flow. Save it and give it a quick review. It should look something like this…

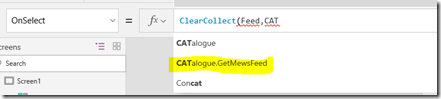

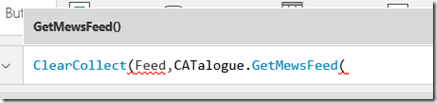

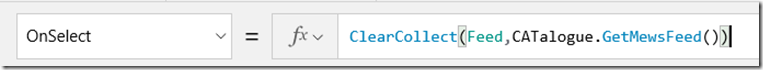

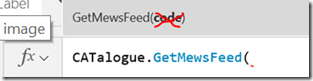

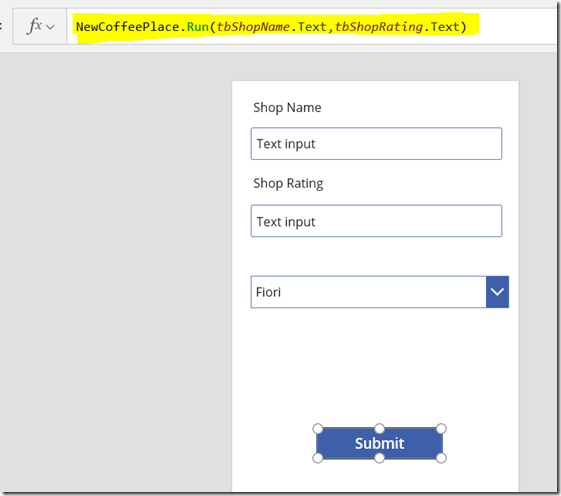

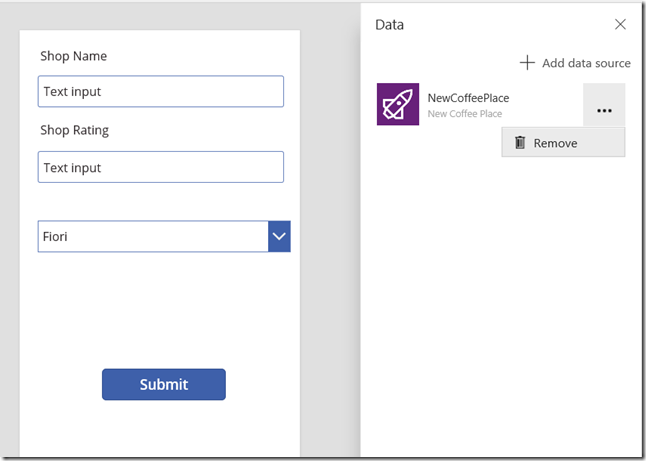

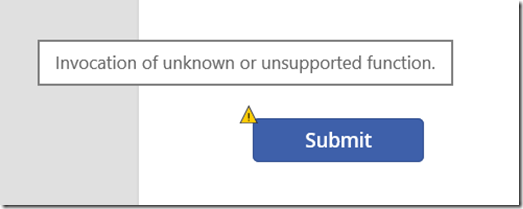

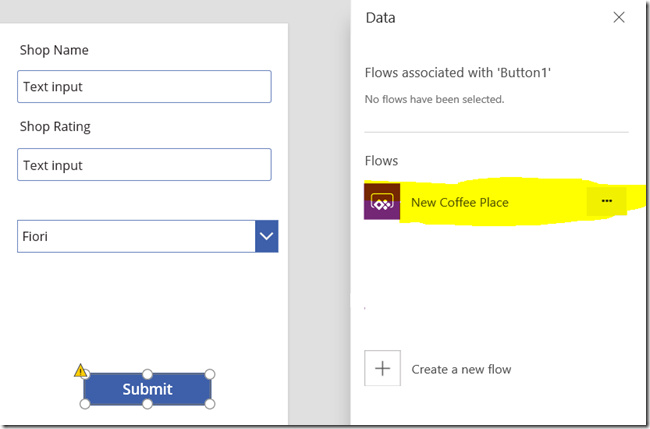

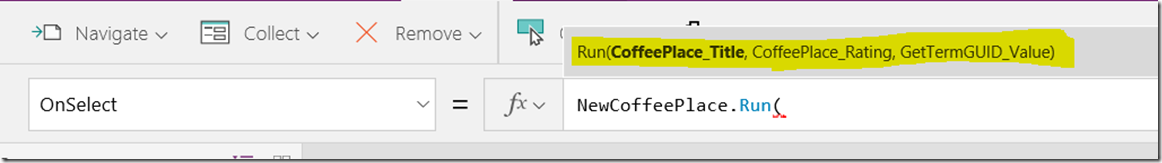

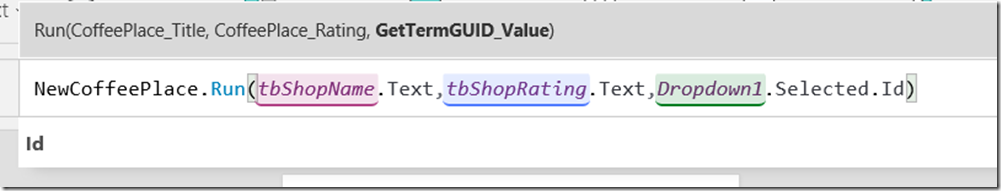

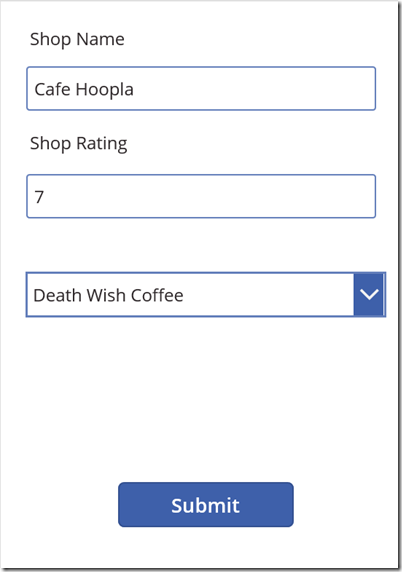

Step 14: Back in your PowerApp, select the label in the gallery (the one you added in step 5). From the Action menu, choose Flow and you should see your newly created flow listed. Click on it to add it to your PowerApp. Once it is added, PowerApps will partially fill in the label’s OnSelect property to run your flow.

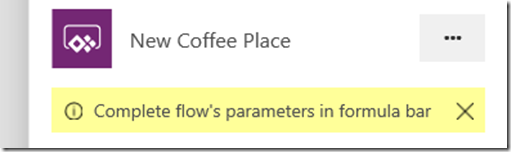

Step 15: Complete the flow invocation by adding a reference to the ID of the document from the gallery by using the following function:

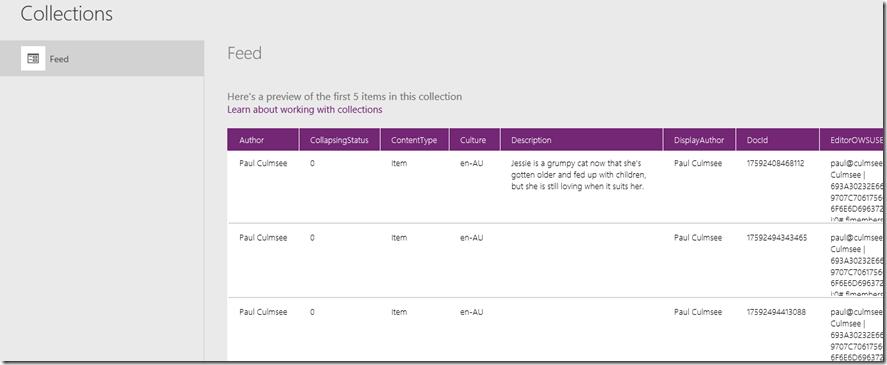

Set(PDF,PDFViewer.Run(ThisItem.ID))

This formula sets a variable called PDF to the output of our newly minted flow. When you click on a file in the gallery, the flow gets called. In fact, you can try this out before we move on. Press the play icon to test your app and click one of the files. Then go back into edit mode and click the Variables icon from the View menu. You should see a global variable called PDF with a data type of record. Clicking the variable brings up further details, and if you click the record icon you will see the output returned from the flow.

If you get this far, that’s great, because you are almost home…

Step 16: From the Insert menu, open Controls and add a PDF viewer. Place it to the right of the gallery and set its Document property to PDF.pdfurl. (If you look closely at the image above, you can see that PowerApps has decided that the output of the flow is a record with a column named pdfurl.)

Now click on some of the documents… if you did it correctly, you will be seeing PDFs! Woohoo!

Conclusion

Not bad, eh? A 5-step flow has enabled a very powerful use-case, and once again it showcases how well PowerApps and Flow work together. I should note that three recent innovations have enabled this scenario: the Send an HTTP request to SharePoint action, the RenderListDataAsStream API and the Respond to PowerApps action.

I need to also give credit to my daughter Ashlee, who actually figured a lot of this out earlier in the week. We will record a video on this fairly soon to accompany this post.

If you got value out of this post, please let me know. I’d love to hear of other use-cases or variants of this approach.

Thanks for reading…

Paul Culmsee
