[Note: It appears that SharePoint magazine has bitten the dust and with it went my old series on the “tribute to the humble leave form”. I am still getting requests to a) finish it and b) republish it. So I am reposting it here on cleverworkarounds. If you have not seen this before, bear in mind it was first published in 2008.]

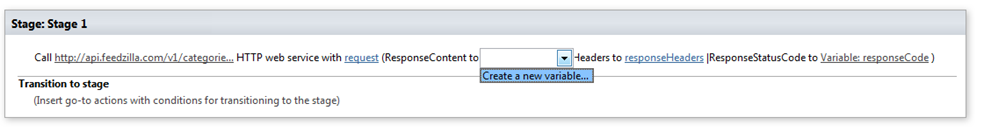

![image_thumb[2] image_thumb[2]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb2_thumb.png) Welcome again students to part 5 of the CleverWorkArounds Institute Body of Knowledge (CIBOK). As you know, the highly prestigious industry certification, the CleverWorkarounds Certified Leave Form Developer (CCLFD) requires candidates to demonstrate proficiency in all aspects of vacation/leave forms. Parts 1-4 of this series covered the introductory material, and now we move into the more advanced topic areas.


Wondering what the hell I am talking about? Perhaps you’d better read part 4.

This post represents a change of tack. The first four articles were written from the point of view of demonstrating InfoPath in a pre-sales capacity. Your “business development managers”, which is a politically correct term for “steak-knife salesmen”, have promised the clients that InfoPath is so good that it can make their coffee too. Essentially, anything to make the sale and earn their commission. Of course, they don’t have to stick around to actually implement it. They have moved on to their next victim, and you are left to satisfy the lofty expectations.

Now, we switch into “implementation engineer” mode, where a proof of concept trial has been agreed to. At this point we are dealing with three client stakeholders: Monty Burns (project sponsor), Waylon Smithers (process owner) and Homer Simpson (user reference group).

To remind you about parts one to four, we introduced the leave form requirements, and demonstrated how quick and easy it is to create a web based InfoPath form and publish it into SharePoint with no programming whatsoever. Was it a realistic demo? Of course not! But we have sold the client the dream and now we have to turn that dream into reality!

Here are the original requirements.

- Automatic identification of requestor

- Reduce data entry

- Validation of dates, specifically the automatic calculation of days absent (excluding weekends)

- Mr Burns is not the only approver, we will need the workflow to route the leave approval to the right supervisor

- We have a central leave calendar to update

So in this article, we will deal with the first requirement.

Automatic Identification of Homer

Now, some of the staff of the Springfield Nuclear Power Plant aren’t the most computer literate. Technical support staff report that one employee in particular, Homer Simpson, is particularly bad. In between sleeping on the job and chronic body odour, he has been known to hunt in vain for the “any” key on the keyboard. So he was chosen to be the user acceptance tester to ensure that the entire process is completely idiot-proof.

The way we will do this is to automatically fill in the full name of the current user, so Homer will not have to type his name in. It would just automagically appear as shown below.

![image_thumb[5] image_thumb[5]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb5_thumb.png)

So our process owner, Waylon Smithers, is excited and expectantly asks you, “This is easy to do, right?”

“Sure”, you answer confidently. “It’s built into InfoPath and I do it all the time”.

You then proceed into the properties of the above “Employee Name” textbox and locate the “Default Value” textbox. You then click the magical “fx” button that lets you pick from a bunch of built-in functions as shown below.

![image_thumb[7] image_thumb[7]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb7_thumb.png)

![image_thumb[9] image_thumb[9]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb9_thumb.png)

Now anybody who has used Excel will be used to the notion of using built-in functions to create dynamic values. InfoPath provides similar cleverness. There are functions for mathematical calculations, string manipulation, dates and times, as well as a bunch of others.

While I won’t be discussing every built-in function in this article, I encourage you to check out what is available.

Now our intrepid consultant already knows what formula they want to use. The “Insert Function” button is clicked and from the list of functions available (conveniently categorised by type), we choose the function userName as shown below.

![image_thumb[11] image_thumb[11]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb11_thumb.png)

![image_thumb[13] image_thumb[13]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb13_thumb.png)

![image_thumb[15] image_thumb[15]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb15_thumb.png)

So we have now set the default value for the “Employee Name” field to be whatever data the userName() function decides to give us. So let’s see what our favourite employee Homer Simpson now sees.

![image_thumb[17] image_thumb[17]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb17_thumb.png)

“Voila”, you think to yourself. “Homer, please test it.”

![image_thumb[18] image_thumb[18]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb18_thumb.png)

Homer: “Herrrrrr Simmmmmpson. Mmmmm. Who is hersimpson?”

Consultant: “Who?”

Homer: “Hersimpson. Is that… Lenny?”

Consultant: “…”

Homer: “Oh, wait! I know! I know! It’s that new sprinkled donut … mmmm donuts….”

Making it Homer Proof

So, it seems we have a problem at the user acceptance testing phase. Apart from drifting off into a donut induced daydream, it is clear that showing the username is not a good idea as Homer has not realised that his userid (hsimpson) represents himself. Clearly it would be more “Homer friendly” if his full name was displayed instead. At this point, we hit our first InfoPath challenge. How do we get the full name? We have no built in function called “fullName()”, so how can we do it?

Hmm, this is a little tougher than everything we have done so far. Perhaps we should get in some additional help from Professor Frink.

![image_thumb[20] image_thumb[20]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb20_thumb.png) “Well it’s quite simple, really. We need to create a secondary data source to the SharePoint web service UserProfileService.asmx and call the GetUserProfileByName method, passing it a blank string parameter called AccountName, and then interrogate name/value pairs in the response to grab the first and last name and concatenate them into a full name. Mm-hai.”


Does anybody want the non-nerd (English) version of the above sentence?

Given that lots of interesting “stuff” lies buried in various applications, databases, files and other “systems”, the designers of InfoPath were well aware that they had to make InfoPath capable of accessing data in these systems. In doing so, electronic forms can reduce duplication and data entry, and leverage already entered (and hopefully, sanitised and verified) data.

One of the many different methods of accessing “stuff” is via “web services”. The easiest way to think about web services is to think about Google. When you search Google for, say, “teenyboppers”, your browser makes a request to Google’s servers to return “stuff” that relates to your input. In this case, people who actually like Britney Spears’ songs.

Google is in effect providing a service to you and the protocol that drives the world wide web (HTTP) is the transport mechanism that both parties rely on.

So, a real-life web service is really just a more sophisticated version of this basic idea. One program can chat to another program by “talking” to its web services over HTTP. In this way, two systems can be on opposite sides of the world, yet be able to communicate with each other and provide each other with data and, well … services!

SharePoint is no exception, and happens to have a bunch of web services that allow programs to “talk” to it in many different ways. I am not going to list them all here, but it just so happens that one of those web services gives us just what we need – the full details of the currently logged-in user. So we are going to get InfoPath to “call” this particular web service and return to us the data we need to make Homer happy.

Okay in theory but…

Hopefully the basic idea of web services now makes sense. Actually making the leap to using them does take some learning. Since this is all over HTTP, a web service is simply a website URL.

Real programmers – don’t start getting all anal with me about definitions here. This explanation is for people who speak human!

The URL of the SharePoint web service that we want is this:

http://<address of sharepoint site>/_vti_bin/UserProfileService.asmx

eg

http://radioactivenet/_vti_bin/UserProfileService.asmx

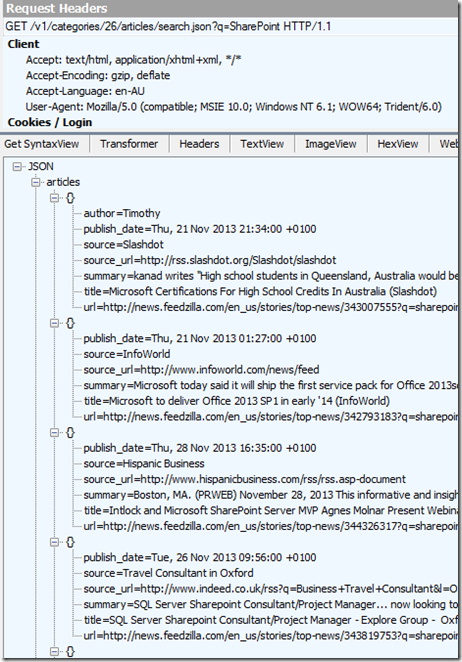

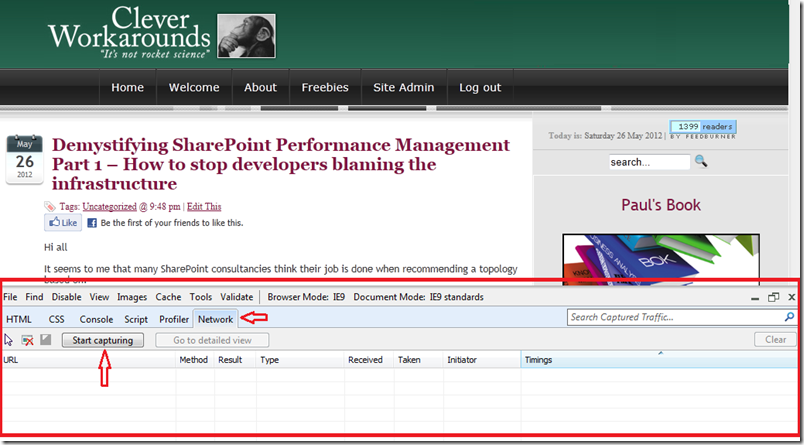

If you point your browser to this URL, you will get a response back, listing all of the various “methods” that this web service provides (click to enlarge). The screenshot below is not exhaustive, but the point is that one web service can actually provide many functions (generally known as methods) to perform all sorts of tasks.

![image_thumb[21] image_thumb[21]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb21_thumb.png)

Now, InfoPath likes to make things nice and wizard-based, but all of the above methods operate differently. Some take parameters (e.g. to create a new user account via a web service you would have to supply details like name, password and the like). Some do not need parameters but return multiple values, while some return a single value. Others still return different values based on the parameters that you send to the method.

How can InfoPath possibly know in advance what it needs to send to/receive from our selected method? Fortunately the geeks thought of this and invented an extremely boring language called Web Services Description Language (or WSDL for short). All you need to know about WSDL is that, apart from being a fantastic cure for insomnia if you ever read it, it provides a way for applications like InfoPath to find out what they need to do to interact with a particular method.

So, using the above web service again, we will actually ask the web service to tell InfoPath all about itself using WSDL by slightly changing the URL as illustrated below:

http://radioactivenet/_vti_bin/UserProfileService.asmx?WSDL

Now, if you try that in a browser you see all sorts of XML crap:

![image_thumb[22] image_thumb[22]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb22_thumb.png)

But don’t worry, you will never have to actually look at it again – InfoPath digs it – and that’s all you need to know.
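For the curious, here is a rough Python sketch of the kind of thing a tool like InfoPath does with WSDL: read the description, list the methods, and work out which parameters each one expects. The `SAMPLE_WSDL` text is a hand-trimmed, hypothetical fragment written for this illustration (the real document is far longer), and `describe_methods` is a made-up helper name. It also assumes the common convention where the request element has the same name as the method.

```python
import xml.etree.ElementTree as ET

WSDL_NS = "http://schemas.xmlsoap.org/wsdl/"
XSD_NS = "http://www.w3.org/2001/XMLSchema"

# Hypothetical, heavily trimmed WSDL fragment for illustration only.
SAMPLE_WSDL = """
<wsdl:definitions xmlns:wsdl="http://schemas.xmlsoap.org/wsdl/"
                  xmlns:s="http://www.w3.org/2001/XMLSchema">
  <wsdl:types>
    <s:schema>
      <s:element name="GetUserProfileByName">
        <s:complexType>
          <s:sequence>
            <s:element name="AccountName" type="s:string" minOccurs="0"/>
          </s:sequence>
        </s:complexType>
      </s:element>
    </s:schema>
  </wsdl:types>
  <wsdl:portType name="UserProfileServiceSoap">
    <wsdl:operation name="GetUserProfileByName"/>
  </wsdl:portType>
</wsdl:definitions>
""".strip()


def describe_methods(wsdl_text):
    """Return {method name: [parameter names]} for a WSDL document."""
    root = ET.fromstring(wsdl_text)

    # Collect the parameters declared under each top-level schema element.
    params = {}
    path = f"./{{{WSDL_NS}}}types/{{{XSD_NS}}}schema/{{{XSD_NS}}}element"
    for element in root.findall(path):
        params[element.get("name")] = [
            child.get("name")
            for child in element.iter(f"{{{XSD_NS}}}element")
            if child is not element
        ]

    # Pair every operation with the request element of the same name.
    methods = {}
    for operation in root.iter(f"{{{WSDL_NS}}}operation"):
        name = operation.get("name")
        methods[name] = params.get(name, [])
    return methods


print(describe_methods(SAMPLE_WSDL))
```

With the sample above, the helper reports one method, GetUserProfileByName, taking one parameter, AccountName. That is essentially the information the InfoPath wizard needs before it can prompt you for anything.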

InfoPath Data Sources and Data Connections

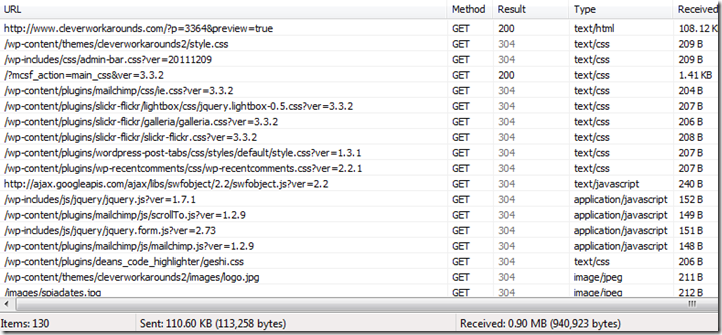

Now, it is time to actually get InfoPath to talk to this “UserProfileService” web service as described above.

As I said, web services are one of several sources of data that InfoPath can access. First up, we need to make a connection to a data source (imaginatively called Data Source connections).

From the Tools menu, choose “Data Connections” and a dialog box asks you for some information about the sort of data connection that we want to use. We wish to receive Homer’s details from the web service, so we choose to receive data and choose “Web Service” as the source of our data.

![image_thumb[23] image_thumb[23]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb23_thumb.png)

![image_thumb[25] image_thumb[25]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb25_thumb.png)

![image_thumb[26] image_thumb[26]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb26_thumb.png)

We are next asked for the URL of the web service. We pass it the URL of http://radioactivenet/_vti_bin/UserProfileService.asmx?WSDL

![image_thumb[28] image_thumb[28]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb28_thumb.png)

Note: This really should be the exact sub-site where the InfoPath form was published to. So, if say, this form was to be published into a sub site called HR, then the URL would be http://radioactivenet/HR/_vti_bin/UserProfileService.asmx?WSDL.
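The URL pattern is simple enough to sketch as a small Python helper. The function name `user_profile_service_url` is my own invention for illustration; the only facts it relies on are the `_vti_bin/UserProfileService.asmx` path and the `?WSDL` suffix described above.

```python
def user_profile_service_url(site_url: str, wsdl: bool = False) -> str:
    """Build the UserProfileService address for a site or sub-site URL."""
    base = site_url.rstrip("/")  # tolerate a trailing slash on the site URL
    url = f"{base}/_vti_bin/UserProfileService.asmx"
    return url + "?WSDL" if wsdl else url


# Root site, plain service address
print(user_profile_service_url("http://radioactivenet"))
# HR sub-site, WSDL address (the one the InfoPath wizard is given)
print(user_profile_service_url("http://radioactivenet/HR/", wsdl=True))
```

The second call produces the HR sub-site address from the note above.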

Click “Next” and you are presented with the various methods that this web service provides. The method that we are going to use is “GetUserProfileByName”. We choose it and click “Next”.

![image_thumb[30] image_thumb[30]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb30_thumb.png)

At this point, we really start diving deep into talking to web services. InfoPath examines the details of this method by looking at the WSDL information. It determines that this particular method (GetUserProfileByName) expects a parameter to be passed to it called “AccountName”. We are prompted to supply a value for “AccountName”. Fortunately for us, if we do not supply a parameter here, then the web service will use the account name of the currently logged in user! This is exactly what we want. This method will automatically use the username of the current person without us having to manually specify it.

Thus, we can leave this screen as is and click NEXT. The final message asks if we wish to connect to the web service now to retrieve data, but in this case we do not.

![image_thumb[32] image_thumb[32]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb32_thumb.png)

![image_thumb[34] image_thumb[34]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb34_thumb.png)
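If you are wondering what leaving AccountName blank looks like on the wire, the sketch below builds a SOAP request body in Python without sending it anywhere. Treat the details as assumptions: `build_request` is a hypothetical helper, and the `SERVICE_NS` namespace is what I believe this service uses, so check it against the WSDL of your own farm before relying on it.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
# Assumed service namespace; confirm it against your own ?WSDL output.
SERVICE_NS = (
    "http://microsoft.com/webservices/SharePointPortalServer/UserProfileService"
)


def build_request(account_name: str = "") -> str:
    """Return a SOAP envelope calling GetUserProfileByName.

    An empty account_name mirrors leaving the wizard field blank, which
    (per the article) makes the service use the current user.
    """
    ET.register_namespace("soap", SOAP_NS)
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    method = ET.SubElement(body, f"{{{SERVICE_NS}}}GetUserProfileByName")
    ET.SubElement(method, f"{{{SERVICE_NS}}}AccountName").text = account_name
    return ET.tostring(envelope, encoding="unicode")


print(build_request())            # blank AccountName: "whoever is logged in"
print(build_request("hsimpson"))  # explicit account name
```

InfoPath builds and sends the equivalent of this for you every time the form opens, which is why you never have to see it.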

We have now finished configuration. We are asked to save this connection and give it a name. As you can see below, the name defaults to the method name, so we will leave it unchanged. We also tick the box to connect to the data source as soon as the form is opened.

![image_thumb[36] image_thumb[36]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb36_thumb.png)

Using the data connection

We have an InfoPath data connection to this web service. Now what?

Well, let’s go back to our employee name text box and change the default value to something returned by the web service method GetUserProfileByName. As we did earlier, we click the function button, but this time we are not going to add a function. We will instead use the “Insert Field or Group” button.

![image_thumb[38] image_thumb[38]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb38_thumb.png)

![image_thumb[40] image_thumb[40]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb40_thumb.png)

By default, InfoPath has a “main” data source, which is the form itself. We now have to tell InfoPath that the default value for the employee name is going to come from a different data source. From the data source drop-down, choose the secondary data source called “GetUserProfileByName” as shown below.

![image_thumb[42] image_thumb[42]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb42_thumb.png)

![image_thumb[44] image_thumb[44]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb44_thumb.png)

Notice that the fields available to choose for the GetUserProfileByName data source look very different to those of the main data source. This is because the web service returns data in a particular format, and it is now up to us to interrogate that format to get the information we want.

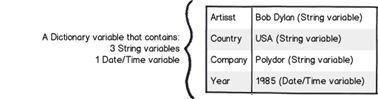

The GetUserProfileByName method returns a whole bunch of user profile values, way more than the stuff we specifically want. It returns these details in a name/value format as shown below:

| Name | Value |
| --- | --- |
| FirstName | Homer |
| LastName | Simpson |
| Office | Sector 7G |
| Title | Safety Inspector |

… and another 42 name/value pairs like the above!

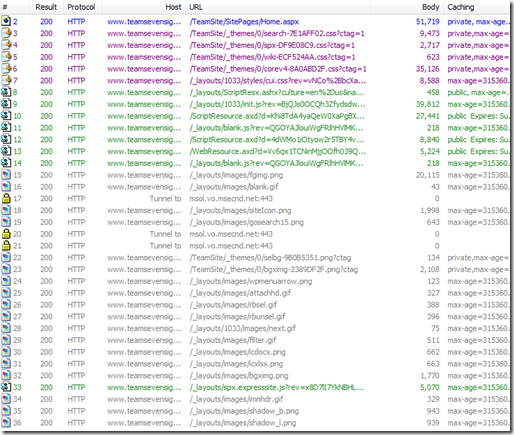

So we will have to tell InfoPath to examine the full list of “Values”, and find the specific value for “FirstName”.

Note that firstname and lastname are separate items. There is no property called “FullName”. We will deal with this a little later.

Now although telling InfoPath to filter the user profile information is achieved using a wizard, it is not the most intuitive process. Once you have done it a few times, it becomes second nature. But be warned – you may be about to suffer death by screenshot…

Recall that in the last screenshot we were looking at the data returned by the web service method called GetUserProfileByName. We need to filter the 46+ possible profile values down to the specific one we need. Now you know what that “Filter Data…” button does.

From the list of fields returned by this method, choose “Value” and click “Filter Data”.

![image_thumb[45] image_thumb[45]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb45_thumb.png)

We have now told InfoPath that we want one of the “Value” fields, but we need to filter it down to the specific value that we need: the one that belongs to the property named “FirstName”. So in effect we are saying to InfoPath, “give me the Value for the property whose Name equals FirstName”. The sequence of screenshots below shows how I tell InfoPath to use the Name field as the filter field.

![image_thumb[47] image_thumb[47]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb47_thumb.png)

![image_thumb[48] image_thumb[48]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb48_thumb.png)

Now we have to set name to equal the property name “FirstName”. The next two screenshots show this.

![image_thumb[50] image_thumb[50]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb50_thumb.png)

![image_thumb[53] image_thumb[53]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb53_thumb.png)

Click OK and you now have your formula as shown below.

![image_thumb[55] image_thumb[55]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb55_thumb.png)
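If the wizard clicks feel opaque, the filter we just built boils down to “walk the name/value pairs and return the value whose name matches”. Here is that idea as a Python sketch. The `SAMPLE_RESULT` XML is a simplified, hypothetical stand-in for the real response (I have dropped the namespaces and most of the properties), and `profile_value` is a name I made up.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical response: namespaces removed, only three
# of the many profile properties shown.
SAMPLE_RESULT = """
<GetUserProfileByNameResult>
  <PropertyData>
    <Name>FirstName</Name>
    <Values><ValueData><Value>Homer</Value></ValueData></Values>
  </PropertyData>
  <PropertyData>
    <Name>LastName</Name>
    <Values><ValueData><Value>Simpson</Value></ValueData></Values>
  </PropertyData>
  <PropertyData>
    <Name>Office</Name>
    <Values><ValueData><Value>Sector 7G</Value></ValueData></Values>
  </PropertyData>
</GetUserProfileByNameResult>
""".strip()


def profile_value(result_xml, property_name):
    """Return the Value of the property whose Name matches, else None."""
    root = ET.fromstring(result_xml)
    for prop in root.iter("PropertyData"):
        # This comparison is the "Name is equal to FirstName" filter.
        if prop.findtext("Name") == property_name:
            return prop.findtext("Values/ValueData/Value")
    return None


print(profile_value(SAMPLE_RESULT, "FirstName"))  # Homer
```

The formula InfoPath generates expresses the same filter, just written against the real response structure rather than a Python loop.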

Click a zillion other “OK” buttons and you will be back at your form in design mode. Click the “Preview” button and check the Employee Name field. Wohoo! It says “Homer”!

![image_thumb[57] image_thumb[57]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb57_thumb.png)

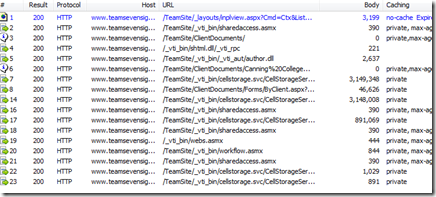

But I said “full name” not “first name”…

So, that’s great. Although connecting to a web service and telling it to retrieve the correct data is tricky and requires some training, no custom programming code was written to do this. But alas, poor old Homer still gets a little confused, and we wish to see the full name of the person filling in the form.

Fortunately, this is actually quite easy. We just need to grab the FirstName and the LastName values from the web service and then join them together.

I demonstrate this below (with an adjusted formula – for the sake of article length I’ve not added the screenshots used to create this formula. I am hoping that I gave you enough to figure it out for yourself).

Application developers or Excel people will quickly see that I have retrieved the “LastName” value using the exact same method described in the last section for the “FirstName” value. I then used the built-in function concat (concatenate) to join FirstName and LastName together (with a space in between).

![image_thumb[59] image_thumb[59]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb59_thumb.png)
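For readers who think better in code than in formula dialogs, the joining step amounts to something like the Python sketch below. The `profile` dictionary and the `full_name` helper are hypothetical stand-ins for the values pulled from the web service. One deliberate difference from a plain concat: this version skips the space if one of the two names is missing.

```python
def full_name(profile: dict) -> str:
    """Join FirstName and LastName with a single space between them."""
    first = profile.get("FirstName", "")
    last = profile.get("LastName", "")
    # Drop empty parts so a missing name does not leave a stray space.
    return " ".join(part for part in (first, last) if part)


profile = {"FirstName": "Homer", "LastName": "Simpson", "Office": "Sector 7G"}
print(full_name(profile))  # Homer Simpson
```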

Let’s now preview the form… Wohoo!

![image_thumb[61] image_thumb[61]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb61_thumb.png)

So, just to be completely sure, we republish the form, following the steps in part 4 and examine the form in the browser to make sure that it works there as well.

![image_thumb[63] image_thumb[63]](http://www.cleverworkarounds.com/wp-content/uploads/2013/07/image_thumb63_thumb.png)

Conclusion (and further notes)

So, amazing even yourself with your ingenuity, you have managed to get InfoPath to retrieve the details it required so that the user did not have to fill in their name manually. There was certainly a lot more to it than using a built-in InfoPath function, and (the first time, anyway) it probably took you a little while. But the main thing is that you have satisfied the first requirement and Waylon Smithers is now happy. He is a little bewildered by all the low-level web service stuff and concerned about how easily some of his staff could create forms, but regardless, everything was performed via the InfoPath graphical user interface.

So in the next post, we will look at two ways we can deal with the automatic retrieval of the employee number.

Thanks for reading

Paul Culmsee

P.S. Do not attempt to explain the low-level details of pulling values from a web service in a presales demo.
