Why do SharePoint Projects Fail? – Part 8

Hi

Well, here we are at part 8 in a series of posts dedicated to the topic of SharePoint project failure. Surely after 7 posts, you would think that we are exhausting the various factors that can have a negative influence on time, budget and SharePoint deliverables? Alas no! My urge to peel back this onion continues unabated and thus I present one final post in this series.

Now if I had my time again, I would definitely re-order these posts, because this topic area is back in the realm of project management. But not to worry. I’ll probably do a ‘reloaded’ version of this series at some point in the future or make an ebook that is more detailed and more coherently written, along with contributions from friends.

In the remote chance that you are hitting this article first up, it is actually the last of a long series written over the last couple of months (well, last for now anyway). We started this series with an examination of the pioneering work by Horst Rittel in the 1970s and subsequently examined some of the notable historical references to wicked problems in IT. From there, we turned our attention to SharePoint specifically and why it, as a product, can be a wicked problem. We looked at the product from several viewpoints, specifically those of project managers, IT infrastructure architects and application developers.

In the last article, we once again drifted away from SharePoint directly and looked at the contribution of senior management and project sponsors. Almost by definition, when looking at the senior management level, it makes no sense to be product specific, since at this level it is always about business strategy.

In this post, I’d like to examine when best practice frameworks, the intent of which is to reduce risk of project failure, actually have the opposite effect. We will look at why this is the case in some detail.

CleverWorkarounds tequila shot rating..

For readers with a passing interest in best practice frameworks and project management.

For nerds who swear they will never leave the "tech stuff."

Wicked problem or wicked process dogma?

The last major topic area I will cover is really a manifestation of wicked problems as described in part 1. As an example, let’s say our SharePoint project has completely gone off the rails. Scope creep is rampant, requirements are unclear, time and budget have blown out, users hate it, the project team is dejected, the sponsor has disowned the project and senior management are very unhappy.

Is that example extreme? Not really – although I’m using SharePoint as an example here, the same story commonly afflicts many IT projects.

But what I have noticed is that the more acute the wicked problem symptoms are, the more likely it is that significant blame is laid at the "process" level, rather than on the wicked problem at the root of it all. For sure, I agree that process issues are a big factor. However, the inevitable outcome of blaming the process is that you spend all of your time and energy looking at solutions for the process, in the belief that more formal, standardised process steps would have helped solve the problem. The reality, however, is that the project team likely did not have a shared understanding of the problem.

It is very easy to see how this happens. "We are so far over time and budget, we must not have used a strict process to nail down scope and requirements". Thus, best-practice frameworks suddenly come into the mix because, after all, they are best practice, right?

My observation here is that the team or organisation still may not realise that they are dealing with a wicked problem, and this crucial factor is often overlooked. In reality, it doesn’t really matter which best practice you pick (and there sure are plenty to pick from). My experience is that the more the problem’s "wickedness factor" increases, the more the "panacea effect" kicks in for the chosen best practice framework. The panacea effect (which I defined in part 3) is a reflection of how badly a project has gone: the worse the fallout, the greater the pressure to get things back on track, and therefore anything labelled "best practice" sounds positively dandy.

As a result, the implementation of a best practice is itself doomed to fail for much the same reasons that your original project failed!

Why? Once things get bad, relationships are strained and communication is poor. If your team was not able to gain a shared understanding of the original problem to solve, what makes you any more likely to gain a shared understanding of how to implement a best practice framework? Those frameworks require deep understanding too!

When frameworks bite back!

Anyways, as I write this post, the immediate analogy that summarises the theme of this last post is one of those dodgy ‘reality’ shows like "when animals attack", or "when household appliances go bad". You know those shows that tend to get broadcast during non-ratings periods? Well, I have an idea for a pilot. Do you think the TV networks will take it up?

The pitch of my new reality show is to show reel after reel of emotionally shattered project teams, with dramatic re-enactments of the incidents set against those music scores that build tension – I’m thinking Mike Oldfield’s "Tubular Bells" here (that creepy piano piece used in the movie "The Exorcist"). To host the show, I’m thinking either Scott Baio or Mr T would suffice.

Each episode will tell a harrowing story of an initial, well-intentioned effort to use a best-practice framework to improve some aspect of the business. Buy-in and motivation are initially high among participants, but the effort is quickly and fatally derailed by misplaced expectations and over-zealous, rigid implementations that result in increased red tape for little measurable gain.

Imagine the "60 minutes" style voice over during the opening credits…

"…they thought it would just be a routine project, but little did they know of the horror that was about to change their lives forever…"

Introducing the All Star Cast

As you can see in my mythical DVD cover, there is a bit of an all-star cast of frameworks there. But really, it only scratches the surface of the variety of methods, tools and alternatives in the realm of the ‘framework’. If you were going to break them down into broad categories (which can be tough because of significant overlap amongst them), my suggestion is to have a look at the webcast by Lynda Cooper of Fox IT. This is an excellent presentation that breaks down various frameworks into decent groupings and relates it all back to IT governance for business.

Although this series is not directly focused on IT governance as such, the webcast above is a great reference to put it all into perspective and I intend to write more on this topic in a SharePoint context.

Lynda lumps all of these frameworks into 3 broad categories: Best Practice Guidelines, Standards and Recognised Measurement Techniques.

Best Practice Guidelines

Examples: PMBOK, Prince2, COBiT, ITIL

Best practice guidelines tend to have wide recognition within their industry, but generally an organisation does not certify against them (individuals however can do so). The guidelines tend to be created and maintained by a consensus of a cross-section of industry experts who develop the reference material. The key point in relation to best practice guidelines is that they can be selectively applied – i.e. take the good bits!

Standards

Examples: ISO9001, ISO27001, BS25999

Standards are more mandatory than best practice guides. They are published by standards organisations, both regional and international. They are widely recognised, and an organisation can be independently certified to them and regularly audited against them. Generally here it’s all or nothing – if you do not satisfy the various controls within the standard, you are non-compliant.

Recognised Measurement Techniques

Examples: CMMI, Six Sigma

Recognised measurement techniques seem to fall in between best practice guidelines and standards. They tend not to be developed by a standards body; instead, they are often developed by a single organisation and see wider adoption over time. Wide industry recognition is important here and, as with standards, an independent auditor can assess the organisation’s compliance. The logic here though is that these measurements allow you to rate yourself against your competition, since you are all using the same metrics.

Which framework to pick on today?

For the purpose of this post and the topic at hand, I am going to pick on PMBOK only, but please bear in mind that the same arguments can be pretty much made with any of the other frameworks.

Now if any geeks have read this post thus far, and are used to religious wars based around their favourite operating system, you ain’t seen nothin’ yet when it comes to best practice frameworks. Just like the great platform wars of Microsoft vs *nix, there are similar schools of thought as to which framework is "better" than the other. A practitioner of one framework will commonly pooh-pooh another framework. Also like the platform wars, there is always considerable criticism of the more popular frameworks like Six Sigma, ISO9001 and PMBOK because they are more widely used and therefore subject to more (mis)interpretation, dogma, certification waving and the like.

Before I particularly pick on PMBOK however, the inevitable question from an organisation perspective is, "Which framework is right for me?"

Hmm – I wonder if that innocent question is a wicked problem in the making? 🙂

Not many people have read all frameworks in depth, because no matter which framework you pick, you are setting yourself up for a fairly dry read. For some insane reason I like them and while everyone else downloads porn, I actually read these things! Most of them are several hundred pages of material that will cure even the most vicious case of insomnia. In my opinion the most readable one is COBiT, but given that it’s pitched at management, it has to have lots of nice pictures 🙂

The reality is that you’re either going to ask a consultant to tell you what to adopt or start a heck of a lot of reading. Only a true pragmatist would do the latter, because they by nature don’t trust the consultant 😉 No matter how you decide which framework is for you, you will quickly come across some academic telling you that you have to actually combine them to achieve "full governance". Sheesh – so many books, so little time 🙁

History of "Waterfall"

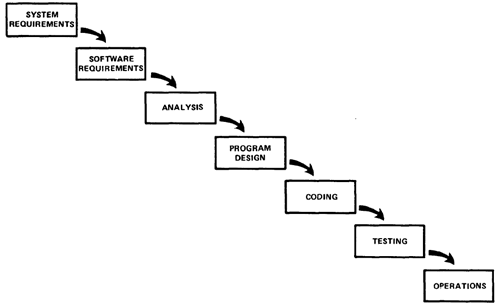

So, let’s get back to picking on PMBOK. In software development, and the project management around it, the term "waterfall" is a bit of a dirty word these days. The term originates from a paper written by Dr. Winston Royce around the time of Horst Rittel (1970). For reference, his paper is available online.

The basic idea I’m sure is very familiar to you. Anybody remotely connected to software development or project management will be familiar with this approach.

Gather data -> Analyze data -> Formulate solution -> Implement solution

PMBOK basically has the following "Process Groups" that are logically similar.

Initiating -> Planning -> Executing -> Controlling -> Closing

For many years, conventional wisdom in project management and software development was to follow an orderly and linear process based on Royce’s philosophy. The term “Waterfall model” comes from the image of a waterfall, as the project “flows” down each of the discrete steps.

I find it interesting that Royce’s paper managed to establish itself as the underlying philosophy for many prevailing project management and software design methods. Rittel’s work, on the other hand, did not receive the same coverage and has largely been ignored by comparison.

It is little wonder then, that many PMBOK practitioners have the waterfall model so ingrained into their thinking. Although I believe that it is misguided, PMBOK and many other frameworks are associated closely with waterfall. I have worked within a much-vaunted PMO (Program Management Office) environment at two different organisations, and in both cases, the PMO was based on an extremely rigid interpretation of the PMBOK and waterfall philosophy.

Waterfall – Predicated on false assumptions?

For this next section, I have to offer my sincere thanks to Chris Chapman, who made me aware of a subtle yet important fact about the waterfall model. He wrote a brilliant post entitled "Observations on the Rigor of Waterfall", and referred to a post from David Christiansen. Both authors do a great job of systematically pulling apart some of the misconceptions of the waterfall model (and I urge you to read both posts). Probably the most interesting thing is the following quote from Royce’s paper…

I believe in this concept, but the implementation described above is risky and invites failure… The testing phase which occurs at the end of the development cycle is the first event for which timing, storage, input/output transfers, etc., are experienced as distinguished from analyzed. These phenomena are not precisely analyzable. They are not the solutions to the standard partial differential equations of mathematical physics for instance. Yet if these phenomena fail to satisfy the various external constraints, then invariably a major redesign is required. The required design changes are likely to be so disruptive that the software requirements upon which the design is based and which provides the rationale for everything are violated. Either the requirements must be modified, or a substantial change in the design is required. In effect the development process has returned to the origin and one can expect up to a 100-percent overrun in schedule and/or costs.

So Royce, in his original paper, actually recognises that the waterfall model is risky and invites failure! Why hasn’t this important fact made it into the standard theory of any best practice framework? To be fair to Royce though, he does go on to say:

I believe the illustrated approach to be fundamentally sound. The remainder of this discussion presents five additional features that must be added to this basic approach to eliminate most of the development risks.

Has Waterfall been misrepresented? You be the judge.

How we really solve problems…

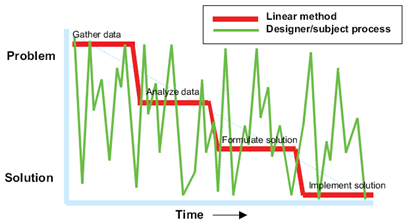

I own an exceptional book by Jeff Conklin called Dialogue Mapping – Building Shared Solutions to Wicked Problems. The first chapter offers a particularly good, more scientific dissection of the waterfall model. He reaches similar conclusions to Chris Chapman and David Christiansen, with one major point of difference. Jeff Conklin’s mentor was Horst Rittel, and thus his book is a more modern take on wicked problems in general, rather than software development projects. In his book, Conklin describes the ‘traditional’ approach of waterfall-based problem solving. He cites a study from the 1980s that examined how people solve problems. An experiment was conducted in which designers had to design a hypothetical elevator control system for an office building. Each participant was asked to think out loud while they worked on the problem. The results showed fairly clearly that participants worked simultaneously on understanding the problem and formulating a solution.

Conklin created a great diagram to illustrate the real application of the problem-solving process (reproduced here). He overlays the linear (waterfall) approach of how a problem moves to its solution with the thought process captured from the elevator experiment.

Conklin observed that when faced with a novel and complex problem, we humans do not naturally start by gathering data about the problem. Instead, understanding of a problem can only come from the thought process of creating solutions and relating them back to the original problem. The green line in the chart illustrates this. Conklin also notes that understanding continued to evolve all the way through the experiment. He concludes that "understanding" is something that evolves continually, and that the "real issue" is sometimes a moving target.

Is there a place for the linear approach?

You might get the idea in this post that I am dumping on waterfall completely. That is not the case at all. In my case, I feel that I don’t have enough experience to make the call, but it doesn’t take too much googling to find some great opinions. I highly recommend reading Mike Bianchi, Dave Nicolette and Abrachan Pudusserry. Additionally, one really excellent paper by David Longstreet takes a very Six Sigma-style approach (in God we trust, all others bring data) to criticise agile-type methods.

In my experience, certainly in the infrastructure projects that I have been involved in, a phased approach has served us well. Understanding why is a whole separate post, but I feel that certain types of problems lend themselves to the waterfall philosophy and others do not. Obviously the trick is understanding the types of projects that have a natural leaning towards waterfall as opposed to the alternatives.

When I look at successful infrastructure projects, they tend to work because the requirements are fairly static. Take Exchange server as an example. Once you know how many sites, how many users, the communications infrastructure to each site, the mailbox requirements and the like, you can pretty much develop a project plan with reliable estimates of cost and time. If you ask me to plan a SharePoint farm, covering sizing, infrastructure, governance and training, I could also give you an answer with a fair degree of confidence, as I’ve done so many times. But then by definition, we are not talking about a wicked problem, are we?

Dave Nicolette’s article in particular takes a really good look at the characteristics of waterfall versus agile and I agree completely with his summary.

| Adaptive (Agile) | Predictive (Waterfall) |
|---|---|
| High uncertainty or high urgency | Low uncertainty and low urgency |
| Non-repeating process | Repeating process |
| New product development | Not new product development |
| Right sort of people on staff | Traditional, process-oriented staff |
| High level of direct customer participation | Low level of direct customer participation |

It is little surprise that the left side of the above table is typical of wicked problems and the right side is not. When a project meets the criteria that he lists on the right side of the table, the green line in Conklin’s chart matches the red line fairly evenly: you no longer need to skip back and forth between problem and solution. Each successive time you do the same implementation reduces the spikiness of the green line.
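As a rough illustration of how those criteria might be used as a sanity check, here is a minimal Python sketch. The `ProjectProfile` fields, the equal weighting of each row and the simple majority rule are all my own assumptions for the sake of the example; none of it comes from Dave Nicolette’s article, so treat it as a conversation starter rather than a decision tool.

```python
from dataclasses import dataclass, fields


@dataclass
class ProjectProfile:
    """Hypothetical yes/no answers, one per row of the adaptive column."""
    high_uncertainty_or_urgency: bool
    non_repeating_process: bool
    new_product_development: bool
    adaptive_minded_staff: bool
    high_customer_participation: bool


def adaptive_score(profile: ProjectProfile) -> int:
    """Count how many rows land in the adaptive (agile) column."""
    return sum(getattr(profile, f.name) for f in fields(profile))


def suggested_leaning(profile: ProjectProfile) -> str:
    """Assumed rule of thumb: a simple majority of rows decides the leaning."""
    score = adaptive_score(profile)
    total = len(fields(profile))
    return "adaptive" if score * 2 > total else "predictive"


# Two made-up projects: a repeatable infrastructure rollout and a
# SharePoint project that includes custom product development.
exchange_rollout = ProjectProfile(False, False, False, False, False)
sharepoint_with_custom_dev = ProjectProfile(True, True, True, False, True)

print(suggested_leaning(exchange_rollout))
print(suggested_leaning(sharepoint_with_custom_dev))
```

A checklist like this only prompts the right conversation. The answers themselves still depend on the stakeholders having a shared understanding of the problem.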

But unfortunately for us all, many SharePoint projects have a big product development phase in there as well. More often than not, that little green line starts to spike again.

SharePoint Projects = Fluid Requirements

We spent several posts on SharePoint considerations from the Microsoft effect, the panacea effect, point vs platform, the new product factor, buzzword abuse, product complexity, organisational maturity, product skills, infrastructure complexity, information architecture complexity, governance complexity, developer skills, junk product DNA, branding, etc.

That is a lot of considerations! Therefore, a lot of soul searching, dialogue and shared understanding among stakeholders is required!

Is it any wonder then, that people aren’t sure what they want?

Is it any wonder then, that people do not have a shared understanding of the truth?

Is it any wonder then, that people have a propensity to jump into a new product before they have fully appreciated the complexity of what they are getting themselves into?

More importantly still..

Is it likely then, that PMBOK, applied as a rigid, phased approach, is going to make any significant impact on the above problems? No, in fact it can make them worse and set up the project for failure from the very beginning.

PMBOK – Just misunderstood…

Earlier in this post, I observed that the more popular frameworks like ITIL, PMBOK and Six Sigma attract criticism about their success rate. Six Sigma, in particular, has a running joke that its success rate is around two sigma. Aside from the fact that implementing a framework is a project in itself and therefore subject to many of the same issues that a SharePoint project encounters, I believe that a big factor in this criticism stems from applying a very rigid, "one size fits all" approach to the implementation of the framework.

Thus, despite what this post may imply, I am in no way saying that all of these frameworks are bad. To the contrary, I believe that every one that I have studied has a lot to offer and the problem is all in the assumptions and execution of the given framework. (Wicked problem fodder!)

So, in defence of PMBOK, and for that matter most of the methodologies that I have read, the best practice frameworks specifically encourage practitioners to apply the guidelines in a manner that best suits their circumstances. Probably one of the best write-ups to illustrate this in relation to PMBOK and Agile software development was a four-part series written by Michele Sliger.

Specifically, on page 20 of the PMBOK, the text states "There is no single best way to define an ideal project life cycle". Furthermore, the PMBOK goes on to state "The project manager, in collaboration with the project team, is always responsible for determining what processes are appropriate, and the appropriate degree of rigor for each process, for any given project".

To all the PMBOK practitioners that skim this article and think that I am dumping on that framework, please don’t misunderstand. I like PMBOK a lot, I just dislike dogmatic interpretation of what it offers. The same applies to pretty much every other framework too, including the agile ones. In a project like SharePoint, where it should now be very clear that requirements are often (but not always) fluid and understanding among stakeholders definitely varies, assuming that the root of all problems would be solved via a framework is likely misguided.

It’s the "problem", not the "process"

If you take anything away from this article, here are the key points:

- Do not mistake a lack of understanding of the problem for a fault in the process used. The process usually isn’t the root cause.

- Do not assume that improvement frameworks are the panacea to cure previous failures.

- Do not assume that improvement frameworks must be implemented in their entirety and in a standard way.

- Consider that SharePoint projects by their nature have characteristics that do not lend themselves to improvement frameworks that are implemented with a strict philosophy.

- Consider that implementing an improvement framework is a project in itself. How likely is its implementation to suffer from the same problems as your original failed project?

Summing Up

So, here we are. After 8 posts I am finally spent with this topic. There are other project failure topics, but I fear that to keep going would start to bore people to tears.

What would be most satisfying to me is that somewhere in the world, someone has found this series of posts, recognised that the symptoms I described are affecting their projects and was able to use the content to help their project in some way before things became terminal. Like many afflictions in life, one of the main factors on the road to recovery is shared recognition of the problem. If you can get to that point then you are halfway there.

I sincerely hope that you have found this series useful. I really had little idea when I started the first post, that it would end up being one of eight. Nor did I really have much idea of the direction it would take. But I am pretty pleased with the outcome and along the way I’ve been able to meet and chat to some really brilliant minds out there and I have gained a hell of a lot personally from writing it. To all who have provided feedback or written nice things about this series, a huge thanks and if you ever are in sunny Perth, then beers are definitely on me.

Thanks people!

Paul

June 4th, 2008 at 12:53 pm |

Thanks, Paul. Keep them coming!

We had a discussion among team members of our SharePoint practice today. We had previously gone on and on working on developing a standard Statement of Work for a typical SharePoint project, only to realize that there is no such thing as a typical SharePoint project.

There are, however, common themes that emerge once you do a couple of dozen, and there are several phrases that should NEVER be included in a Statement of Work for SharePoint consulting services. (that is a teaser for a future blog article, perhaps)

A great quote from Winston Churchill comes to mind (did you tell me this?):

“Plans are worthless; [the act of] planning is priceless.”

Cheers, mg

June 4th, 2008 at 1:38 pm |

phrases? “how much” and “how long” should be absolutely outlawed

June 5th, 2008 at 5:34 pm |

Very interesting, particularly like the bit near the end (it took a while to get there) with agile vs waterfall. I think the distinction between ‘new product development’ and ‘not new’ is the key. Basically the justifications I’ve seen before for software dvt needing an agile approach as opposed to say an engineering dvt which can be waterfall based come down to that with a lot of software we don’t really know what people want, can’t explain what it will/can do, how the @^$& it actually works and fits together, and what the issues will be. As opposed to say building a new office block where the requirements, technology and risks are much better known. Sharepoint sits somewhere uncomfortably in the middle of the two where there is a degree of configuration and yet some custom coding with various unknowns. Still trying to figure out the best way to manage that. And of course – as you say early on in the series, there is a high degree of ‘we want sharepoint’ rather than solution to a problem. Only the other day someone in marketing said ‘we want it (the intranet) to be funky and sexy so people use it’. I was looked at blankly when I asked what people would use it for.

June 5th, 2008 at 8:00 pm |

Haha! After 6 months someone finally tells me I waffle! 😉

June 6th, 2008 at 12:39 pm |

Thanks for the mention, Paul – much appreciated. Of course, I can’t take total credit for “systematically dissecting the waterfall” – I’m afraid sharper minds than mine did that first – guys like Craig Larman, for example, or Fred P. Brooks Jr. for another. Like you, I just got pointed in the right direction, did some digging and had my worst fears affirmed.

With respect to applying the agile approach to SharePoint, take a look at Essential SharePoint 2007: Delivering High Impact Collaboration. The authors repeatedly make the case for segmenting delivery and getting users involved as soon as possible with their own data in a working environment.

It just stands to reason: If you go dark for a long period of time before involving the client and end-users, you’re setting yourself up for some awkward, uncomfortable conversations later on.

Great series, Paul. I’m referring folks within MCS here in Canada to check it out – even a couple of clients…!

Cheers!

June 6th, 2008 at 12:57 pm |

Thanks Chris, I actually own that book – surprised? 😉

Definitely check out Conklin’s stuff, it’s complementary yet has little to do with agile as a methodology. His theme is that shared understanding is the root of it all. Agile (and Scrum obviously) happens to have techniques within it that facilitate shared understanding, but there is still a lot of process there and therefore people can get fixated on whether they are following the process right, as opposed to getting everyone on the same page whatever the means…

September 11th, 2008 at 10:25 pm |

Spot on. I read the entire set of articles from start to finish. Interesting, enlightening and entertaining. Can’t wait to recommend this to our SharePoint team!

January 30th, 2009 at 5:41 pm |

I am in the unenviable position of trying to foster a more mature approach to support for our organisation. We already use Sharepoint in a very limited capacity as an intranet portal, and some thought was going towards turning it into an all singing all dancing toy.

After reading all this, I am content to use it as a very simple document repository to start with. It can do ONE thing well for now, and when I know how it performs for that, I will integrate the others. Stably.

February 12th, 2009 at 7:25 am |

[…] and in fact, most *encourage* you to take the bits that make logical sense for you. As I wrote in Part 8 of the "SharePoint Project Failure…" series, I found it ironic that implementation […]

March 1st, 2009 at 11:27 am |

Paul,

I printed out the entire series to read over the weekend. Just finished reading through, and all I can say is, “I wish I’d written it myself.” Thanks for a brilliant analysis of why projects fail.

As I’m sure you’re aware, the difficulty of achieving a shared understanding in software projects was also highlighted by Brooks in his classic article, No Silver Bullet. A quote from the paper is particularly apposite:

“…I believe the hard part of building software to be the specification, design, and testing of this conceptual construct, not the labor of representing it and testing the fidelity of the representation. We still make syntax errors, to be sure; but they are fuzz compared with the conceptual errors in most systems.

If this is true, building software will always be hard. There is inherently no silver bullet…”

Brooks mentions four inherent, irreducible properties of software systems: complexity, conformity, changeability and invisibility. A while ago I wrote a piece entitled, On the intrinsic complexity of IT projects, wherein I discuss the connections between Brooks’ analysis of the software systems and technical project management.

Thanks again for your entertaining and edifying analysis of why projects fail – links to the series will be forwarded to friends and colleagues shortly.

Regards,

Kailash.

March 1st, 2009 at 11:34 am |

Kailash, I am a big fan of your blog and your writings, so your feedback is immensely gratifying.

March 12th, 2009 at 7:47 am |

Paul – I ran across this series and read the entire posting, 1 to 8, this afternoon. Very insightful and thought provoking. I am in the early planning phases of a SharePoint implementation and will put the info in this series to good use.

Thank you for taking the time to share your thoughts and experience.

March 17th, 2009 at 12:46 am |

[…] has been around since… forever. I wrote in more detail about the perils of waterfall in the project fail series in the section "how we really solve […]

March 4th, 2010 at 12:08 pm |

Paul, all I can say is wow. Great series!!! I was actually searching for some entertaining reading on “SharePoint Horror Stories” and ended up finding your series. This series is full of points of wisdom and excellent links to other relevant and as intriguing articles. I can definitely say this series will be one I reference frequently in the future when dealing with wicked problems. I will probably also point some managers and stakeholders to pieces of this series in order to try to help educate them about how essential the roles they play are in projects. Please let me know if you do or have put this out as a white paper. I believe that would be priceless. Once again fantastic work.

June 18th, 2012 at 10:45 pm |

While I seem to have been a little late in discovering this jewel of a series, I wanted to congratulate you on presenting one of the best synopses I’ve read about issues with projects in general. While I am currently at work attempting to define the various problems the “Business Systems” group I’m in are trying to solve (there are just so many!), which is directly related to IT solutions (our current “methodology” looks a lot like that seismograph), it was thrilling to see a set of posts so oriented on projects instead of the tech involved!

I’m passing along links not only to my IT nerds, but even the folks responsible for buying new manufacturing equipment and developing new products. This series was fantastic!!!