The cloud is not the problem–Part 3: When silos strike back…

My next-door neighbour is a builder. When he moved next door, the house was an old piece of crap. Within 6 months, he completely renovated it himself, adding in two bedrooms, an underground garage and all sorts of cool stuff. On the other hand, I bought my house because it was in a good location, someone had already renovated it and all we had to do was move in. The reason for this was simple: I had a new baby and, more importantly, me and power tools do not mix. I just don’t have the skills or the time to do what my neighbour did.

You can probably imagine what would happen if I tried to renovate my house the way my neighbour did. It would turn out like the Ikea fails in the video. Many SharePoint installs end up looking much the same. Moral of the story? Sometimes it is better to get something pre-packaged than to do it yourself.

In the last post, we examined the “Software as a Service” (SaaS) model of cloud computing in the form of Office 365. Other popular SaaS providers include SlideShare, Salesforce, Basecamp and Tom’s Planner, to name a few. Most SaaS applications are browser-based and not as feature-rich or complex as their on-premise competition. In that sense, the SaaS model is a bit like buying a kit home. In SaaS, no user of these services ever touches the underlying cloud infrastructure used to provide the solution, nor do they have a full mandate to tweak and customise to their hearts’ content. SaaS is basically predicated on two notions: that someone else will do a better set-up job than you, and the old 80/20 rule that most users only ever use a small subset of an application’s features.

Some people may regard the restrictions of SaaS as a good thing – particularly if they have previously dealt with the consequences of one too many unproductive customisation efforts. As many SharePointers know, the more you customise SharePoint, the less resilient it gets. Thus, restricting what sort of customisations can be done might, in many circumstances, be a wise thing to do.

Nevertheless, this actually goes against the genetic traits of pretty much every Australian male walking the planet. The reason is simple: no matter how much our skills are lacking, or however inappropriate our tools or training, we always want to do it ourselves. This brings me to our next cloud provider: Amazon, and their Infrastructure as a Service (IaaS) model of cloud-based services. This is the ultimate DIY solution for those of us who find SaaS cramping our style. Let’s take a closer look, shall we?

Amazon in a nutshell

Okay, I have to admit that as an infrastructure guy, I am genetically predisposed to liking Amazon’s cloud offerings. Why? Well, I am like my neighbour who renovated his own house: I’d rather do it all myself because I have acquired the skills to do so. So, to any server-hugging infrastructure people out there wondering what they have been missing out on: read on… you might like what you see.

Now first up, it’s easy for new players to get a bit intimidated by Amazon’s bewildering array of offerings, with brand names that make no sense to anybody but Amazon… EC2, VPC, S3, ECU, EBS, RDS, AMIs, Availability Zones – sheesh! So I am going to ignore all of their confusing brand names, assume that you have heard of virtual machines, and assume that you or your tech geeks know all about VMware. The simplest way to describe Amazon is VMware on steroids. Amazon’s service essentially allows you to create virtual machines within Amazon’s “cloud” of large data centres around the world. As I stated earlier, the official cloud terminology that Amazon is traditionally associated with is Infrastructure as a Service (IaaS). This is where, instead of providing ready-made applications as in SaaS, a cloud vendor rents out lower-level IT infrastructure: virtualised servers, storage and networking.

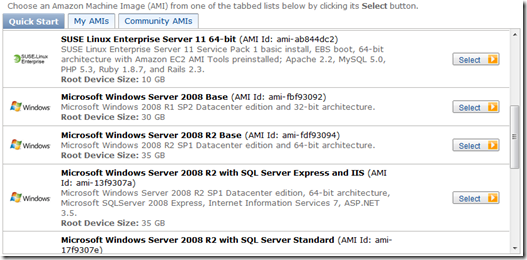

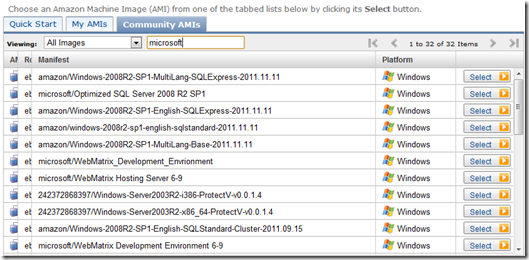

Put simply, using Amazon, you can deploy virtual servers with your choice of operating system, applications, memory, CPU and disk configuration. Like any good “all you can eat” buffet, you are spoilt for choice. You simply choose an Amazon Machine Image (AMI) to use as a base for creating a virtual server. You can choose one of Amazon’s pre-built AMIs (base installs of Windows Server or Linux) or an image from the community-contributed list of over 8000 base images. Pretty much any vendor out there who sells a turn-key solution (such as those all-in-one virus scanning/security solutions) has likely created an AMI. Microsoft have also gotten in on the Amazon act and created AMIs for you, optimised by their product teams. Want SQL Server 2008 the way Microsoft would install it? Choose the Microsoft Optimized Base SQL Server 2008R2 AMI, which “contains scripts to install and optimize SQL Server 2008R2 and accompanying services including SQL Server Analysis services, SQL Server Reporting services, and SQL Server Integration services to your environment based on Microsoft best practices.”

The series of screenshots below shows the basic idea. After signing up, use the “Request instance wizard” to create a new virtual server by choosing an AMI first. In the example below, I have shown the default Amazon AMIs under “Quick start” as well as the community AMIs.

Amazon’s default AMIs

Community-contributed AMIs

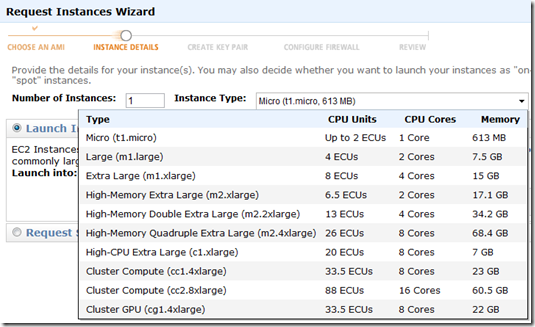

From the list above, I have chosen Microsoft’s “Optimized SQL Server 2008 R2 SP1” from the community AMIs and clicked “Select”. Now I can choose the CPU and memory configuration. Hmm, how does a 16-core server with 60 gig of RAM sound? That ought to do it… 🙂

Now, I won’t go through the full process of commissioning virtual servers, but suffice to say that you can choose the geographic location within Amazon’s cloud where the server will reside, and after 15 minutes or so your virtual server will be ready to use. It can be assigned a public IP address, firewall restricted and then remotely managed like any other server. This can all be done programmatically too: you can talk to Amazon via web services to start, monitor and terminate as many virtual machines as you want, which allows you to scale your infrastructure on the fly and very quickly. There are no long procurement times and you only pay for the servers that are currently running. If you shut them down, you stop paying.
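As a rough sketch of what “talking to Amazon via web services” can look like, the Python below uses boto3, the AWS SDK for Python. This is an assumption on my part: the post does not say which tooling was used, and boto3 postdates it. The AMI ID, region and instance type are placeholders, and actually calling `launch_and_terminate` needs AWS credentials and will incur charges; only `build_launch_request` runs offline.

```python
# Sketch only: assumes boto3 is installed and AWS credentials are configured.
# The AMI ID, region and instance type are placeholders, not values from the post.


def build_launch_request(ami_id: str, instance_type: str, count: int = 1) -> dict:
    """Keyword arguments for the EC2 RunInstances call (exactly `count` servers)."""
    return {
        "ImageId": ami_id,
        "InstanceType": instance_type,
        "MinCount": count,
        "MaxCount": count,
    }


def launch_and_terminate(region: str, ami_id: str, instance_type: str) -> str:
    """Start one server, wait until it is running, report its state, then terminate it."""
    import boto3  # imported here so build_launch_request works without the SDK

    ec2 = boto3.client("ec2", region_name=region)
    response = ec2.run_instances(**build_launch_request(ami_id, instance_type))
    instance_id = response["Instances"][0]["InstanceId"]

    # Block until EC2 reports the instance as "running".
    ec2.get_waiter("instance_running").wait(InstanceIds=[instance_id])

    # Monitor: read back the instance's current state.
    described = ec2.describe_instances(InstanceIds=[instance_id])
    print(described["Reservations"][0]["Instances"][0]["State"]["Name"])

    # Terminate: hourly instance charges stop once the instance is gone.
    ec2.terminate_instances(InstanceIds=[instance_id])
    return instance_id


if __name__ == "__main__":
    # Offline check of the request shape; no AWS call is made here.
    print(build_launch_request("ami-00000000", "m5.large"))
```

Wrapping the calls in small functions like this is what makes “scale on the fly” practical: a script can launch or tear down a batch of servers in a loop instead of clicking through the wizard each time.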

But what makes it cool…

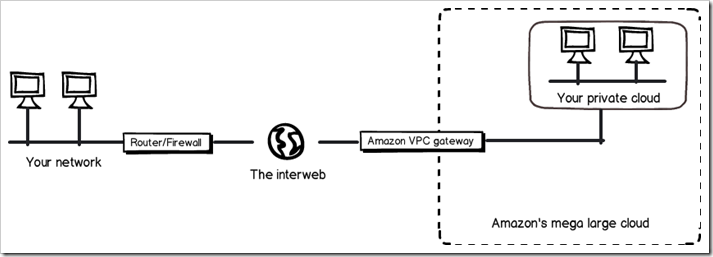

Now I am sure that some of you might be thinking “big deal… any virtual machine hoster can do that.” I agree, and when I first saw this capability I just saw it as a larger-scale VMware/Xen type deployment. But what really made me sit up and take notice was Amazon’s Virtual Private Cloud (VPC) functionality. The super-duper short version of VPC is that it allows you to extend your corporate network into the Amazon cloud. It does this by allowing you to define your own private network and connecting to it via site-to-site VPN technology. To see how it works diagrammatically, check out the image below.

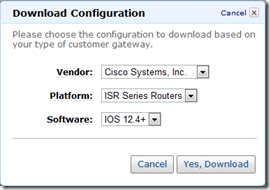

Let’s use an example to understand the basic idea. Let’s say your internal IP address range at your office is 10.10.10.0 to 10.10.10.255 (a /24 for the geeks). With VPC, you tell Amazon “I’d like a new IP address range of 10.10.11.0 to 10.10.11.255”. You are then prompted to tell Amazon the public IP address of your internet router. The screenshots below show what happens next:
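The address plan in this example can be sanity-checked with Python’s standard `ipaddress` module. This is just an illustrative check of my own, not something Amazon’s wizard does for you: the key point is that the two /24 ranges must not overlap, otherwise neither end of the tunnel could tell which side an address lives on.

```python
import ipaddress

# Address ranges from the example: the office LAN and the new VPC range.
office = ipaddress.ip_network("10.10.10.0/24")
vpc = ipaddress.ip_network("10.10.11.0/24")


def describe(net: ipaddress.IPv4Network) -> str:
    """First address, last address and size of a network."""
    return f"{net[0]} - {net[-1]} ({net.num_addresses} addresses)"


# The ranges must be disjoint, or routing across the VPN becomes ambiguous.
if office.overlaps(vpc):
    raise ValueError("VPC range collides with the office LAN")

print("office:", describe(office))  # office: 10.10.10.0 - 10.10.10.255 (256 addresses)
print("vpc:   ", describe(vpc))     # vpc:    10.10.11.0 - 10.10.11.255 (256 addresses)

# Side note: the two adjacent /24s together form a single /23.
print(list(ipaddress.collapse_addresses([office, vpc])))  # [IPv4Network('10.10.10.0/23')]
```

Picking an adjacent range is convenient but not required; any private range that does not clash with the office network would do.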

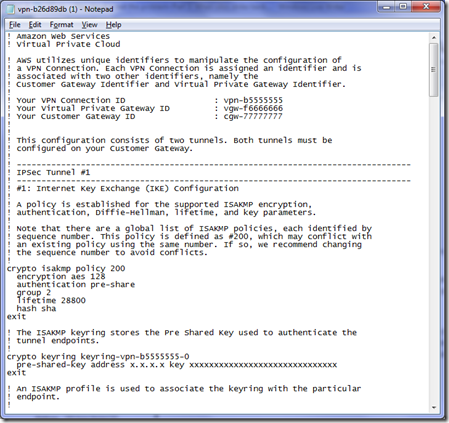

The first screenshot asks you to choose what type of router is at your end. Available choices are Cisco, Juniper, Yamaha, Astaro and generic. The second screenshot shows the sample configuration that is downloaded. Now, any Cisco-trained person reading this will recognise what is going on here. This is the automatically generated configuration to be added to an organisation’s edge router to create an IPsec tunnel. In other words, we have extended our corporate network itself into the cloud. Any service can be run on such a network, not just SharePoint. For smaller organisations wanting the benefits of off-site redundancy without the costs of a separate datacentre, this is a very cost-effective option indeed.

For the Cisco geeks, the actual configuration is two GRE tunnels that are IPsec encrypted. BGP is used for route table exchange, so Amazon can learn what routes to tunnel back to your on-premise network. Furthermore, Amazon allows you to manage firewall settings at the Amazon end too, so you have an additional layer of defence beyond your IPsec router.
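To make the BGP point a little more concrete: once the office range has been learned over the tunnel, deciding where a packet goes is a longest-prefix-match lookup over a route table. The sketch below is a simplified, hypothetical model using the example ranges; the target names `local` and `vpn-tunnel` are made up for illustration and this is not Amazon’s actual route table format. With no default route in the table, internet addresses simply have nowhere to go.

```python
import ipaddress
from typing import Optional

# Hypothetical route table on the Amazon side; target names are illustrative only.
ROUTES = {
    ipaddress.ip_network("10.10.11.0/24"): "local",       # the VPC subnet itself
    ipaddress.ip_network("10.10.10.0/24"): "vpn-tunnel",  # office range learned via BGP
}


def pick_route(destination: str, routes=ROUTES) -> Optional[str]:
    """Longest-prefix match: the most specific route containing the address wins."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, target) for net, target in routes.items() if addr in net]
    if not matches:
        return None  # no matching route at all, e.g. no path to the internet
    return max(matches, key=lambda m: m[0].prefixlen)[1]


for dest in ("10.10.10.25", "10.10.11.7", "203.0.113.10"):
    print(dest, "->", pick_route(dest))
# 10.10.10.25 -> vpn-tunnel
# 10.10.11.7 -> local
# 203.0.113.10 -> None
```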

This is called Virtual Private Cloud (VPC) and, when configured properly, it is very powerful. Note the “P” is for private. No server deployed to this subnet is internet accessible unless you choose to make it so. This allows you to extend your internal network into the cloud and gain all the provisioning, redundancy and scalability benefits without direct exposure to the internet. As an example, I did a hosted SharePoint extranet where we used SQL log shipping of the extranet content databases back to a DMZ network for redundancy. Try doing that on Office 365!

This sort of functionality shows that Amazon is a mature, highly scalable and flexible IaaS offering. They have been in the business for a long time and it shows: their full suite of offerings is far more expansive than I can possibly cover here. Accordingly, my Amazon experiences will be the subject of a more in-depth blog post or two in future. But for now I will force myself to stop so the non-technical readers don’t get too bored. 🙂

So what went wrong?

So after telling you how impressive Amazon’s offering is, what could possibly go wrong? As with the Office 365 issue covered in part 2, absolutely nothing was wrong with the technology. To understand why, I need to explain Amazon’s pricing model.

Amazon offer a couple of ways to pay for servers (called instances in Amazon speak). An on-demand instance is charged at a per-hour price while the server is running. The more powerful the server is in terms of CPU, memory and disk, the more you pay. To give you an idea, a Windows box with 8 CPUs and 16GB of RAM, running in Amazon’s “US East” region, will set you back $0.96 per hour (as of 27/12/11). If you do the basic math on that, it equates to around $8,409 per year, or $25,228 over three years. (Yeah, I agree that’s high, even when you consider that you get all the trappings of a highly scalable and fault-tolerant datacentre.)
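The on-demand arithmetic is simply the hourly rate multiplied by the hours in a year (8,760, ignoring leap years). A quick check in Python, using `Decimal` to avoid floating-point rounding on money:

```python
from decimal import Decimal

HOURS_PER_YEAR = 24 * 365  # 8,760; ignores leap years


def on_demand_cost(hourly_rate: Decimal, years: int) -> Decimal:
    """Cost of running one on-demand instance 24x7 for the given number of years."""
    return hourly_rate * HOURS_PER_YEAR * years


rate = Decimal("0.96")  # Windows, 8 CPUs / 16GB, US East, as quoted (27/12/11)
print(on_demand_cost(rate, 1))  # 8409.60
print(on_demand_cost(rate, 3))  # 25228.80
```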

On the other hand, a reserved instance involves making a one-time payment and, in turn, receiving a significant discount on the hourly charge for that instance. Essentially, if you are going to run an Amazon server on a 24x7 basis for more than 18 months or so, a reserved instance makes sense as it considerably reduces cost over the long term. The same server would only cost you $0.40 per hour if you pay $2,800 up front for a 3-year term. Total cost: $13,312 over three years – much better.
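The reserved-instance total, and the point at which it overtakes on-demand pricing, follow from the same quoted figures. Running costs are equal when $0.96 × h = $2,800 + $0.40 × h, so the up-front fee is recovered after $2,800 / ($0.96 minus $0.40) hours. A minimal sketch, assuming continuous 24x7 running and ignoring leap years:

```python
from decimal import Decimal

HOURS_PER_YEAR = 24 * 365          # 8,760; ignores leap years
ON_DEMAND_RATE = Decimal("0.96")   # per hour, as quoted
RESERVED_RATE = Decimal("0.40")    # per hour with a 3-year reservation
UPFRONT = Decimal("2800")          # one-time payment for the 3-year term


def reserved_cost(years: int) -> Decimal:
    """Up-front fee plus discounted hourly charges for 24x7 running."""
    return UPFRONT + RESERVED_RATE * HOURS_PER_YEAR * years


def break_even_hours() -> Decimal:
    """Running hours after which the reservation is cheaper than on-demand."""
    return UPFRONT / (ON_DEMAND_RATE - RESERVED_RATE)


hours = break_even_hours()
print(reserved_cost(3))                         # 13312.00
print(int(hours))                               # 5000
print(round(hours / (HOURS_PER_YEAR // 12), 1))  # 6.8 (months of 24x7 running)
```

With these particular figures the reservation pays for itself after about 5,000 hours, just under seven months of continuous running, which sits comfortably inside the 18-month rule of thumb.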

So with that scene set, consider this scenario: back at the start of 2011, a client of mine consolidated all of their SharePoint cloud services to Amazon from a variety of other hosting providers. They did this for a number of reasons, but it basically boiled down to two facts: 1) they had outgrown the SaaS model and 2) they had a growing number of clients. As a result, requirements from clients were getting more complicated and beyond what most of the hosting providers could cater for. They also received irregular and inconsistent support from their existing providers, as well as some unexpected downtime that reduced confidence. In short, they needed to consolidate their cloud offering and manage their own servers. They were developing custom SharePoint solutions, needed to support federated claims authentication and required disaster recovery assurance to mitigate the risk of going 100% cloud. Amazon’s VPC offering in particular seemed ideal, because it allowed full control of the servers in a secure way.

Now, making this change was not something we undertook lightly. We spent considerable time researching Amazon’s offerings, trying to understand all the acronyms as well as the fine print. (For what it’s worth, I used IBIS as the basis to develop an assessment and the map of my notes can be found here.) As you are about to see, though, we did not check well enough.

Back when we initially evaluated the VPC offering, it was only available in very few Amazon sites (two locations in the USA only) and the service was still in beta. This caused us a bit of a dilemma at the time because of the risk of relying on a beta service. But we were reassured when Amazon confirmed that VPC would eventually be available in all of their datacentres. We also stress tested the service for a few weeks; it remained stable, and we developed and tested a disaster recovery strategy involving SQL log shipping and a standby farm. We also purchased reserved instances from Amazon: these servers were going to be there for the long haul, so we pre-paid to reduce the hourly rates. Quite a complex configuration was provisioned in only two days and we were amazed by how easy it all was.

Things hummed along in this fashion for 9 months and the world was a happy place. We were delighted when Amazon notified us that VPC had come out of beta and was now available in any of Amazon’s datacentres around the world. We had only used the US datacentre because it was the only location available at the time; now we wanted to transfer the services to Singapore. My client contacted Amazon about some finer points of such a move and was informed that they would have to pay for their reserved instances all over again!

What the?

It turns out reserved instances are not transferable! Essentially, Amazon were telling us that although we paid for a three-year reserved instance and had only used it for 9 months, moving the servers to a new region would mean paying all over again for another 3-year reservation. According to Amazon’s documentation, each reserved instance is associated with a specific region, which is fixed for the lifetime of the reserved instance and cannot be changed.

“Okay,” we answer, “we can understand that in circumstances where people move to another cloud provider. But in our case, we are not leaving.” We had used only around a quarter of the reserved term (9 of 36 months). So surely Amazon could pro-rata the unused amount and offer that as a credit when we re-purchase reserved instances in Singapore? I mean, we will still be hosting with Amazon, so overall they will not be losing any revenue at all. On the contrary, we will be paying them more, because we will have to sign up for an additional 3 years of reserve when we move the services.
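The pro-rata credit being asked for is easy to quantify. As a hypothetical illustration only, applying the example server’s figures from the pricing section ($2,800 up front for a 36-month term) rather than the client’s actual bill, and the 9 months of use mentioned earlier, the unused share of the up-front payment would be:

```python
from decimal import Decimal


def prorata_credit(upfront: Decimal, term_months: int, months_used: int) -> Decimal:
    """Unused portion of a reservation's up-front fee, pro-rated by month.

    Illustrative only: Amazon did not offer such a credit.
    """
    if not 0 <= months_used <= term_months:
        raise ValueError("months_used must lie within the term")
    months_left = term_months - months_used
    return upfront * months_left / term_months


# Example server from the pricing section: $2,800 for 3 years, used for 9 months.
print(prorata_credit(Decimal("2800"), 36, 9))  # 2100
```

Only the up-front fee is pro-rated here, since the discounted hourly charges are already paid as the server runs.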

So we ask Amazon whether that can be done. “Nope,” comes back the answer from Amazon’s not-so-friendly billing team, with one of those trite and grossly insulting “Sorry for any inconvenience this causes” closing sentences. After more discussions, it seems that internally within Amazon, each region (or datacentre within each region) is its own profit centre. Therefore, in typical silo fashion, the US datacentre does not want to pay money to the Singapore operation, as that would mean the revenue we paid would no longer be recognised against them.

Result? Customer is screwed, all because the Amazon fiefdoms don’t like sharing the contents of the till. But hey – the regional managers get their bonuses, right?

Conclusion

Like part 2 of this cloud computing series, this is not a technical issue. In our experience, Amazon’s cloud service has been reliable and has performed well. In this case, we are turned off by the fact that their internal accounting procedures create a situation that is not great for customers who wish to remain loyal to them. In a post about the danger of short-termism and ignoring legacy, I gave the example of how dumb it is for organisations to measure success by how long it takes to close a helpdesk call. When such a KPI is used, those in support roles have little choice but to try and artificially close calls when users’ problems have not been solved, because that’s how they are deemed to be performing well. The reality, though, is that rather than measuring happy customers, this KPI simply rewards the helpdesk operators who have managed to game the system by getting callers off the phone as soon as they can.

I feel that Amazon are treating this as an internal accounting issue, irrespective of client outcomes. Amazon will lose my client’s business because of this: they host enough servers that the financial impost of paying all over again is much more than the cost of transferring to a different cloud provider. While VPC and automated provisioning of virtual servers is cool and all, at the end of the day many hosting providers can offer this if you ask them. Although it might not be as slick or fancy as Amazon’s automated configuration, it is nonetheless very doable and the other providers are playing catch-up. Like Apple, Amazon are enjoying the benefits of being first to market with their service, but as competition heats up, others will rapidly bridge the gap.

Thanks for reading

Paul Culmsee
