Demystifying SharePoint Performance Management Part 9 – Don’t believe everything you R/W

Hi and welcome to Part 9 (bloody hell… nine!) of my series on trying to demystify SharePoint performance management a bit. If by any chance you have been asked to provide some sizing information for your organisation and you are finding the resources online a bit overwhelming, this series is for you. If you have been a part of our varied journey so far, the last few posts have been all about Disk IO performance in the form of latency, IOPS and MBPS. In the last two articles, we have been learning about the different IO patterns that SQL Server is likely to utilise, as well as using the jackhammer known as the SQLIO utility, that is used to simulate those IO patterns on unsuspecting disk infrastructure.

Now just to set the scene for this post (and conveniently perform some product placement), I recently published a book called “The Heretics Guide to Best Practices”. Now being the author and all, I am going to suggest you buy it because it is a completely riveting read! :-).

Now apart from blatant product placement, the real reason I mention it is because one of the chapters is called “Myths, Memes and Methodologies”. In it, we examine why some ideas gain legitimacy, even though they are often based on completely dodgy foundations. I mention this here, because in terms of SQL disk IO sizing, something similar has happened with Microsoft’s published material on the topic. So the focus of this article is to finish off our discussion on understanding disk IO patterns, while lifting the lid on some of the inconsistencies in the material that end up being repeated by SharePoint consultants as gospel to their unsuspecting disciples.

Now harking way back to part 1 and the notion of lead vs. lag indicators, we have so far used SQLIO essentially as a lead indicator. While SQLIO puts a real load on disk infrastructure and faithfully reports the resulting IOPS, latency and MBPS, the reality is it can never truly capture the nuances of a production SharePoint farm doing its thing. But in terms of a lead indicator that is okay. After all, a lead indicator by definition cannot guarantee an outcome. It can merely suggest that an outcome should be able to be met.

So while we are thinking about the lead indicator world view, some of you might have noticed that I have not yet suggested what the minimum conditions of satisfaction should be for the disk infrastructure used to underpin SharePoint. This has been deliberate until now, because I felt that it was critical to first understand the relationship between the size of a disk IO operation and its effect on IOPS, latency and MBPS. To that end, hopefully I have instilled a reflex in you where, if you are given an arbitrary latency, IOPS or MBPS figure that you have to meet, you immediately ask questions like, “What sort of IO patterns?” or “How large is the IO request typically going to be?” or “Is the IO random or sequential?”

When whitepapers mislead…

Now we are about to get into one area where Microsoft’s published documentation is quite weak. Remember the 367 page “Capacity Planning for Microsoft SharePoint Server 2010” whitepaper? Starting at page 326, there is a section with the promising title of “Estimate Core Storage and IOPS needs” (this topic is also available separately as a TechNet article). The problem is that despite the title, very little IOPS guidance is actually given. Instead, the content in the section overwhelmingly speaks about estimating storage requirements. In fact, the best you get is one explicit mention of IOPS in relation to the SharePoint Search service application, which states the following:

The IOPS requirements for Search are significant.

- For the Crawl database, search requires from 3,500 to 7,000 IOPS.

- For the Property database, search requires 2,000 IOPS.

Note: For the purpose of the rest of this article, let’s add the above figures together and simply say between 5,500 and 9,000 IOPS for search.

Do you see the problem here? This is simply an arbitrary IOPS figure with no guidance as to the IO patterns underpinning it. What about latency or the IO request size that you need to assume? Unfortunately, no guidance is given for these questions which makes this quoted figure not overly helpful. Plus, as you will soon see below, Microsoft seemingly contradict themselves elsewhere in the same whitepaper…

So what are good numbers to use?

In the absence of any hard data, the best way to deal with storage requirements is to think in terms of lead indicators. Indicators from a lead point of view, can be framed as targets – something to aim for. Targets then can be broken down into different categories ranging from “cover your arse” to “above and beyond”:

- Aspired target: The “this would be bloody fantastic if we could get there” target.

- Agreed target: The “this is what we are going to deliver no matter what” target.

- Minimum Condition of Satisfaction (MCOS) target: The “If we don’t achieve this we may as well pack up and go home” target.

So given these sorts of targets, what should the disk IO performance targets for SharePoint be? To work this out, we can utilise information already out there. Well…that is, we could if the information out there wasn’t so disparate and disconnected. So unfortunately, it takes some digging before you can find what you need.

Our first port of call in this regard is indeed Microsoft and the very same capacity planning and configuration guide that I criticised earlier for poorly dealing with IOPS. Hidden in the bowels of that document, the following statement is made on page 334 (emphasis mine):

Any storage architecture must support your availability needs and perform adequately in IOPS and latency. To be supported, the system must consistently return the first byte of data within 20 milliseconds.

So the way I look at it, a 20ms latency should be our MCOS target (see the explanation above for MCOS). If we consistently do worse than this, then we do not have a lot of assurance about the scalability of the disk IO subsystem being used for SharePoint. But like the arbitrary IOPS figure quoted in the previous section, I wonder if readers have spotted the problem with specifying this latency figure alone?

In both cases, don’t forget the almost symbiotic relationship between IO size, IOPS and latency. If we assumed that all IO operations were small (for example SQL’s page size of 8KB) then we could likely stay way under the 20ms limit with a more modest disk infrastructure. But to sustain the same latency with a larger IO size would require a faster disk subsystem. Why? Well, as we discussed in part 6, if the IO requests are larger, such as 64KB, then latency will go up because servicing larger requests takes longer than servicing smaller ones. Therefore, if we were to assume a larger IO size, we would need more/faster disks to be able to meet the same 20ms latency KPI.

So what disk IO size should we assume to give context to a latency figure? Some insight can be found back in part 6, when we examined SQL IO characteristics and established that IO sizes will likely be much more varied than SQL’s base IO unit of 8KB pages. My suggestion, therefore, is to test 8KB but also ensure that 64KB can meet the latency target. This is because 64KB represents a reasonable middle ground within the 8KB to 256KB range that most of SQL Server’s IO operations will fall within. Thus, if a SQLIO test using random reads/writes at 64KB consistently indicates more than 20ms latency, then you should probably ask your storage people to take another look at it.

By the way, if you really want to give your storage guys a challenge, keep jacking up the IO size!
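To put some rough numbers on why the IO size matters, here is a minimal Python sketch of the relationship between IOPS, IO size and MBPS. The function name `mbps` and the 1,000 IOPS figure are purely illustrative assumptions (they do not come from any whitepaper), and 1 MB is taken as 1024 KB.

```python
# Sketch only: throughput implied by a fixed IOPS rate at different IO sizes.
# The 1,000 IOPS rate is an arbitrary illustrative value, not a recommendation.
KB_PER_MB = 1024


def mbps(iops: float, io_size_kb: float) -> float:
    """Return throughput in MB/s for a given IOPS rate and IO size in KB."""
    return iops * io_size_kb / KB_PER_MB


# The same IOPS figure moves very different amounts of data per second
# depending on whether requests are 8KB, 64KB or 256KB.
for size_kb in (8, 64, 256):
    print(f"{size_kb:>3}KB at 1000 IOPS = {mbps(1000, size_kb):.1f} MB/s")
```

The point of the sketch is simply that the same IOPS figure asks the disks to move 32 times more data at 256KB than at 8KB, which is why a latency or IOPS target without an IO size attached does not tell you much.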

What about aspired latency targets?

So if you are cool with the notion that the minimum condition of satisfaction for a random IO test using 64K size should be less than 20ms latency, what about aiming higher with agreed or aspirational targets?

Luckily for all of us, we can once again stand on the shoulders of giants. In this case, Bob Duffy indirectly answers this question by providing what he considers to be the indicators for optimal SQL Server performance in general. In an excellent article with the rather appropriate title of “How to Specify SQL Storage Requirements to your SAN Dude”, Bob makes the following recommendations:

- SQL Data files must have a response time averaging about 8ms and a maximum response time of around 20ms using 64k size IOs and that are random in nature

- SQL Log Files must have a write response time averaging from 1-5ms. use 64k IO size and are sequential in nature

The nice thing about specifying a target or benchmark like this, is that you are able to sidestep discussions on RAID levels, stripe sizes and many other things that SAN nerds find interesting. We keep things focused on the lead indicators and in effect state “If you can meet these figures, configure it any way you like.” This gives the SAN guys the freedom to do their job, while giving you an indicator that can give you confidence in the disk infrastructure. So if we were to distil the figures above into lead indicator targets for storage gurus, it might look something like this:

- MCOS target: Less than 20ms latency for random IO requests of 64KB

- Agreed target: Average 8ms latency for random IO requests of 64KB with no more than 20ms max latency. Less than 5ms latency for sequential log IO

- Aspired target: No more than 8ms latency for random SQL IO requests of 64KB and average of 1ms latency sequential log IO with max never going above 5ms
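If you want to check test results against these targets, something like the following Python sketch would do it. The names (`Measurement`, `data_file_target`, `log_file_target`) are hypothetical, and I have made two assumptions in interpreting the targets: that the MCOS figure applies to average latency, and that “no more than 8ms” in the aspired target applies to the maximum.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    """Average and maximum latency (ms) reported by a 64KB IO test."""
    avg_ms: float
    max_ms: float


def data_file_target(m: Measurement) -> str:
    """Highest target met by a random 64KB IO test (SQL data files)."""
    if m.max_ms <= 8:                      # assumption: aspired applies to max
        return "aspired"
    if m.avg_ms <= 8 and m.max_ms <= 20:
        return "agreed"
    if m.avg_ms < 20:                      # assumption: MCOS applies to average
        return "mcos"
    return "below mcos"


def log_file_target(m: Measurement) -> str:
    """Highest target met by a sequential 64KB IO test (SQL log files)."""
    if m.avg_ms <= 1 and m.max_ms <= 5:
        return "aspired"
    if m.avg_ms < 5:
        return "agreed"
    return "below agreed"
```

For example, `data_file_target(Measurement(avg_ms=8, max_ms=20))` returns `"agreed"`, while a test averaging 25ms would return `"below mcos"` and warrant a chat with the storage team.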

Now in the ProData article, Bob made a slightly tongue in cheek point that sums up the above thinking really well, as well as giving insight to a critical aspect we have not considered so far…

Nowadays most SQL consultants try and not talk about RAID types and types of disk, it can be best to leave that up to the storage guys. If the storage team can meet my requirement for 5,000 random 64k read/write IOPs at 8ms latency by using 50 old SATA drives at 5,400 rpm in RAID 5 then knock yourself out – I’m happy. Well maybe I’m happy till we have that chat about Service Level requirements during a disk degrade event but that’s a different story…

If you look closely at Bob’s quote, you will see that he has also specified the last critical variable in the mix. Bob’s mention of “5000 random 64k read/write IOPS” is in reference to another point he makes. Without an IOPS figure to work from, the targets we have come up with are effectively meaningless. Quoting Bob:

The main thing to specify apart form your latency requirement is the throughput (IOPs). It is no good meeting the 8ms target for 100 IOPs and then finding your workloads needs 5,000 IOPs. You wont be able to meet the 8ms target!!

Consider it this way… a SharePoint site that services 100,000 users will process a lot more IO requests than a site that services 10 users. With the latter, it is quite likely that the latency targets we have been talking about (even the aspirational ones) would be pretty easy to meet with a single disk. (To hark back to our shopping centre metaphor, one checkout operator is all that is needed at a corner store, whereas many are needed at the supermarket). This is presumably why Bob has used a figure like 5000 IOPS for his post. It is probably a figure that conveniently represents some fairly heavy disk usage. But it does beg two questions:

- How much IOPS should we use to simulate SharePoint IOPS?

- In the absence of anything else, perhaps 5000 IOPS is a good figure to go with?
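One way to see why a latency target is meaningless without an IOPS figure is Little's Law, which says the average number of requests in flight equals throughput multiplied by latency. This is my own illustration rather than something from Bob's article, and the function name `outstanding_ios` is hypothetical.

```python
def outstanding_ios(iops: float, latency_ms: float) -> float:
    """Average number of IO requests in flight, by Little's Law.

    in-flight requests = arrival rate (per second) x time in system (seconds)
    """
    return iops * latency_ms / 1000.0


# Bob's two cases, both at an 8ms latency target.
light = outstanding_ios(100, 8)    # well under one request in flight on average
heavy = outstanding_ios(5000, 8)   # dozens of requests in flight at once
print(f"100 IOPS at 8ms  -> {light:.1f} IOs in flight")
print(f"5000 IOPS at 8ms -> {heavy:.1f} IOs in flight")
```

At 100 IOPS the disk subsystem rarely has more than one request to deal with at a time. At 5000 IOPS it has to service many requests concurrently and still return each one within 8ms, which is a very different proposition (more checkout operators, in other words).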

Don’t believe all you read…

Now if you go back and read the start of this post, you will recall I mentioned that Microsoft stated IOPS figures for the SharePoint search application databases that ranged between 5,500 and 9,000. That would indicate that Bob’s base figure of 5000 is a bit low, especially given that SharePoint has many other components beyond search that have not been taken into account. So to put Bob’s 5000 IOPS figure in perspective, let’s re-examine Microsoft’s trusty capacity planning whitepaper. One of the great things about this document is that Microsoft detailed the performance stats of a typical day in the life of their internal SharePoint environments. Since Microsoft are so large, they have different SharePoint farms for different collaborative scenarios. The scenarios they covered were:

- Enterprise Intranet environment (also described as published intranet). In this scenario, employees view content like news, technical articles, employee profiles, documentation, and training resources. It is also the place where all search queries are performed for all of the other SharePoint environments within the company.

- Enterprise intranet collaboration environment (also described as intranet collaboration). This scenario is where important team sites and publishing portals are housed. They are typically used for enterprise collaboration, organizations, teams, and projects. Sites created in this environment are used as communication portals, applications for business solutions, and general collaboration. No custom code runs in these sites.

- Departmental Collaboration environment. In this scenario, employees use this environment to track projects, collaborate on documents, and share information within their department.

- Social Collaboration Environment. This is the My Sites scenario. These connect employees with one another and the information that they need. Employees use this environment to present personal information such as areas of expertise, past projects, and colleagues to the wider organization. The environment also hosts personal sites and documents for viewing, editing, and collaboration.

Now reading about these scenarios is highly interesting and Microsoft provides some nice nuggets of information that we will use in a future post. But for now I will stick purely to a disk IOPS perspective. To that end, below are a few fun-filled facts about the number of users in each of the four scenarios:

- Enterprise Intranet environment: 33580 unique users per day, with an average of 172 concurrent users and a peak concurrency of 376 users.

- Enterprise intranet collaboration environment: 69702 unique users per day, with an average of 420 concurrent users and a peak concurrency of 1433 users.

- Departmental Collaboration environment: 9186 unique users per day, with an average of 189 concurrent users and a peak concurrency of 322 users.

- Social Collaboration Environment: 69814 unique users per day, with an average of 639 concurrent users and a peak concurrency of 1186 users.

So now you have a sense of the size of these scenarios and, as an added bonus, have gotten a glimpse into the difference that usage patterns can make. For example, social collaboration and enterprise intranet collaboration have a similar number of unique users, but social has higher average concurrency and a lower peak. But what about IOPS?

In the document, IOPS is split into reads per second and writes per second, so I added them to estimate IOPS. The results are rather surprising…

| Metric | Social Collaboration | Departmental Collaboration | Published intranet | Intranet Collaboration |
| --- | --- | --- | --- | --- |
| Unique visitors | 69814 | 9186 | 33580 | 69702 |
| Average concurrent | 639 | 189 | 172 | 420 |
| Max concurrent | 1186 | 322 | 376 | 1433 |
| IOPS | 941 | 74 | 409.66 | 409.66 |

Now while it might be tempting to ponder why social collaboration has over double the IOPS, yet lower peak concurrency than enterprise intranet collaboration, we are not going to worry about that here. Besides, we actually covered some of it already when we used logparser to get insights into usage patterns. What I will instead do is draw your attention to the fact that none of the IOPS scenarios come anywhere near the 5000 IOPS figure cited by ProData or Microsoft’s 5500-9000 IOPS cited for search (in the very same capacity planning document I might add!)

So something is amiss. If an organisation the size of Microsoft can have almost 70000 unique users per day, with a peak concurrency of 1433 users, and total only 410 IOPS, then where the hell did the 5500-9000 IOPS figure for search alone come from? Even if you take the scenario with the highest IOPS (the Social collaboration scenario with 941 IOPS), that’s still less than one fifth of the 5500 IOPS at the low end of the search IOPS figure.
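If you want to check my arithmetic, the following Python sketch compares each case study against the reference figures. The IOPS and concurrency numbers are the ones from the table above; the dictionary layout and function names are just my own illustrative choices.

```python
# Figures taken from the case study table above (peak concurrency and IOPS).
FARMS = {
    "Social Collaboration":       {"max_concurrent": 1186, "iops": 941},
    "Departmental Collaboration": {"max_concurrent": 322,  "iops": 74},
    "Published intranet":         {"max_concurrent": 376,  "iops": 409.66},
    "Intranet Collaboration":     {"max_concurrent": 1433, "iops": 409.66},
}

SEARCH_LOW_IOPS = 5500   # low end of Microsoft's quoted search figure
PRODATA_IOPS = 5000      # Bob Duffy's example figure


def share_of(iops: float, reference_iops: float) -> float:
    """Fraction of a reference IOPS figure that a measured figure represents."""
    return iops / reference_iops


def iops_per_peak_user(farm: dict) -> float:
    """Reported IOPS divided by peak concurrent users for one farm."""
    return farm["iops"] / farm["max_concurrent"]


for name, farm in FARMS.items():
    print(
        f"{name}: {share_of(farm['iops'], SEARCH_LOW_IOPS):.1%} of 5500, "
        f"{share_of(farm['iops'], PRODATA_IOPS):.1%} of 5000, "
        f"{iops_per_peak_user(farm):.2f} IOPS per peak concurrent user"
    )
```

The IOPS-per-peak-user column is not something the whitepaper reports. I have included it only because it makes the spread between the scenarios easier to see.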

Now I am also suspicious that two different case studies have the exact same IOPS figure. If you compare the “published intranet” scenario with the “intranet collaboration” scenario, one has half the visitors, yet both have precisely the same IOPS (right down to the decimal places). That seems highly unlikely to me and I suggest that a mistake has been made. Given that intranet collaboration has the highest max concurrency figure, I would have expected its IOPS to be higher than it is. Hmmm…

What can we take away from this? For one, the capacity planning document could seriously do with a rewrite in this area. Secondly, I don’t have a lot of faith in those IOPS figures quoted (although I have more confidence in the case studies than in the arbitrary figures specified for search).

So if we put aside the doubt created by the issues with the capacity planning guide, there is one really interesting fact that remains… none of the reported IOPS figures came anywhere near 5000 IOPS.

Insights from HP…

It turns out that Hewlett Packard also did some load testing of SharePoint 2010 (among other things) and published a whitepaper called the “HP performance and configuration guide for Microsoft SharePoint 2010”. In this guide, they detail the results of a scenario they tested based on what they termed an “Enterprise Workload”. The guide covers the definition of the enterprise workload in loving detail, but the gist of it is that it covers the following areas:

- Document Center (30% of operations): check out, download, upload and check in documents

- Team Sites (20% of operations): work with calendars, discussions and documents

- Portal Sites (20% of operations): work with events, announcements and surveys

- My Sites (10% of operations): work with documents in personal documents library

- Search (20% of operations): submit searches with random words or phrases

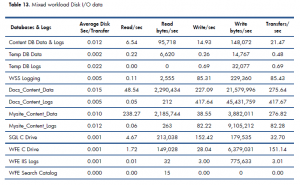

HP then simulates 500 concurrent users performing the actions above. In Table 13 of the report (page 28 of their document and reproduced here), HP outline the performance and even break down the IO characteristics of each SharePoint database (which is really handy indeed). Adding up the last column of transfers/sec (which is essentially IOPS) we get a result of 1347.33 IOPS.

Thus we are still considerably under the 5000 IOPS that Bob Duffy suggests.
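As a very rough back-of-the-envelope exercise, we can work out what HP's result means per concurrent user and how many users it would take to reach 5000 IOPS. Be warned that this Python sketch assumes IOPS scales linearly with concurrent users, which is my simplifying assumption and almost certainly not how a real farm behaves. The function names are hypothetical.

```python
HP_TOTAL_IOPS = 1347.33     # sum of transfers/sec from HP's Table 13
HP_CONCURRENT_USERS = 500   # simulated concurrent users in HP's test
PRODATA_IOPS = 5000         # Bob Duffy's example figure


def iops_per_user(total_iops: float, users: int) -> float:
    """Average IOPS generated per concurrent user."""
    return total_iops / users


def users_for_iops(target_iops: float, per_user_iops: float) -> float:
    """Concurrent users needed to reach a target IOPS.

    Assumes load scales linearly with users (a simplification).
    """
    return target_iops / per_user_iops


per_user = iops_per_user(HP_TOTAL_IOPS, HP_CONCURRENT_USERS)
needed = users_for_iops(PRODATA_IOPS, per_user)
print(f"{per_user:.2f} IOPS per concurrent user")
print(f"about {needed:.0f} concurrent users to reach {PRODATA_IOPS} IOPS")
```

Under that (generous) assumption, HP's workload would need well over three times as many concurrent users before it troubled the 5000 IOPS mark.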

Conclusion…

Right! Remember our discussion above on MCOS, agreed and aspired targets? For an aspirational target, I think that we can reasonably use 5000 IOPS as a starting point for an enterprise organisation of Microsoft’s size. If we stick with 5000 IOPS, then my suggestion for an aspirational latency target would be:

- no more than 8ms latency for random SQL IO requests of 64KB

- average 1ms (and no more than 5ms max) latency of sequential log IO of 64KB

I think these figures are a pretty good test of a disk subsystem and think that Bob at ProData is therefore pretty close to the mark. Of course, you can use these figures to make your own judgement and adjust accordingly. Provided that you think of them as lead indicators that provide you a level of confidence in your disk infrastructure, you now have the tools and knowhow to run the tests too.

So if there was a moral of the story to this post, it would be to not believe everything you read and always verify espoused reality with actual reality via testing. On that note, the next post will finish off our examination of disk performance by going over 2 additional tools that I think are particularly good for testing assumptions. After that, we will be revisiting Microsoft’s case studies, as well as some findings, insights and recommendations from some additional lab scenarios that Microsoft conducted.

Thanks for reading

Paul Culmsee
